Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

AMD's EPYC Server CPU

If you have read Ian's articles about Zen and EPYC in detail, you can skip this page. For those of you who need a refresher, let us quickly review what AMD is offering.

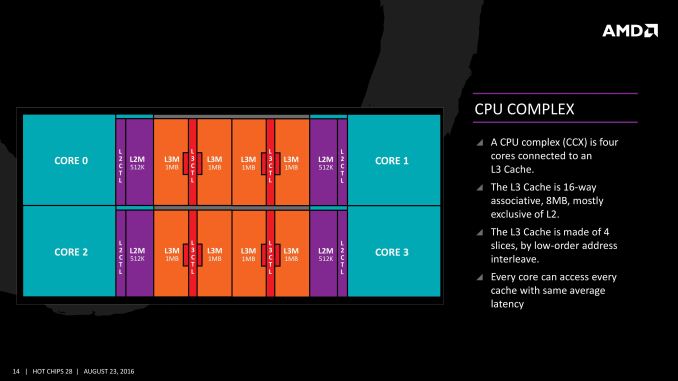

The basic building block of EPYC and Ryzen is the CPU Complex (CCX), which consists of 4 vastly improved "Zen" cores, connected to an L3-cache. In a full configuration each core technically has its own 2 MB of L3, but access to the other 6 MB is rather speedy. Within a CCX we measured 13 ns to access the first 2 MB, and 15 to 19 ns for the rest of the 8 MB L3-cache, a difference that's hardly noticeable in the grand scheme of things. The L3-cache acts as a mostly exclusive victim cache.
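To put those latency figures in perspective, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that a core's accesses are spread uniformly across the full 8 MB of L3; real access patterns will differ, and the function name is ours, not AMD's.

```python
def mean_l3_latency_ns(local_ns, remote_ns, local_mb=2, total_mb=8):
    """Capacity-weighted mean L3 latency within one CCX.

    Assumption (ours): accesses hit every megabyte of the L3 equally often,
    so each slice is weighted by its share of the total capacity.
    """
    remote_mb = total_mb - local_mb
    return (local_mb * local_ns + remote_mb * remote_ns) / total_mb


# Measured figures from the text: 13 ns for the core's own 2 MB,
# 15 to 19 ns for the remaining 6 MB.
best_case = mean_l3_latency_ns(13, 15)
worst_case = mean_l3_latency_ns(13, 19)
print(f"{best_case:.1f} ns to {worst_case:.1f} ns")  # 14.5 ns to 17.5 ns
```

Under that assumption the average lands only 1.5 to 4.5 ns above the best case, which fits the observation that the difference is hardly noticeable.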

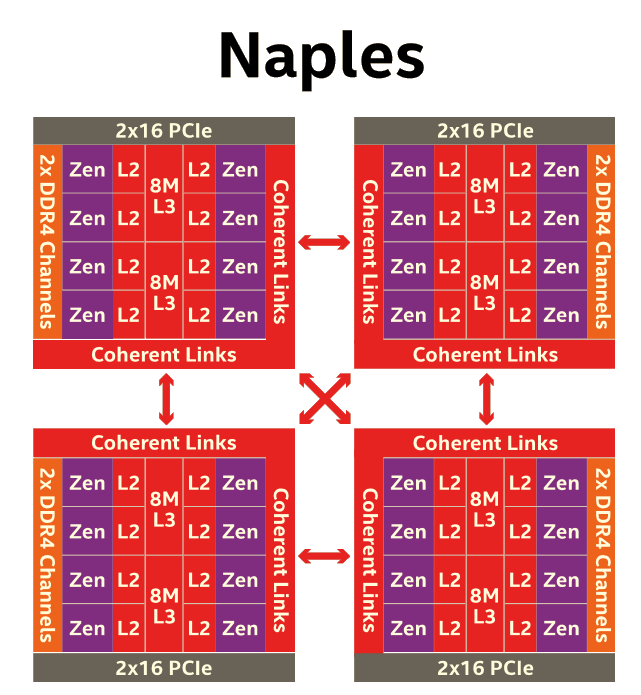

Two CCXes make up one Zeppelin die. A custom fabric, AMD's Infinity Fabric, ties together the two CCXes with their two 8 MB L3-caches, two DDR4 channels, and the integrated PCIe lanes. That topology is not without drawbacks though: there are two separate 8 MB L3 caches instead of one single 16 MB LLC. This has all kinds of consequences. For example, the prefetchers of each core make sure that data of the L3 is brought into the L1 when it is needed. Meanwhile, each CCX has its own dedicated SRAM snoop directory (kept separate from the L3, so there is no capacity hit) that keeps track of seven possible states. In other words, the local L3-cache communicates very quickly with everything inside the same CCX, but every data exchange between two CCXes comes with a tangible latency penalty.

Moving further up the chain, the complete EPYC chip is a Multi Chip Module (MCM) containing 4 Zeppelin dies.

AMD made sure that each die is only one hop away from every other die in the package, ensuring that the off-die latency is as low as reasonably possible.

Meanwhile scaling things up to their logical conclusion, we have 2P configurations. A dual socket EPYC setup is in fact a "virtual octal socket" NUMA system.
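The hierarchy described above (cores in a CCX, CCXes in a die, dies in a package, packages in a 2P system) can be tallied with a short sketch. The class and method names are our own, and treating each die as one NUMA node is our reading of the "virtual octal socket" description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EpycTopology:
    """Building-block counts taken from the text; names are illustrative."""
    cores_per_ccx: int = 4
    l3_mb_per_ccx: int = 8
    ccx_per_die: int = 2
    ddr4_channels_per_die: int = 2
    dies_per_socket: int = 4
    sockets: int = 2

    def cores_per_socket(self) -> int:
        return self.cores_per_ccx * self.ccx_per_die * self.dies_per_socket

    def l3_mb_per_socket(self) -> int:
        # Total capacity only: the L3 is split into separate 8 MB caches.
        return self.l3_mb_per_ccx * self.ccx_per_die * self.dies_per_socket

    def ddr4_channels_per_socket(self) -> int:
        return self.ddr4_channels_per_die * self.dies_per_socket

    def numa_nodes(self) -> int:
        # Assumption: one NUMA node per die.
        return self.dies_per_socket * self.sockets


topo = EpycTopology()
print(topo.cores_per_socket())          # 32
print(topo.l3_mb_per_socket())          # 64
print(topo.ddr4_channels_per_socket())  # 8
print(topo.numa_nodes())                # 8
```

Note that the 64 MB figure is a sum of eight separate 8 MB caches per socket, not one unified last-level cache.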

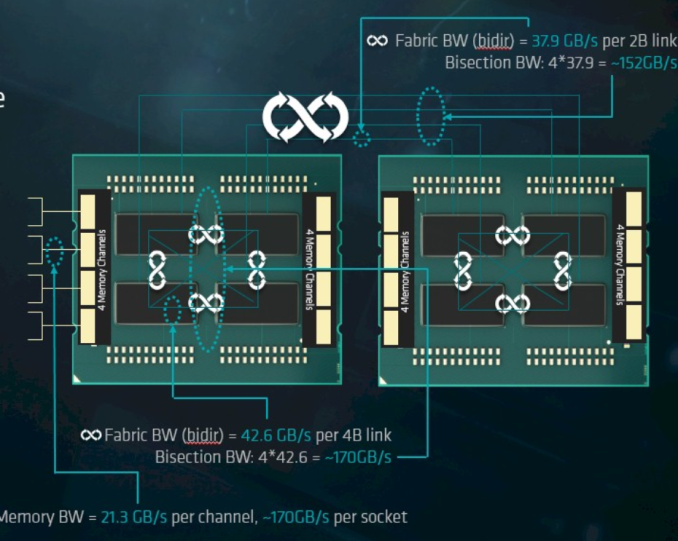

AMD gave this "virtual octal socket" topology ample bandwidth to communicate. The two physical sockets are connected by four bidirectional interconnects, each consisting of 16 PCIe lanes. Each of these interconnect links operates at approximately 38 GB/s (or 19 GB/s in each direction).
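As a quick sanity check on what those link figures add up to, the sketch below simply multiplies out the numbers quoted above. The function and key names are ours, and the totals are theoretical peaks, not measured throughput.

```python
def socket_link_totals(links=4, lanes_per_link=16, gb_s_per_direction=19):
    """Aggregate socket-to-socket figures from the per-link numbers in the text."""
    return {
        "lanes": links * lanes_per_link,
        "gb_s_per_direction": links * gb_s_per_direction,
        "gb_s_bidirectional": links * gb_s_per_direction * 2,
    }


totals = socket_link_totals()
print(totals["lanes"])               # 64
print(totals["gb_s_per_direction"])  # 76
print(totals["gb_s_bidirectional"])  # 152
```

In other words, the four links together use 64 lanes and offer a peak of roughly 76 GB/s in each direction between the two sockets.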

So basically, AMD's topology is ideal for applications with many independently working threads such as small VMs, HPC applications, and so on. It is less suited for applications that require a lot of data synchronization, such as transactional databases. In the latter case, the extra latency of exchanging data between dies and even between CCXes is going to have an impact relative to a traditional monolithic design.

219 Comments

StargateSg7 - Sunday, August 6, 2017 - link

Maybe I'm spoiled, but to me a BIG database is something I usually deal with on a daily basis such as 500,000 large and small video files ranging from two megabytes to over a PETABYTE (1000 Terabytes) per file running on a Windows and Linux network.

What sort of read and write speeds do we get between disk, main memory and CPU and when doing special FX LIVE on such files which can be 960 x 540 pixel youtube-style videos up to full blown 120 fps 8192 x 4320 pixel RAW 64 bits per pixel colour RGBA files used for editing and video post-production.

AND I need for the smaller files, total I/O-transaction rates at around OVER 500,000 STREAMS of 1-to-1000 64 kilobyte unique packets read and written PER SECOND. Basically 500,000 different users simultaneously need up to one thousand 64 kilobyte packets per second EACH sent to and read from their devices.

Obviously Disk speed and network comm speed is an issue here, but on a low-level hardware basis, how much can these new Intel and AMD chips handle INTERNALLY on such massive data requirements?

I need EXABYTE-level storage management on a chip! Can EITHER Xeon or EPyC do this well? Which One is the winner? ... Based upon this report it seems multiple 4-way EPyC processors on waterblocked blades could be racked on a 100 gigabit (or faster) fibre backbone to do 500,000 simultaneous users at a level MUCH CHEAPER than me having to go to IBM or HP for a 30+ million dollar HPC solution!

PixyMisa - Tuesday, July 11, 2017 - link

It seems like a well-balanced article to me. Sure the DB performance issue is a corner case, but from a technical point of view it's worth knowing.

I'd love to see a test on a larger database (tens of GB) though.

philehidiot - Wednesday, July 12, 2017 - link

It seems to me that some people should set up their own server review websites in order that they might find the unbiased balance that they so crave. They might also find a time dilation device that will allow them to perform the multitude of different workload tests they so desire. I believe this article stated quite clearly the time constraints and the limitations imposed by such constraints. This means that the benchmarks were scheduled down to the minute to get as many in as possible and therefore performing different tests based on the results of the previous benchmarks would have put the entire review dataset in jeopardy.

It might be nice to consider just how much data has been acquired here, how it might have been done and the degree of interpretation. It might also be worth considering, if you can do a better job, setting up shop on your own and competing as obviously the standard would be so much higher.

Sigh.

JohanAnandtech - Thursday, July 13, 2017 - link

Thank you for being reasonable. :-) Many of the benchmarks (Tinymembench, Stream, SPEC, etc.) can be repeated, so people can actually check that we are unbiased.

Shankar1962 - Monday, July 17, 2017 - link

Don't go by the labs idiot

Understand what real world workloads are.....understand what owning an entire rack means ......you started foul language so you deserve the same respect from me......

roybotnik - Wednesday, July 12, 2017 - link

EPYC looks extremely good here aside from the database benchmark, which isn't a useful benchmark anyways. Need to see the DB performance with 100GB+ of memory in use.

CarlosYus - Friday, July 14, 2017 - link

A detailed and unbiased article. I'm awaiting more tests as testing time passes.

3.2 GHz is a moderate Turbo for AMD EPYC, I think AMD could push it further with a higher thermal envelope i/o 14 nm process improvement in the coming months.

mdw9604 - Tuesday, July 11, 2017 - link

Nice, comprehensive article. Glad to see AMD is competitive once again in the server CPU space.

nathanddrews - Tuesday, July 11, 2017 - link

"Competitive" seems like an understatement, but yes, AMD is certainly bringing it!

ddriver - Tuesday, July 11, 2017 - link

Yeah, offering pretty much double the value is so barely competitive LOL.