In The Lab: The Netgear XS724EM, a 24-port 2.5G/5G/10GBase-T Switch

by Ian Cutress on September 28, 2018 3:00 PM EST - Posted in

- Networking

- NetGear

- 10GBase-T

- 10GbE

- XS724EM

For a special occasion, and with what looked like a pricing error, I decided to splash out on a 10GBase-T switch for my testing lab. Coming in at almost £800, reduced from £1900, this beast was not cheap, but it was surprisingly below my personal cost-per-port threshold for getting into the 10-gigabit game. Rather than review the switch (how do you review a switch anyway?), I just want to go through what this thing is and what I can do with it. Plus some rough point-to-point bandwidth speeds.

The Quest for 10G on Copper

One of my personal crusades in recent years has been to push 10-gigabit networking – specifically Ethernet over copper (10GBase-T) – into a price range that is more amenable to home users. For a long time, this technology has been priced for commercial and enterprise: upwards of $100 per port for the switch and $100-$200 per port for the add-in cards. This is partly because the technology has a lot of enterprise bells and whistles, such as QoS, but also there has never been a big drive for more than gigabit Ethernet in the home.

Recently this changed somewhat. After a decade of Intel’s 10G silicon on the shelves, Aquantia came in and started offering add-in cards below $100 – and not only for 10G but also the new 2.5G and 5G standards as well. Their idea is to expand the market for this technology, given that they’ve been in the backhaul and networking backbone markets for a while. They had a two-year lead over others on the 2.5G/5G silicon, but the key issue (as I explained to them over a year ago) was that in order to make it happen in the home it would require switches. These switches could either be managed or unmanaged, but really there needs to be a $50/port or $30/port series of switches for multi-gigabit to really take off. I made an online poll just for this.

For those waiting for Multi-Gig (5G/10G) switches to come down in price, at what point would you pay for a 5-port switch (if you were buying one / it fit into your plan)? Describe your use case in the comments [POLL]

— Ian Cutress (@IanCutress) May 31, 2018

Out of 137 voters at the time, about 10% said they would jump on the technology at $80 per port. Around a third said $50 per port, and 60% or so said $30 per port. To be honest, these results were around what I expected. Personally, I think a $250 5-port switch would be a great point to enter the market.

All that being said, and as much as the good folks at Aquantia agree with me, they don’t make the switches – it’s up to the Netgears, the D-Links, the TP-Links, and such to actually build them. I don’t have contacts with any of them to say what their thoughts are, but they haven’t been as quick as I hoped. One thing is that, I guess, they don’t want to build cheap 10G switches which might pull business away from the high margin enterprise hardware.

The State of 10GBase-T

A while back, before Aquantia burst onto the scene, we did a piece about every consumer motherboard with 10GBase-T built in. This article saw insane traffic for a short piece, but it also showed that every one of those motherboards was using Intel’s X540-T2 controller chip. For these boards, the chip was expensive (adding ~$250 to the board retail price), power hungry, and it required a good number of PCIe lanes. The upside was that most of these boards were dual port.

Since then, we’ve seen boards with Aquantia AQC107 (and AQC108) chips on board, which raise the price of the board by $70-$100 for a single port, but this is still a far more accessible way of enabling anything better than gigabit Ethernet on a PC. Then there's the range of 10GbE PCIe cards available, running at around $100.

As for the switches, the only options were a number of managed 8-port models from the likes of Netgear, such as the Netgear XS708E, which was around the $750 mark. Shelling out almost $80-$100 per port (after taxes), as we saw in the poll above, is a little insane for a home network and doesn’t appeal to very many users.

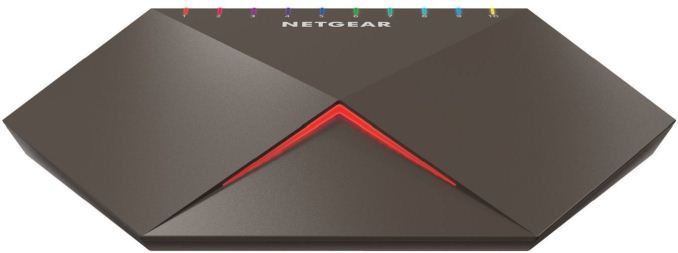

In the last year or so, there have been a number of switches that have hit the market offering two 10GBase-T ports and eight 1G ports. This includes switches such as the ASUS XG-U2008, which has been on sale for $250-$300 or so, the Netgear gaming-focused GS810EMX at $250-$300, and the Netgear GS110EMX which is a non-gaming version for slightly less. The problem with these switches is that they only have two 10G ports – there’s no way to make a ‘tree’ from them, so each one essentially becomes an expensive point-to-point connection, given the cheap cost of gigabit switches.

The Netgear Gaming 2x10G + 8x1G managed switch

So as of this week, the state of play was this for 10G offerings:

- £175 / $200 for a 2-port 10GBase-T (GS110EMX), $100 per port

- £395 / $435 for a 4-port 10GBase-T (QNAP QSW-804-4C), $110 per port

- £504 / $553 for an 8-port 10GBase-T (Netgear XS708E), $70 per port

- £1182 / $1300 for a 12-port 10GBase-T (TP-Link T1700X-16TS), $108 per port

- £1230 / $1350 for a 16-port 10GBase-T (Netgear XS716E), $84 per port

The offerings are still pretty abysmal for anyone looking for a ‘quick fix’ to enable 10GBase-T in the home.
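For anyone who wants to redo the per-port arithmetic from the list above, here is a minimal sketch. The prices and port counts are copied from the list; the function name `cost_per_port` and the $50 cut-off variable are my own labels for illustration, with the $50 figure taken from the poll discussion earlier.

```python
# USD prices and 10GBase-T port counts copied from the list above.
switches = [
    ("Netgear GS110EMX", 200, 2),
    ("QNAP QSW-804-4C", 435, 4),
    ("Netgear XS708E", 553, 8),
    ("TP-Link T1700X-16TS", 1300, 12),
    ("Netgear XS716E", 1350, 16),
]

# Hypothetical cut-off for illustration: the $50/port level from the poll.
TARGET_PER_PORT = 50


def cost_per_port(price_usd, ports):
    """Return the price divided by the number of 10GBase-T ports."""
    return price_usd / ports


for name, price, ports in switches:
    per_port = cost_per_port(price, ports)
    verdict = "under" if per_port < TARGET_PER_PORT else "over"
    print(f"{name:22s} ${per_port:7.2f} per port ({verdict} ${TARGET_PER_PORT})")
```

The list in the article rounds these figures, so the printed values will differ slightly from the ones quoted, but none of the five gets under the $50 line.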

It Was A Misprice or Something

So this week, when a family member asked me what I wanted for my birthday, I idly flicked through some switch listings. Thinking I might just splurge for a 2-port, I was hoping that an 8-port had come down in price. What I found, without too much trouble, was the Netgear XS724EM, a 24-port 10GBase-T switch. My search hadn’t been for that many ports – I assumed it would automatically be too expensive.

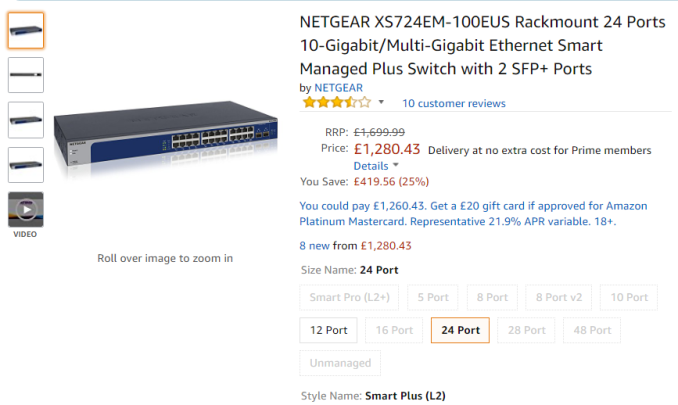

The XS724EM had an RRP of £1700. The price in front of me was £782. After a quick rant on Twitter, it was a no-brainer (ed: I still think you're insane). At £782 / $858, this was a 55% discount, and comes in at just under $36 per port. I expected that at some point the cost of the switches would come down, although I didn’t anticipate the first one to do so would be a super-large one. Not only that, but it supports both 5G and 2.5G as well, so it's still beneficial with existing Cat5e runs.

If you go to the page today, you will see that this might have been a misprice.

The unit is currently up for £1280, almost £500 more than what I paid for it. Bargain. Prime delivery too.

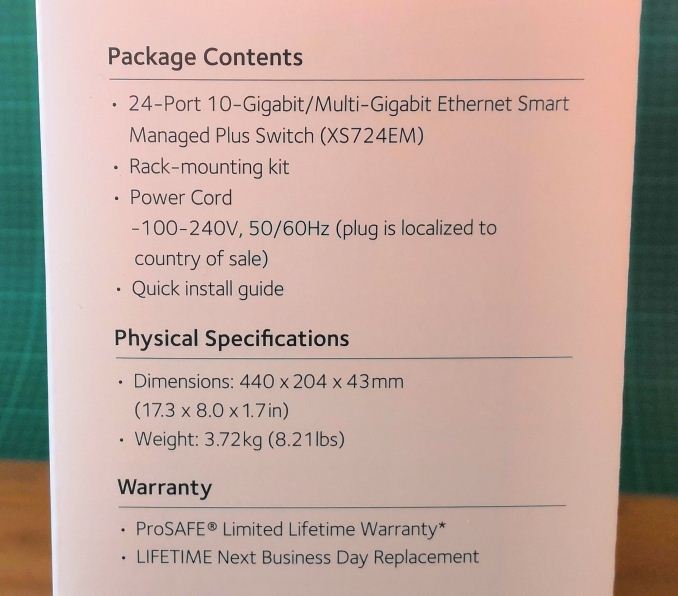

Unboxing the XS724EM

After showing the box to the resident feline population, it was time to see what we had. On the side it gives a lot of pertinent information. This unit weighs 3.72kg / 8.21 lbs, which will be a key point for some users.

In the box, the unit is well packaged with foam blocks, although there is little space above and below it should the box be punctured.

Aside from the manual, the box came with two power cords (one UK, one EU), along with rubber feet for users putting the switch on a desk somewhere, and brackets to extend the unit to a standard 19-inch rack. Some of the comments online state that in a rack, using the screws, it actually ends up very rear heavy, putting a lot of torque on the screws if the unit isn’t directly above a server. In this case, it might be good to invest in rails.

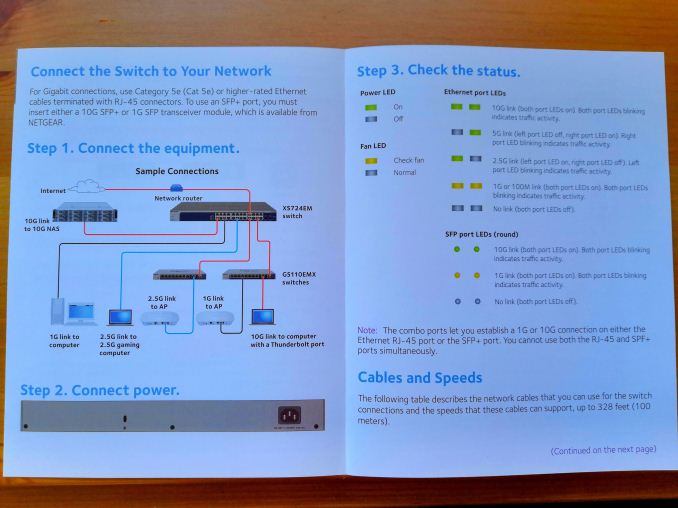

The manual gives examples of how to connect the switch to multiple devices. Interestingly it thinks that gaming laptops with 2.5G connections are somewhat ubiquitous – I think someone should tell Netgear this is not the case.

There is also an app for the smartphone to help with additional management.

The cables for the switch are designed to be put in the front, and we get 24x 1G/2.5G/5G/10GBase-T ports for RJ45 cables. There are also two 10G SFP+ ports on the right, muxed with the final two 10GBase-T ports so only one pair can be used at once.

The lights on a normal gigabit Ethernet port are both orange and flicker with data. In this case, to discern 2.5G, 5G, and 10G, the LEDs go green and will have different patterns based on the connectivity.

There’s a Kensington Lock on the rear for physical security.

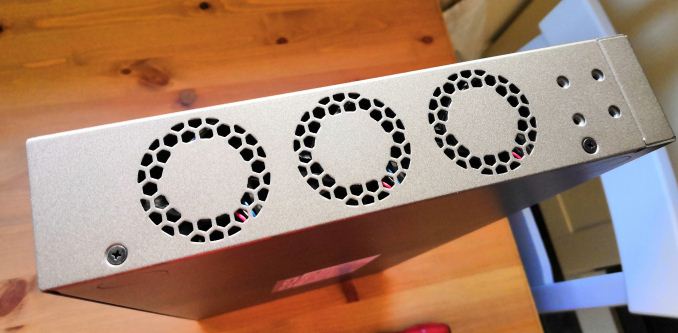

Airflow through the unit is provided by three fans near the outtake, with the intake on the other side.

Opening the chassis takes two screws on either side and three on the rear. It slides off like a standard server chassis, keeping the front panel.

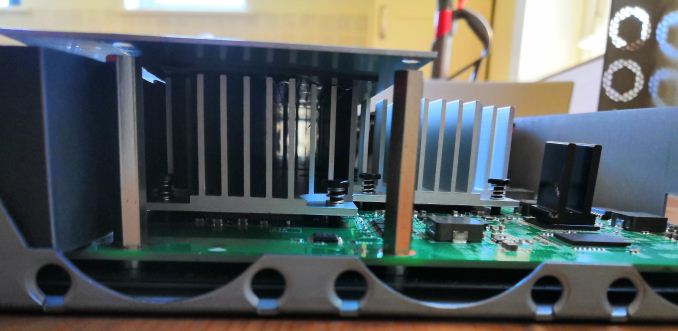

At the rear of the chassis, covered in a shroud, is the built-in power supply. The main PCB has several big heatsinks on it, which we’ll get to in a bit.

The fans in the chassis are Delta AFB0412SHB brushless fans, and these can kick up quite a noise at full blast. Luckily the only time I’ve heard them on full is when turning the unit on.

On the PCB are the controllers covered in aluminium heatsinks. These heatsinks are big and heavy, and there’s even a metal plate on top of the main switching fabric.

I actually tried to take this plate off to see the controllers underneath, but that was a no-go. The heatsinks actually use additional thermal pads to keep the plate attached and to conduct the heat energy through the unit. As I bought this unit personally for my own use, rather than with AnandTech’s money or as a review sample, I wasn’t willing to potentially break things. Sorry.

After fitting it all back together, and putting the rubber feet on, it was time to hook it up to my home network.

This switch is going to sit at the crossroads of my five main test beds, along with a Steam cache server (to enable quicker downloads), a local NAS, and a few other devices. For sure, I’ll be doing some office rearrangement soon to make the most out of the switch.

Using the Switch

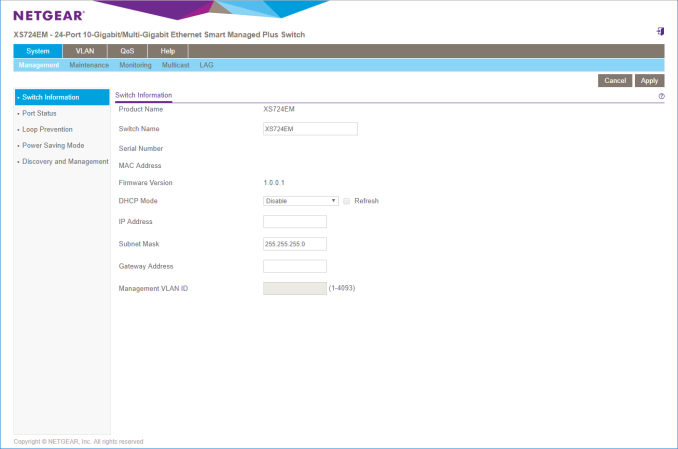

This is a managed switch, which means there is the opportunity to go in and organise all of the settings. However, for users who just want to use it as a switch, it is almost as easy as plug and play. In fact, it was plug and play to begin with – the only thing I did, to keep things perfectly clear, was go into the web interface for the switch and disable DHCP (DHCP is handled by my router).

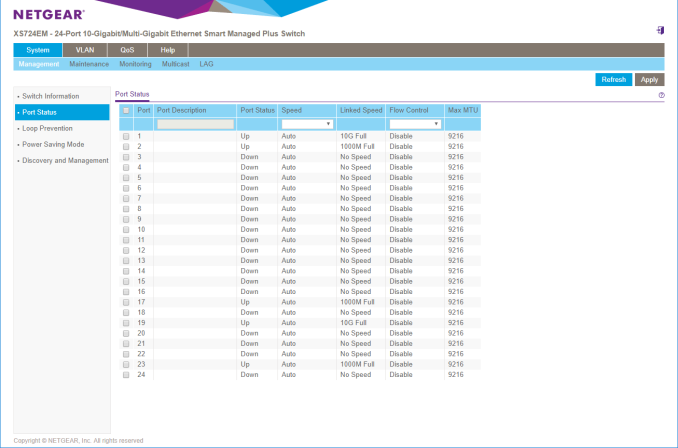

Logging in was straightforward (IP and password are on the bottom of the switch, and the password default is password), and the management control seems suitable for what it was designed for. Users can tell which ports are connected at what speeds, and also limit connectivity per port, and set up VLANs. In my case I’m not going to be using much of any of this, but the VLAN and QoS options are going to be key for office users.

Performance

As it turns out, testing networking hardware is difficult. If you really want to get a detailed overview of a switch, it requires the best part of 12-16 systems hitting it hard, aggregating the results for latency and bandwidth, and also keeping track of power, temperature, and noise. Unfortunately I have neither the time nor the facilities to do that, but a quick blast of iperf for peak point-to-point speeds is what we have at hand.

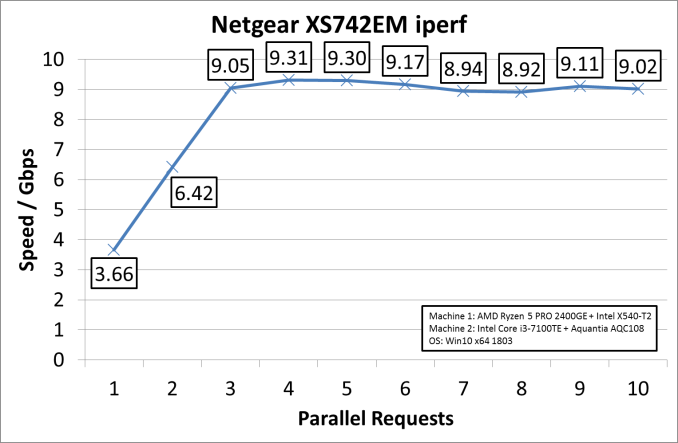

For our testing systems, on one end I have an AMD Ryzen Pro 2400GE (35W) APU system with an Intel X540-T2 PCIe card equipped, and the other end is an X170 motherboard with a Core i3-7100T (35W) and an Aquantia AQC107 PCIe card equipped. Both systems were running Win10 x64 Enterprise 1803. I installed the cards and drivers, made no other settings changes, and ran iperf while varying the number of parallel connections.
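As a rough illustration of what varying the number of parallel connections looks like in practice, the sketch below builds the client command lines for a sweep. It assumes iperf3 specifically (the text above only says iperf), and the server address, stream counts, and run length are placeholders rather than the settings used in this test.

```python
import shlex

# Assumptions, not taken from the article: iperf3 is the tool, and the
# machine at SERVER is already running "iperf3 -s".
SERVER = "192.168.1.50"        # hypothetical address of the receiving system
DURATION_S = 30                # hypothetical length of each run in seconds
STREAM_COUNTS = (1, 2, 4, 8)   # hypothetical sweep of parallel connections


def iperf_command(server, streams, seconds=DURATION_S):
    """Build an iperf3 client command using `streams` parallel connections."""
    return ["iperf3", "-c", server, "-P", str(streams), "-t", str(seconds)]


# Print the commands rather than running them, so the sweep can be reviewed
# (or pasted into a terminal on the sending system) first.
for streams in STREAM_COUNTS:
    print(shlex.join(iperf_command(SERVER, streams)))
```

Each list can be passed to `subprocess.run` unchanged if you would rather launch the runs from the script itself.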

The default settings in iperf and on the two systems showed that we could, in theory, reach transfer rates of around 9.3 Gbps. The cards could also be the limiting factor here – the danger of testing networking is that typically a 10G card is connected to a 10G switch, which is connected to another 10G card; any one of those three parts could be the bottleneck. I did note that iperf very easily used 85% of one thread on each system, so it could be that we need a faster CPU for better performance as well.
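For context on that 9.3 Gbps number, it helps to know that TCP payload can never fill the whole 10 Gbps, because every Ethernet frame carries fixed overhead. The sketch below estimates the ceiling under assumptions that the test setup above does not confirm: a standard 1500-byte MTU, IPv4, and TCP headers without options.

```python
LINE_RATE_GBPS = 10.0

# Assumed, not confirmed by the test setup: standard MTU, IPv4, no TCP options.
MTU = 1500
IP_HEADER = 20
TCP_HEADER = 20

# Per-frame bytes on the wire that carry no IP data:
# preamble + start delimiter (8), Ethernet header (14), FCS (4),
# and the minimum inter-frame gap (12).
ETH_OVERHEAD = 8 + 14 + 4 + 12


def tcp_goodput_gbps(line_rate=LINE_RATE_GBPS, mtu=MTU):
    """Estimate the best-case TCP payload rate for a given line rate and MTU."""
    payload = mtu - IP_HEADER - TCP_HEADER
    wire_bytes = mtu + ETH_OVERHEAD
    return line_rate * payload / wire_bytes


print(f"Best-case TCP payload rate: {tcp_goodput_gbps():.2f} Gbps")
```

Under those assumptions the ceiling works out to roughly 9.5 Gbps, so a result of around 9.3 Gbps is already close to what the wire can carry.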

But a more immediate concern when buying a switch like this is noise. This is a switch designed for the hubbub of a small office, or a server rack – not necessarily a home office where I might be recording audio. However, in my initial use, the only time the fans have come on is when the machine is turned on (like some motherboards turn all fans to full until the startup sequence finishes). After that initial 15 second startup, the fans go to silent. When testing point-to-point peak speeds over several minutes, the unit is still silent. It naturally gets warm to touch, but in my setup it is out of the way on a desk. I’m sure I can find a place for the cats to sit on it and enjoy.

The Final Word

As mentioned, I forked over my own cash for this hardware. At $36 a port, I’m still amazed that the first one that crossed my $50 line was a massive 24-port switch, so now I have overkill for whatever I have planned (ed: it may involve CPUs and motherboards). The key thing here, for me, will be my testing – every new testbed requires 100GB of CPU tests and 800GB+ of gaming tests, so copying these over takes time. On a gigabit network, using my new Steam Cache means that at a speed of 70MB/s a big game like GTA5 can still take 13 minutes. I’m hoping that with 10G, if I can push that transfer speed to SATA limits, the total time will be down to around two minutes. There's also the possibility of doing some network card testing in the future now.
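The transfer-time estimate above is easy to sanity check. The sketch below backs out the game size implied by 13 minutes at 70MB/s and then recomputes the time at a SATA-class rate. The 550MB/s figure is my assumption for a practical SATA SSD speed, not a number from the testing, and decimal units (1GB = 1000MB) are used throughout.

```python
GIGABIT_MB_S = 70   # observed rate over gigabit, from the text above
SATA_MB_S = 550     # assumed practical SATA SSD rate, not a measured figure


def transfer_minutes(size_gb, rate_mb_s):
    """Minutes needed to move size_gb at rate_mb_s (1 GB = 1000 MB)."""
    return size_gb * 1000 / rate_mb_s / 60


# Size implied by "13 minutes at 70MB/s".
game_gb = GIGABIT_MB_S * 13 * 60 / 1000

print(f"Implied game size: {game_gb:.1f} GB")
print(f"Game at SATA-class speed: {transfer_minutes(game_gb, SATA_MB_S):.1f} min")
print(f"800GB at gigabit speed:   {transfer_minutes(800, GIGABIT_MB_S):.0f} min")
print(f"800GB at SATA-class speed: {transfer_minutes(800, SATA_MB_S):.0f} min")
```

With those inputs the single game drops to well under two minutes, which lines up with the hoped-for figure, and the full 800GB gaming set goes from over three hours to under half an hour.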

Related Reading

- Aquantia’s Gamer Edition AQtion AQN-107 10 GbE NIC Available

- Aquantia Multi-Gig: Single Chip USB 3.0 to 5G/2.5G Dongles Coming Soon

- Dell Now Offers Aquantia AQtion AQN-108-Based 5 GbE Cards with Select PCs

- Aquantia Launches New 2.5G/5G Multi-Gigabit Network Controllers for PCs

- AKiTiO Displays Thunderbolt 3 to 10GBase-T Adapter

- Consumer 10GBase-T Options: Motherboards with 10G Built-In

- Netgear Introduces Second-Gen ProSAFE 10GBase-T Switches for SMBs

- Netgear ReadyNAS 716 Review: 10GBase-T in a Desktop NAS


51 Comments


thewishy - Monday, October 1, 2018 - link

Exactly the same setup as I have for home. Fileserver, ESXi boxes and my desktop are all hooked up to 10 gig because I chuck a lot of files around. Don't need to waste a 10g ports on a CCTV camera

JoeyJoJo123 - Monday, October 1, 2018 - link

>it doesn't help when that old Cat5e or cat6 cable can't run 10Gb because it either isn't up to spec or wasn't installed to spec for 10Gb.

Please stop with this nonsense. Cat5e, Cat6 (not an official cabling standard, by the way), Cat6a, Cat7 are all twisted pair copper cables with the same port shape and number of connectors with the same order to the pinout. The difference is shielding and effective SNR.

Can you connect 10Gig switch with existing "Cat5e" cable runs? Sure can do boss. Some of the runs may be noisier/longer than others and may not transfer at the best rate, but it'll connect up just fine. There are even products that can effectively measure the transfer bandwidth that you can get out of an existing cable run. So you may not even need to rip out every single run and replace it for new cabling. If you're not happy with the existing bandwidth out of your current cabling solution you can replace the individual cable runs to cabling that's at a higher spec.

https://www.amazon.com/dp/B00Q6Y0LIA

Every time people talk about "Waaahhh the Cat5e cables won't work" I die a little bit inside. It's not like it's some completely different cable, so you might as well be coming off like a Monster Cables salesman telling us all about the wonders of your new diamond-plated "True 4K 60hz High Speed Ethernet over HDMI with Audio Return Channel" cables.

BGADK - Wednesday, October 3, 2018 - link

I have several clients running 10GBase-T on Cat5e cables on full speed. No problem, and no errors in the logs. The cable runs are nowhere the maximum 100 meters, but more like 25-35 meters, so that is a factor.

Before we start, I allways tell the client, that there is no guarantee that their Cat5e installation is going to work, but there is no reason to rip out the old cables and replace them with new cat6a cables, before we test if 10GBit is going to work with their existing cat5e installation.

And until now, that has allways been the case. Cat5e do really work with 10GBase-T, even if it is not a sure thing.

cygnus1 - Saturday, September 29, 2018 - link

I went the SFP+ route for my home lab as well, but I went with the new mikrotik 16 10g port switch (CRS317-1G-16S+) that was only about $350. it's worked great so far. it's not technically fanless, but the fans never spin so I never hear it. With 16 ports, it let me do dual 10G to my handful of lab servers, plus 1 to my main desktop. it's been awesome

dgingeri - Saturday, September 29, 2018 - link

While it is pretty cool that there's a 16 port 10G SFP+ switch, Microtik's hardware warranty is only 1 month, so I wouldn't be comfortable buying one.

On the other hand, I found Trendnet has released a switch with the same port config as my Dlink DGS-1510-28X for $100 cheaper. I'm not as comfortable with Trendnet's reliability or warranty as I am Dlink, but it is a nice option.

daviderickson - Friday, September 28, 2018 - link

If you care at all about latency, don't go for copper at 10G. We did measurements here and copper was 10x higher than a direct attach cable.

Death666Angel - Friday, September 28, 2018 - link

As someone not "in the know", what is "direct attach cable". And why would that be different to 10Gbe R45 ethernet? Some links and further info would be appreciated.

praeses - Friday, September 28, 2018 - link

Direct Attach Cable or DAC is used for 10G+, it has an integrated interface that goes in an SFP+ slot on either end of the cable and requires less circuitry on either end to convert it to a longer range signal/protocol. This dramatically reduces power/latency per port and can often be cheaper for short range (15m, maybe longer now). Generally from the following perspectives (greater is not always better):

Power consumption:

Copper (RJ45) > Fiber > DAC

Range:

Fiber > Copper (RJ45) > DAC

Cost: (recently, this has been changing)

Fiber > Copper (RJ45) > DAC

Latency:

Copper (RJ45) > Fiber > DAC

Inter-compatibility between manufacturers/devices:

Fiber > Copper (RJ45) > DAC

There are best use cases for each especially when budget/existing infrastructure are taken into consideration. Copper will be mostly used in the home because it's easy to work with and cabling is cheap. Otherwise generally you will want to use DAC where you can, then Fiber, then Copper. DAC cable management (sometimes stiffer cables/limited bending radius/limited length options) can be a little more annoying.

sor - Saturday, September 29, 2018 - link

We always called it twinax, usually had SFP+ or QSFP, sometimes a QSFP on one side going out to four SFP+ on the other.

I liked it because for runs less than 10m it was far cheaper than buying optics for either end but performed just as well.

dgingeri - Saturday, September 29, 2018 - link

Well, I can tell you SFP+ cables are now much more expensive than optical, unless you're insistent on using Cisco or HP equipment. Generic SFP+ optic modules can now be found in the $20-25 range, and OM-3 cables are as cheap as $15 for 3m and $25 for 10m. Compared to the $60-100 range for SFP+ cables no matter the length, it's quite cheap these days.