The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

It has been over a year since Intel launched its Skylake-X processors and Basin Falls platform, with a handful of processors from six-core up to eighteen-core. In that time, Intel’s competition has gone through the roof in core count, PCIe lanes, and power consumption. In order to compete, Intel has gone down a different route, with its refresh product stack focusing on frequency, cache updates, and an updated thermal interface. Today we are testing the top processor on that list, the Core i9-9980XE.

Intel’s Newest Processors

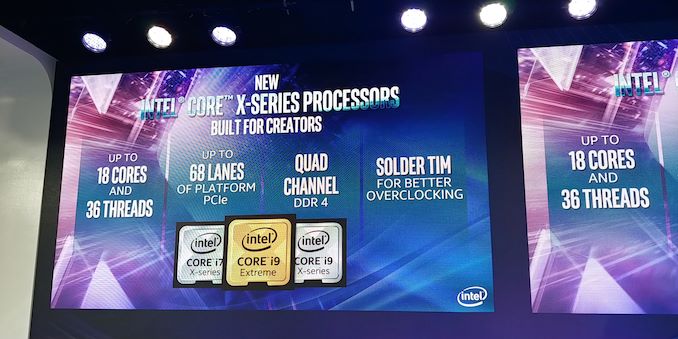

In October, at their Fall Desktop Event, Intel lifted the lid on its new generation of high-end desktop processors. There will be seven new processors in all, with the Core i9-9980XE eighteen-core part at the top, down to the eight-core Core i7-9800X as the cheapest model.

Intel Basin Falls Skylake-X Refresh

| AnandTech | Price | Cores / Threads | TDP | Freq (GHz) | L3 (MB) | L3 Per Core (MB) | DRAM DDR4 | PCIe |
|---|---|---|---|---|---|---|---|---|
| i9-9980XE | $1979 | 18 / 36 | 165 W | 3.0 / 4.5 | 24.75 | 1.375 | 2666 | 44 |
| i9-9960X | $1684 | 16 / 32 | 165 W | 3.1 / 4.5 | 22.00 | 1.375 | 2666 | 44 |
| i9-9940X | $1387 | 14 / 28 | 165 W | 3.3 / 4.5 | 19.25 | 1.375 | 2666 | 44 |
| i9-9920X | $1189 | 12 / 24 | 165 W | 3.5 / 4.5 | 19.25 | 1.604 | 2666 | 44 |
| i9-9900X | $989 | 10 / 20 | 165 W | 3.5 / 4.5 | 19.25 | 1.925 | 2666 | 44 |
| i9-9820X | $889 | 10 / 20 | 165 W | 3.3 / 4.2 | 16.50 | 1.650 | 2666 | 44 |
| i7-9800X | $589 | 8 / 16 | 165 W | 3.8 / 4.5 | 16.50 | 2.063 | 2666 | 44 |

The key highlights of these new processors are the increased frequency of most of the parts compared to the models they replace, the increased L3 cache on most of the parts, and the fact that all of Intel’s high-end desktop processors now have 44 PCIe lanes from the CPU out of the box (not including chipset lanes).

When doing a direct comparison to the previous generation of Intel’s high-end desktop processors, the key highlights are in the frequency. There is no six-core processor any more, given that the mainstream processor line goes up to eight cores. Anything with twelve cores or below gets extra L3 cache and 44 PCIe lanes, but also rises up to a sustained TDP of 165W.

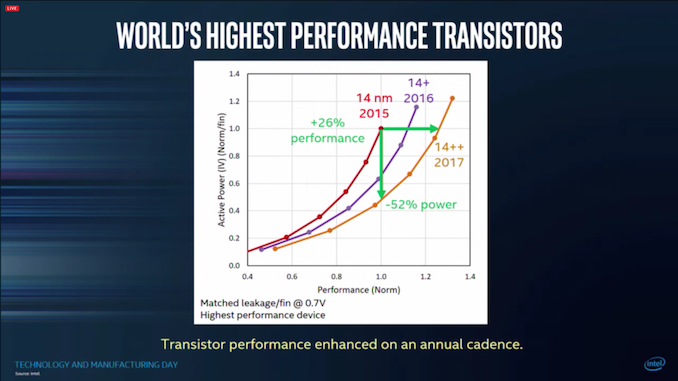

All of the new processors will be manufactured on Intel’s 14++ node, which allows for the higher frequency, and will use a soldered thermal material between the processor and the heatspreader to help manage temperatures.

New HCC Wrapping

Intel’s high-end desktop cadence since 2010 has been relatively straightforward: firstly a new microarchitecture with a new socket on a new platform, followed by an update using the same microarchitecture and socket but on a new process node. The Skylake-X Refresh (or Basin Falls Refresh, named after the chipset family) series of processors breaks that mold.

The new processors instead take advantage of Intel’s middle-sized silicon configuration to exploit additional L3 cache. I’ll break this down into what it actually means:

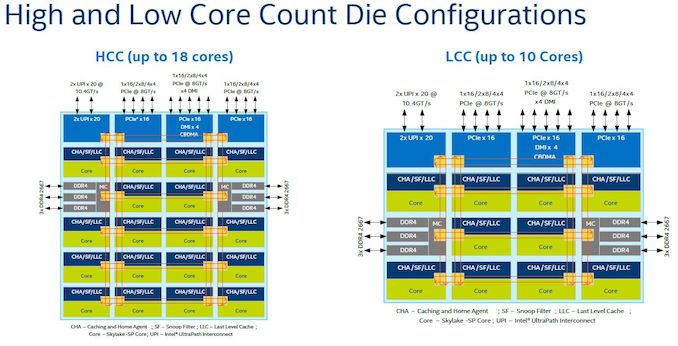

Intel historically creates three different sizes of enterprise CPUs: a low core count (LCC) design, a high core count (HCC) design, and an extreme core count (XCC) design. For the Skylake-SP family, the latest enterprise family, the LCC design offers up to 10 cores and 13.75 MB of L3 cache, the HCC design is up to 18 cores and 24.75 MB of L3 cache, and the XCC design is up to 28 cores with 38.5 MB of L3 cache. Intel then disables cores to fit the configurations its customers need. However, an 8-core processor in this model could be a 10-core LCC cut down by two cores, or an 18-core HCC cut down by 10 cores. Some of those cut cores can have their L3 cache still enabled, creating more differentiation.

For the high-end desktop, Intel usually uses the LCC core count design exclusively. For Skylake-X, this changed, and Intel started to offer HCC designs up to 18 cores in its desktop portfolio. The split was very obvious – anything 10 core or under was LCC, and 12-18 core was HCC. The options were very strict – the 14 core part had 14 cores of L3 cache, 12 core part had 12 cores of L3 cache, and so on. Intel also split the processors into some that had 28 PCIe lanes and some with 44 PCIe lanes.

For the new refresh parts, Intel has decided that there are no LCC variants any more. Every new processor is a HCC variant, cut down from the 18-core HCC die. If Intel didn’t tell us this outright, it would be easily spotted by the L3 cache counts: the lowest new chip is the Core i7-9800X, an eight-core processor with 16.5 MB of L3 cache, which would be more than the LCC silicon could offer.
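The cache arithmetic behind that observation can be checked with a short sketch. It assumes that each core tile carries one 1.375 MB L3 slice, which follows from the die figures quoted earlier (13.75 MB over 10 cores, 24.75 MB over 18 cores). The function and variable names are illustrative only and are not anything Intel publishes.

```python
# Sketch: infer how many L3 slices are enabled and which die a part must come from.
# Assumption: every core tile carries one 1.375 MB L3 slice (13.75 MB / 10 cores).
L3_SLICE_MB = 1.375
DIES = {"LCC": 10, "HCC": 18, "XCC": 28}  # maximum core tiles (and L3 slices) per die


def l3_slices(l3_mb: float) -> int:
    """Number of enabled L3 slices implied by the advertised cache size."""
    return round(l3_mb / L3_SLICE_MB)


def smallest_die(cores: int, l3_mb: float) -> str:
    """Smallest die with enough tiles for both the active cores and the cache."""
    needed = max(cores, l3_slices(l3_mb))
    for name, tiles in DIES.items():  # insertion order: LCC, HCC, XCC
        if needed <= tiles:
            return name
    raise ValueError("configuration exceeds the largest die")


# (cores, L3 in MB) taken from the refresh table
parts = {"i9-9980XE": (18, 24.75), "i9-9900X": (10, 19.25), "i7-9800X": (8, 16.50)}
for name, (cores, l3) in parts.items():
    print(name, l3_slices(l3), "slices ->", smallest_die(cores, l3))
```

Under that assumption the i7-9800X works out to twelve L3 slices on eight active cores, two more slices than the LCC die has, so it can only be cut-down HCC silicon.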

Using HCC across all of the new processors is a double-edged sword. On the plus side, some CPUs have more cache, and everyone has 44 PCIe lanes. On the downside, the TDP has increased to 165W for some of those parts, and a lot of silicon is perhaps being ‘wasted’. The HCC silicon is significantly larger than the LCC silicon, and Intel gets fewer working processors per wafer it manufactures. This ultimately means that if these processors were in high demand, its ability to manufacture more could be lower. On the flip side, having one silicon design for the whole processor range, but with bits disabled, might make stock management easier.

Because the new parts are using Skylake-X cores, they come with AVX512 support as well as Intel's mesh interconnect. As with the original Skylake-X parts, Intel is not defining the base frequency of the mesh, but suggesting a recommended range for the mesh frequency. This means that we are likely to see motherboard vendors vary in their mesh frequency implementation: some will run at the peak turbo mesh frequency at all times, some will follow the mesh frequency with the core frequency, and others will run the mesh at the all-core turbo frequency.

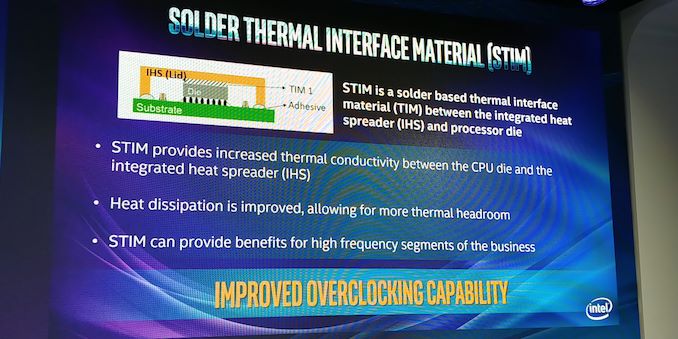

Soldered Thermal Interface Material (sTIM)

One of the key messages out of Intel’s Fall Desktop Launch event is a return to higher performance thermal interface materials. As we’ve covered several times in the past, Intel has been reverting back to using a base thermal grease in its processor designs. This thermal grease typically has better longevity through thermal cycling (although we’re comparing years of use to years of use), is cheaper, but performs worse for thermal management. The consumer line from Intel has been using thermal grease since Ivy Bridge, while the high-end desktop processors were subjected to grease for Skylake-X.

Thermal Interface

| Intel | Socket | Celeron | Pentium | Core i3 | Core i5 | Core i7 / Core i9 | HEDT |
|---|---|---|---|---|---|---|---|
| Sandy Bridge | LGA1155 | Paste | Paste | Paste | Bonded | Bonded | Bonded |
| Ivy Bridge | LGA1155 | Paste | Paste | Paste | Paste | Paste | Bonded |
| Haswell / DK | LGA1150 | Paste | Paste | Paste | Paste | Paste | Bonded |
| Broadwell | LGA1150 | Paste | Paste | Paste | Paste | Paste | Bonded |
| Skylake | LGA1151 | Paste | Paste | Paste | Paste | Paste | Paste |
| Kaby Lake | LGA1151 | Paste | Paste | Paste | Paste | Paste | - |
| Coffee Lake | 1151 v2 | Paste | Paste | Paste | Paste | Paste | - |
| CFL-R | 1151 v2 | ? | ? | ? | K = Bonded | K = Bonded | - |

| AMD | Socket | Interface | AMD | Socket | Interface |
|---|---|---|---|---|---|
| Zambezi | AM3+ | Bonded | Carrizo | AM4 | Bonded |
| Vishera | AM3+ | Bonded | Bristol R | AM4 | Bonded |
| Llano | FM1 | Paste | Summit R | AM4 | Bonded |
| Trinity | FM2 | Paste | Raven R | AM4 | Paste |
| Richland | FM2 | Paste | Pinnacle | AM4 | Bonded |
| Kaveri | FM2+ | Paste / Bonded* | TR | TR4 | Bonded |
| Carrizo | FM2+ | Paste | TR2 | TR4 | Bonded |
| Kabini | AM1 | Paste | | | |

*Some Kaveri Refresh were bonded

With the update to 9th Generation parts, both consumer overclockable processors and all of the high-end desktop processors, Intel is moving back to a soldered interface. The use of a liquid-metal bonding agent between the processor and the heatspreader should help improve thermal efficiency and the ability to extract thermal energy away from the processor quicker when sufficient coolers are applied. It should also remove the need for some extreme enthusiasts to ‘delid’ the processor to put their own liquid metal interface between the two.

The key thing here from the Intel event is that in the company’s own words, it recognizes that a soldered interface provides better thermal performance and ‘can provide benefits for high frequency segments of the business’. This is where enthusiasts rejoice. For professional or commercial users who are looking for stability, this upgrade will help the processors run cooler for a given thermal solution.

How Did Intel Gain 15% Efficiency?

Looking at the base frequency ratings, the Core i9-9980XE is set to run at 3.0 GHz for a sustained TDP of 165W. Compared to the previous generation, which is only at 2.6 GHz, this would equate to a 15% increase in efficiency in real terms. These new processors have no microarchitectural changes over the previous generation, so the answers lie in two main areas: binning and process optimization.
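As a quick check on that figure, here is a minimal sketch of the arithmetic. It treats the 165W TDP rating as identical for both parts, which is a simplification, since a TDP rating is not a measured power draw. The function name is illustrative.

```python
# Sketch: base-frequency uplift at an unchanged 165 W TDP rating.
# Assumption: rated TDP stands in for power, so frequency gain equals efficiency gain.
def uplift_percent(new_ghz: float, old_ghz: float) -> float:
    """Percentage increase of new_ghz over old_ghz."""
    return (new_ghz / old_ghz - 1.0) * 100.0


print(f"{uplift_percent(3.0, 2.6):.1f}%")  # prints 15.4%
```

The result rounds to the 15% quoted above.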

The binning argument is easy – if Intel tightened the screws on its best bin, then we would see a really nice product. A company as large as Intel has to balance how often they get a processor to fall into a bin with demand – there’s no point advertising a magical 28-core 5 GHz CPU at a low TDP if only one in a million hits that value.

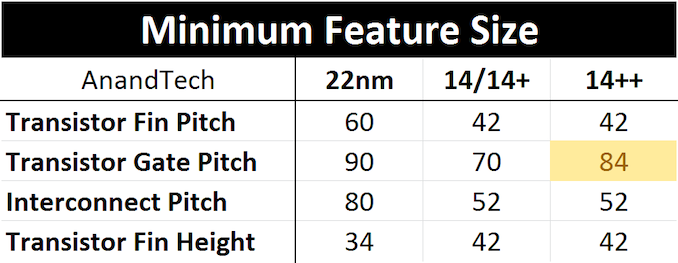

Process optimization is going to be a likely cause in that case. Intel is now manufacturing these parts on its 14++ manufacturing node, which is part of Intel’s ‘14nm class family’, and is a slightly relaxed version of 14+ with a larger transistor gate pitch to allow for a higher frequency.

As with all adjustments to semiconductor processes, if you improve one parameter then several others change as well. The increase in frequency typically means higher power consumption and energy output, which can be assisted with the thermal interface. To use 14++ over 14+, Intel might have also had to use new masks, which might allow for some minor adjustments to also improve power consumption/thermal efficiency. One of the key features right now in the CPU world is the ability for the chip to track voltage per-core more efficiently to decrease overall power consumption; however, Intel doesn’t usually spill the beans on features like this unless it has a point to prove.

Exactly how much performance Intel has gained with its new processor stack will come through in our testing.

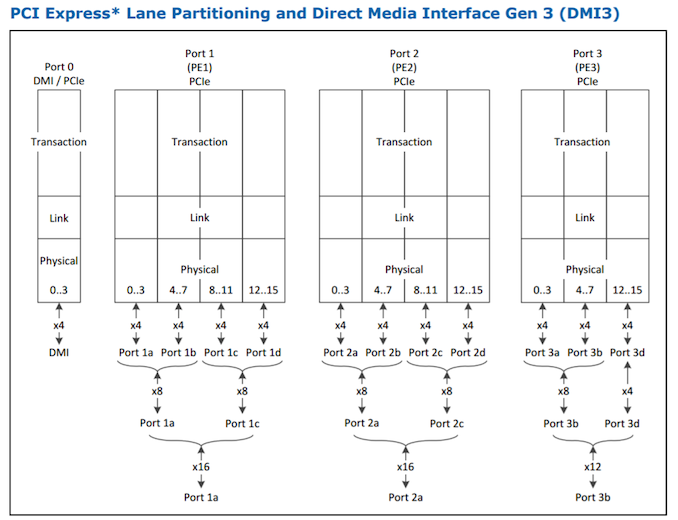

More PCIe 3.0 Please: You Get 44 Lanes, Everyone Gets 44 Lanes

The high-end desktop space has started becoming a PCIe lane competition. The more lanes that are available direct from the processor, the more accelerators, high-performance networking, and high-performance storage options can be applied on the same motherboard. Rather than offering slightly cheaper, lower core count models with only 28 lanes (and making motherboard layout a complete pain), Intel has decided that each of its new CPUs will offer 44 PCIe 3.0 lanes. This makes motherboard layouts much easier to understand, and allows plenty of high-speed storage through the CPU even for the cheaper parts.

On top of this, Intel likes to promote that its high-end desktop chipset also has 24 PCIe 3.0 lanes available. This is a bit of a fudge, given that these lanes are bottlenecked by a PCIe 3.0 x4 link back to the CPU, and that some of these lanes will be taken up by USB ports or networking, but much like any connectivity hub, the idea is that the connections through the chipset are not ‘always hammered’ connections. What sticks in my craw though is that Intel likes to add up the 44 + 24 lanes to say that there are ‘68 Platform PCIe 3.0 Lanes’, which implies they are all equal. That’s a hard no from me.
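To put rough numbers on why the two sets of lanes are not equal, the sketch below compares raw link bandwidth. It uses the PCIe 3.0 signalling rate of 8 GT/s per lane with 128b/130b encoding and ignores packet and protocol overhead, so real throughput will be lower. The function names are illustrative.

```python
# Sketch: raw one-direction PCIe 3.0 bandwidth, CPU lanes versus chipset lanes.
# PCIe 3.0 signals at 8 GT/s per lane with 128b/130b line encoding.
# Assumption: protocol overhead (packet headers, flow control) is ignored.
GT_PER_S = 8.0
ENCODING = 128 / 130


def lane_gbytes_per_s() -> float:
    """Approximate one-direction bandwidth of a single PCIe 3.0 lane in GB/s."""
    return GT_PER_S * ENCODING / 8  # bits to bytes


def link_gbytes_per_s(lanes: int) -> float:
    """Approximate one-direction bandwidth of a link with the given lane count."""
    return lanes * lane_gbytes_per_s()


cpu = link_gbytes_per_s(44)              # lanes wired directly to the CPU
chipset_nominal = link_gbytes_per_s(24)  # lanes hanging off the chipset
chipset_uplink = link_gbytes_per_s(4)    # x4 link from the chipset back to the CPU

print(f"CPU lanes:               {cpu:.1f} GB/s")
print(f"Chipset lanes (nominal): {chipset_nominal:.1f} GB/s")
print(f"Chipset uplink:          {chipset_uplink:.1f} GB/s")
print(f"Oversubscription:        {24 // 4}:1")
```

The 24 chipset lanes share an uplink worth four lanes, so they are oversubscribed six to one, while the 44 CPU lanes each get their full share.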

Intel faces competition here on PCIe lanes, as AMD’s high-end desktop Threadripper 2 platform offers 60 PCIe lanes on all of its parts. AMD also recently announced that its next generation 7nm enterprise processors will be using PCIe 4.0, so we can expect AMD’s HEDT platform to also get PCIe 4.0 at some point in the future. Intel will need to up its game here to remain competitive for sure.

Motherboard Options

The new high-end desktop processors are built to fit the LGA2066 socket and use X299 chipsets, and so any X299 motherboard on the market with a BIOS update should be able to accept these new parts, including the Core i9-9980XE. When we asked ASRock for a new BIOS, given that the ones listed did not state if the new processors were supported, we were told ‘actually the latest BIOS already does support them’. It sounds like the MB vendors have been ready with the microcode for a couple of months at least, so any user with an updated motherboard could be OK straight away (although we do suggest that you double check and update to the latest anyway).

For users looking at new high-end desktop systems, we have a wealth of motherboard reviews for you to look through:

- The MSI X299 XPower Gaming AC Review: Flagship Fantasy

- The ASRock X299 Extreme4 Motherboard Review: $200 Entry To HEDT

- The MSI X299 Gaming M7 ACK Motherboard Review: Light up the Night

- The ASUS Prime X299-Deluxe Motherboard Review: Onboard OLED and WiGig

- The EVGA X299 Micro Motherboard Review: A Smaller Take on X299

- The EVGA X299 FTW K Motherboard Review: Dual U.2 Ports

- The GIGABYTE X299 Gaming 7 Pro Motherboard, Reviewed

- The ASUS ROG Strix X299-XE Gaming Motherboard Review: Strix Refined

- The ASUS TUF X299 Mark I Motherboard Review: TUF Refined

- The MSI X299 SLI Plus Motherboard Review: $232 with U.2

- The MSI X299 Tomahawk Arctic Motherboard Review: White as Snow

- The ASRock X299 Taichi Motherboard Review

- The ASRock X299 Professional Gaming i9 Motherboard Review

- The MSI X299 Gaming Pro Carbon AC Motherboard Review

We are likely to see some new models come to market as part of the refresh, as we’ve already seen with GIGABYTE’s new X299-UA8 system with dual PLX chips, although most vendors have substantial X299 motherboard lines already.

The Competition

In this review of the Core i9-9980XE, Intel has two classes of competition.

Firstly, itself. The Core i9-7980XE was the previous generation flagship, and will undoubtedly be offered at discount when the i9-9980XE hits the shelves. There’s also the question of whether more cores or higher frequency is best, which will be answered on a per-benchmark basis. We have all the Skylake-X 7000-series HEDT processors tested for just such an answer. We will test the rest of the 9000-series when samples are made available.

| AnandTech | Price | Cores / Threads | TDP | Freq (GHz) | L3 (MB) | L3 Per Core (MB) | DRAM DDR4 | PCIe |
|---|---|---|---|---|---|---|---|---|
| Intel | | | | | | | | |
| i9-9980XE | $1979 | 18 / 36 | 165 W | 3.0 / 4.5 | 24.75 | 1.375 | 2666 | 44 |
| i9-7980XE | $1999 | 18 / 36 | 165 W | 2.6 / 4.4 | 24.75 | 1.375 | 2666 | 44 |
| AMD | | | | | | | | |
| TR 2990WX | $1799 | 32 / 64 | 250 W | 3.0 / 4.2 | 64.00 | 2.000 | 2933 | 60 |
| TR 2970WX | $1299 | 24 / 48 | 250 W | 3.0 / 4.2 | 64.00 | 2.667 | 2933 | 60 |
| TR 2950X | $899 | 16 / 32 | 180 W | 3.5 / 4.4 | 32.00 | 2.000 | 2933 | 60 |

Secondly, AMD. The recent release of the Threadripper 2 set of processors is likely to have been noticed by Intel, offering 32 cores at just under the price of Intel’s 18-core Core i9-9980XE. What we found in our review of the Threadripper 2990WX is that for benchmarks that can take advantage of the bi-modal configuration, Intel cannot compete. However, Intel’s processors cover a wider range of workloads more effectively. It’s a tough sell when we compare items such as the 12-core AMD vs 12-core Intel, where benchmarks come out equal but AMD is half the price with more PCIe lanes. This is going to be a tricky one for Intel to be competitive on all fronts (and vice versa).
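The pricing gap is easier to see as a cost per core. The sketch below uses the list prices and core counts from the comparison table. Intel's prices are per 1k units while AMD's are suggested retail, so this is only a rough guide, and cost per core says nothing about per-core performance. The names are illustrative.

```python
# Sketch: list price per core for the parts in the comparison table.
# Assumption: list prices stand in for street prices; Intel's are per 1k units,
# AMD's are suggested retail, so the comparison is not strictly like-for-like.
parts = {
    "i9-9980XE": (1979, 18),
    "i9-7980XE": (1999, 18),
    "TR 2990WX": (1799, 32),
    "TR 2970WX": (1299, 24),
    "TR 2950X": (899, 16),
}


def price_per_core(price_usd: int, cores: int) -> float:
    """List price divided by physical core count."""
    return price_usd / cores


# Print cheapest-per-core first.
for name, (price, cores) in sorted(parts.items(), key=lambda kv: price_per_core(*kv[1])):
    print(f"{name:10s} ${price_per_core(price, cores):7.2f} per core")
```

On these list prices the Threadripper parts land in the mid-$50s per core, roughly half of what either 18-core Intel part costs per core.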

Availability and Pricing

Interestingly, Intel only offered us our review sample of the i9-9980XE last week. I suspect that this means that the processors, or at least the i9-9980XE, should be available from today. If not, then very soon: Intel has promised by the end of the year, along with the 28-core Xeon W-3175X as well (still no word on that yet).

Pricing is as follows:

Pricing

| Intel* | Price | AMD** |
|---|---|---|
| i9-9980XE | $1979 | |
| | $1799 | TR 2990WX |
| i9-9960X | $1684 | |
| i9-9940X | $1387 | |
| | $1299 | TR 2970WX |
| i9-9920X | $1189 | |
| i9-9900X | $989 | |
| i9-9820X | ~$890 | TR 2950X |
| | $649 | TR 2920X |
| i7-9800X | $589 | |
| i9-9900K | $488 | |
| | $329 | Ryzen 7 2700X |

*Intel pricing is per 1k units. **AMD pricing is suggested retail pricing.

Pages In This Review

- Analysis and Competition

- Test Bed and Setup

- 2018 and 2019 Benchmark Suite: Spectre and Meltdown Hardened

- HEDT Performance: Encoding Tests

- HEDT Performance: Rendering Tests

- HEDT Performance: System Tests

- HEDT Performance: Office Tests

- HEDT Performance: Web and Legacy Tests

- HEDT Performance: SYSMark 2018

- Gaming: World of Tanks enCore

- Gaming: Final Fantasy XV

- Gaming: Shadow of War

- Gaming: Civilization 6

- Gaming: Ashes Classic

- Gaming: Strange Brigade

- Gaming: Grand Theft Auto V

- Gaming: Far Cry 5

- Gaming: Shadow of the Tomb Raider

- Gaming: F1 2018

- Power Consumption

- Conclusions and Final Words

143 Comments


TheJian - Friday, November 16, 2018

I stopped reading when I saw 8k with a 1080. Most tests are just pointless, as it would be more interesting with a 1080ti at least or better 2080ti. That would give the chips more room to run when they can to separate the men from the boys so to speak. Vid tests with handbrake stupid too. Does anyone look at the vid after those tests? It would look like crap. Try SLOWER as a setting and lets find out how the chips fare, and bitrates of ~4500-5000 for 1080p. Something I'd actually watch on a 60in+ tv without going blind.

Release groups for AMZN for example release 5000 bitrate L4.1, 5-9 ref frames, SLOWER. etc. Nfo files reveal stuff like this:

cabac=1 / ref=9 / deblock=1:-3:-3 / analyse=0x3:0x133 / me=umh / subme=11 / psy=1 / psy_rd=1.00:0.00 / mixed_ref=1 / me_range=32 / chroma_me=1 / trellis=2 / 8x8dct=1 / cqm=0 / deadzone=21,11 / fast_pskip=0 / chroma_qp_offset=-2 / threads=6 / lookahead_threads=1 / sliced_threads=0 / nr=0 / decimate=0 / interlaced=0 / bluray_compat=0 / constrained_intra=0 / bframes=8 / b_pyramid=2 / b_adapt=2 / b_bias=0 / direct=3 / weightb=1 / open_gop=0 / weightp=2 / keyint=250 / keyint_min=23 / scenecut=40 / intra_refresh=0 / rc=crf / mbtree=0 / crf=17.0 / qcomp=0.60 / qpmin=0 / qpmax=69 / qpstep=4 / ip_ratio=1.40 / pb_ratio=1.30 / aq=3:0.85

More than I'd do, but the point is, SLOWER will give you far better quality (something I could actually stomach watching), without all the black blocks in dark scenes etc. Current 720p releases from nf or amzn have went to crap (700mb files for h264? ROFL). We are talking untouched direct from NF or AMZN. Meaning that is the quality you are watching as a subscriber that is, which is just one of the reasons we cancelled NF (agenda TV was the largest reason to dump them).

If you're going to test at crap settings nobody would watch, might as well kick in quicksync with quality maxed and get better results as MOST people would do if quality wasn't an issue anyway.

option1=value1:option2=value2:tu=1:ref=9:trellis=3 and L4.1 with encoder preset set to QUALITY.

That's a pretty good string for decent quality with QSV. Seems to me you're choosing to turn off AVX/Quicksync so AMD looks better or something. Why would any USER turn off stuff that speeds things up unless quality (guys like me) is an issue? Same with turning off gpu in blender etc. What is the point of a test that NOBODY would do in real life? Who turns off AVX512 in handbrake if you bought a chip to get it? LOL. That tech is a feature you BUY intel for. IF you turn off all the good stuff, the chip becomes a ripoff. But users don't do that :) Same for NV, if you have the ability to use RTX stuff, why would you NOT when a game supports it? To make AMD cards look better? Pffft. To wait for AMD to catch up? Pffft.

I say this as an AMD stock holder :) Most useless review I've seen in a while. Not wasting my time reading much of it. Moving on to better reviews that actually test how we PLAY/WATCH/WORK in the real world. 8K...ROFLMAO. Ryan has been claiming 1440p was norm since 660ti. Then it was 4k not long after for the last 5yrs when nobody was using that, now it's 8k tests with a GTX 1080...ROFLMAO. No wonder I come here once a month or less pretty much and when I do, I'm usually turned off by the tests. Constantly changing what people do (REAL TESTS) to turning stuff off, down, (vid cards at ref speeds instead of OC OOTB settings etc), etc etc...Let's see if we can set up this test in a way nobody would do at home to strike down advantages of anyone competing with AMD. Blah. I'd rather see where both sides REALLY win in ways we USE these products. Turn everything on if it's in the chip, gpu, test, etc and spend MORE time testing resolutions etc we actually USE in practice. 8k...hahaha. Whatever. 13fps?

"Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain."

Yeah, I'm out. Dropdown quality is against my religion and useless to me. I'm sure the other tests have issues I'd hate also, no time to waste on junk review tests. Too many other places that don't do this crap. I bought a 1070ti to run MAX settings at 1200p (dell 24in) in everything or throw it to my lower res 22in. If I can't do that, I'll wait for my next card to play game X. Not knocking AMD here, just Anandtech. I'll likely buy a 7nm AMD cpu when they hit, and they have a shot at a 7nm gpu for me too. You guys and tomshardware (heh, you joined) have really went downhill with irrational testing setups. If you're going to do 4k at ultra, why not do them all there? I digress...

spikespiegal - Saturday, November 24, 2018

Just curious, but how many of you AMD fanbois have ever been in a data center or been responsible for adjusting performance on a couple dozen VMware hosts running mixed applications? Oh wait...none. In the mythical world according to AMDs BS dept a Hypervisor / Operating system takes the number of tasks running and divides them by the number of cores running, and you clowns believe it. In the *real world* where we have to deal with really expensive hosts that don't have LED fans in them and run applications adults use we know that's not the truth. Hypervisors and Operating systems schedulers all favor cores that process mixed threads faster, and if you want to argue that please consult with a VMware or Hyper-V engineer the next time you see them in your drive thru. Oh wait...I am a VMware engineer. An i3 8530 costs $200 and literally beats any AMD chip made running stock in dual threaded applications. Seriously....look up the single threaded performance. More cores don't make an application more multithreaded and they don't contribute to a better desktop experience. I have servers with 30-40% of my CPU resources not being used, and just assigning more cores won't make applications faster. It just ties up my scheduler doing nothing and wastes performance. The only way to get better application efficiency is vertical, and that's higher core performance, and that's nothing I'm seeing AMD bringing to the table.

Michael011 - Wednesday, December 12, 2018

The pricing shows just how greedy Intel has become. It is better to spend your money on a top end AMD Threadripper and motherboard. https://mobdro.io/