Apple Announces 5nm A14 SoC - Meagre Upgrades, Or Just Less Power Hungry?

by Andrei Frumusanu on September 15, 2020 4:30 PM EST

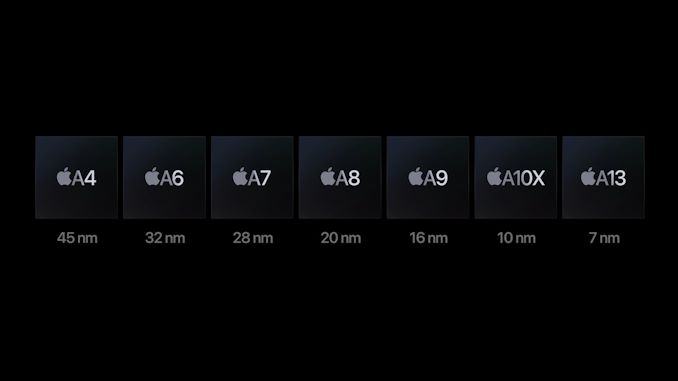

Amongst the new iPad and Watch devices released today, Apple made news in releasing the new A14 SoC. Apple’s newest generation silicon design is noteworthy in that it is the industry’s first commercial chip to be manufactured on a 5nm process node, making this the first of a new generation of designs that are expected to significantly push the envelope in the semiconductor space.

Apple’s event disclosures this year were a bit confusing as the company was comparing the new A14 metrics against the A12, given that’s what the previous generation iPad Air had been using until now – we’ll need to add some proper context behind the figures to extrapolate what this means.

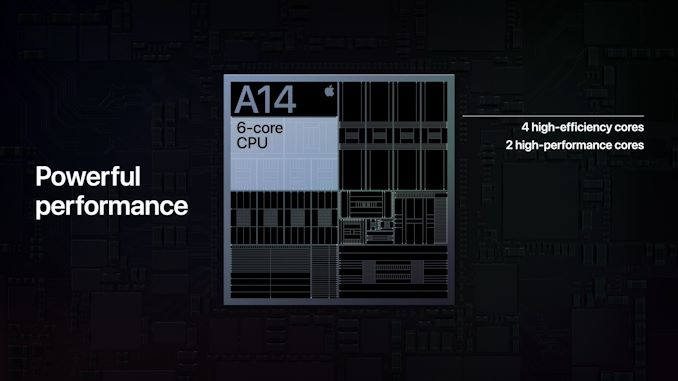

On the CPU side of things, Apple is using new generation large performance cores as well as new small power efficient cores, but remains in a 2+4 configuration. Apple here claims a 40% performance boost on the part of the CPUs, although the company doesn’t specify exactly what this metric refers to – is it single-threaded performance? Is it multi-threaded performance? Is it for the large or the small cores?

What we do know though is that it’s in reference to the A12 chipset, and the A13 already had claimed a 20% boost over that generation. Simple arithmetic thus dictates that the A14 would be roughly 16.7% faster than the A13 (1.40 / 1.20 ≈ 1.167) if Apple’s performance metric measurements are consistent between generations.

On the GPU side, we also see a similar calculation as Apple claims a 30% performance boost compared to the A12 generation thanks to the new 4-core GPU in the A14. Normalising this against the A13, which had likewise claimed a 20% GPU boost over the A12, would mean only an 8.3% performance boost (1.30 / 1.20 ≈ 1.083), which is actually quite meagre.
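The normalisation behind both figures can be sketched in a few lines of Python. This is only an illustration, assuming that Apple's claimed uplifts compound multiplicatively over a common A12 baseline and that the A13's GPU claim over the A12 was 20% (which is what the 8.3% figure implies). The function name `relative_uplift` is hypothetical.

```python
def relative_uplift(new_vs_base: float, old_vs_base: float) -> float:
    """Uplift of the newer chip over the older one, given each chip's claimed
    uplift over a shared baseline (fractions, e.g. 0.40 for 40%)."""
    return (1 + new_vs_base) / (1 + old_vs_base) - 1

# Claimed CPU uplifts over the A12, as quoted in the article.
A14_CPU, A13_CPU = 0.40, 0.20
# Assumption: the A13's claimed GPU uplift over the A12 was also 20%.
A14_GPU, A13_GPU = 0.30, 0.20

cpu = relative_uplift(A14_CPU, A13_CPU)
gpu = relative_uplift(A14_GPU, A13_GPU)

print(f"A14 vs A13 CPU: {cpu:.1%}")
print(f"A14 vs A13 GPU: {gpu:.1%}")
```

The result only holds if Apple measured "performance" the same way in both generations, which the company has not confirmed.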

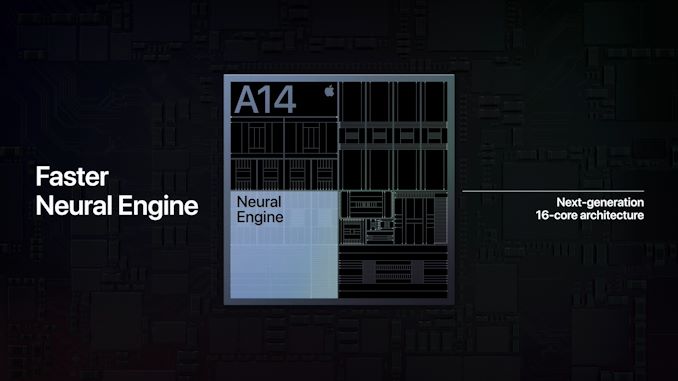

In other areas, Apple is boasting more significant performance jumps such as the new 16-core neural engine which now sports up to 11TOPs inferencing throughput, which is over double the 5TOPs of the A12 and 83% more than the estimated 6TOPs of the A13 neural engine.

Apple does advertise a new image signal processor amongst new features of the SoC, but otherwise the performance metrics (aside from the neural engine) seem rather conservative given the fact that the new chip is boasting 11.8 billion transistors, a roughly 39% generational increase over the A13’s 8.5bn figure.
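For reference, the neural engine and transistor comparisons are plain ratios of the quoted figures. The sketch below recomputes them; the helper name `percent_increase` is hypothetical, and the A13's 6TOPs value is the estimate mentioned above rather than an official number.

```python
def percent_increase(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new / old - 1) * 100

# Neural engine throughput in TOPs (the A13 value is an estimate).
npu_vs_a12 = percent_increase(11, 5)
npu_vs_a13 = percent_increase(11, 6)

# Transistor counts in billions.
transistors_vs_a13 = percent_increase(11.8, 8.5)

print(f"Neural engine, A14 vs A12: {npu_vs_a12:.0f}%")
print(f"Neural engine, A14 vs A13: {npu_vs_a13:.0f}%")
print(f"Transistors,   A14 vs A13: {transistors_vs_a13:.1f}%")
```

Set against the CPU and GPU claims, these ratios show the transistor budget growing far faster than the advertised CPU and GPU performance.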

The one explanation and theory I have is that Apple might have finally pulled back on their excessive peak power draw at the maximum performance states of the CPUs and GPUs, and thus peak performance wouldn’t have seen such a large jump this generation, with the design instead favouring more sustainable thermal figures.

Apple’s A12 and A13 chips were large performance upgrades both on the side of the CPU and GPU, however one criticism I had made of the company’s designs is that they both increased the power draw beyond what was usually sustainable in a mobile thermal envelope. This meant that while the designs had amazing peak performance figures, the chips were unable to sustain them for prolonged periods beyond 2-3 minutes. Keeping that in mind, the devices throttled to performance levels that were still ahead of the competition, leaving Apple in a leadership position in terms of efficiency.

What speaks against such a theory is that Apple made no mention at all of concrete power or power efficiency improvements this generation, which is rather unusual given they’ve traditionally always made a remark on this aspect of the new A-series designs.

We’ll just have to wait and see if this is indicative of the actual products not having improved in this regard, or if it’s just an omission and side-effect of the new more streamlined presentation style of the event.

Whatever the performance and efficiency figures are, what Apple can boast about is having the industry’s first ever 5nm silicon design. The new TSMC-fabricated A14 thus represents the cutting-edge of semiconductor technology today, and Apple made sure to mention this during the presentation.

Related Reading:

- The Apple iPhone 11, 11 Pro & 11 Pro Max Review: Performance, Battery, & Camera Elevated

- The Samsung Galaxy S20+, S20 Ultra Exynos & Snapdragon Review: Megalomania Devices

- TSMC Expects 5nm to be 11% of 2020 Wafer Production (sub 16nm)

- ‘Better Yield on 5nm than 7nm’: TSMC Update on Defect Rates for N5

127 Comments


Duke Brobbey - Wednesday, September 16, 2020 - link

Who is this user?... In fact, these have been my thoughts all these years, starting with the Galaxy S7 with its Exynos, then slowly making its way into mainstream smartphone marketing.

Sometimes I doubt if these mobile chipsets indeed have this desktop-grade hardware as they claim.

Hyper72 - Wednesday, September 16, 2020 - link

Palm detection when using Apple Pencil, Siri app recommendations, photo gallery, camera use it a lot, on device dictation, health features (sleep, hand washing), accessibility/sound detection, Lidar processing. There was an Arc article last month about how it's used almost everywhere in the OS now.

Isaacc7 - Wednesday, September 16, 2020 - link

The neural engine is used in all sorts of categories of programs and uses on Apple devices. The neural engine speeds up and/or is what allows live effects on pictures like the various Portrait Lighting modes, Animojis and other live selfie effects, their nighttime picture mode, all of the AR stuff, as well as image detection, focus tracking, body position and analysis, etc. Pixelmator Photo’s new enhancement feature was shown off during the presentation. There is a good chance that an improved neural engine will allow them to apply live effects to video.

The Core ML API is also used for on device language parsing and dictation. It is even used in low level operations like making the use of the pencil smoother on the iPad. ML is a very important tool for more and more types of application. Having dedicated hardware for processing instead of brute-forcing it on the GPU or CPU makes for both a faster and more efficient system. Apple will also make a big deal about this in the new Macs. A lot of processing intensive manipulations could be moved off the GPU and onto the Neural Engine. It could very well lead to photo and video manipulations being able to be done on a lighter weight and less expensive computer.

ANORTECH - Thursday, September 17, 2020 - link

According to Dan Riccio they are doing tons of projects based on ML so they want to be prepared for everything, but I'm with you in terms of wanting more CPU power now.

cha0z_ - Monday, September 21, 2020 - link

There are use cases, but we can argue whether they should have put all the budget from the bigger die and new process into ML instead of a smaller part there and a bigger part towards the CPU and GPU. The current A13 ML is not showing any issues with the AI tasks that Apple implements, for example, and as for Fitness+, it will be available on a lot older phones lol. Bumping all the budget towards ML only is stupid, because less than 1% are really pushing that one while all 100% benefit from a faster CPU and GPU. Play some Civilization VI, for example, and one can clearly see that the A13 struggles - it's laggy and not smooth at all - so yes, that game, for example, needs a faster SoC, and I can list quite a lot more really.

tkSteveFOX - Thursday, September 17, 2020 - link

Correct. Performance is more than enough. AI and ISP along with better sustained performance is a much better choice. Sadly, we don't get manufacturers to decide this in the Android space as long as only ARM provides the CPU cores.

Sure, manufacturers do the ISP and AI, but they can't fully customise their core design.

Kangal - Sunday, September 20, 2020 - link

My guess is this:

- The Apple A13 sets the benchmark for minimum performance for the coming generation.

- The Apple A14 maintains that performance, but extends silicon budget to AI. These beefy ML coprocessors are used for the new OS X Macs, which are transitioning from x86. On the iPhone front, the decent efficiency gains are spent on the thirsty 5G external chip.

- On the A15 SoC, they'll focus again on efficiency this time by shifting the external 5G modem to an efficient internal modem, and the extra efficiency gains are spent on making the 120Hz display have acceptable battery life. On the OS X side, the efficiency is instead sacrificed to increase performance on the MacBook Air and Pro.

ANORTECH - Thursday, September 17, 2020 - link

Well, next year's iPhone will be huge: new high MP camera sensor (48 or 64), hopefully with an option for 8K recording, 120Hz, maybe notch reduction. We will see what they decide, because the decision has not been made in terms of design yet, and probably more things we don't know yet.

But this year's iPhone won't be bad either if coming from a few years old phone. I want the new case redesign and the bezel reduction in the front panel.

cha0z_ - Monday, September 21, 2020 - link

High MP sensors lead to worse photos overall. There are things like physics, you know... binning smaller pixels leads to more issues than fewer MP and bigger pixels; it has been proven again and again. Check the S20 Ultra/Note 20 Ultra and all their issues because of the "big" sensor with insane MP numbers. Marketing, nothing more. A bigger 16MP sensor will take tons better photos than a 48/64/108MP one. Not that nowadays processing is not 90% of the phone photos (look at the Pixel 4a, and I guarantee you that they could have made it take even better photos with the same camera setup if they wanted to).

cha0z_ - Monday, September 21, 2020 - link

This one, most likely this year, will be just a big camera update. That's nice for someone who takes a lot of pictures and doesn't just post them on FB; for everyone else... totally not worth changing your phone.