Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM ESTMore on Async Shading, the New Benchmark, & the Test

As we’ve previously covered the principles of asynchronous shading in depth, we’re not going to completely reiterate what it does and what it’s for in this preview. However for our non-regular readers, here is a quick high-level overview of async shading.

GPUs are, at their most fundamental levels, a large collection of arithmetic logic units (i.e. CUDA Cores/Stream Processors) combined with various other scheduling and fixed function graphics hardware. Because graphics rendering is an embarrassingly parallel problem, GPUs are able to easily subdivide the work in processing a scene into multiple parts, meaning it is relatively easy to scale up the performance of a GPU by adding more ALUs. At the same time because any given graphics operation is likely being applied to a large number of pixels at once, ALUs are grouped together to execute a single instruction over multiple pieces of data (SIMD), which greatly limits the independence of the ALUs, but in turn also allows them to be packed far more densely.

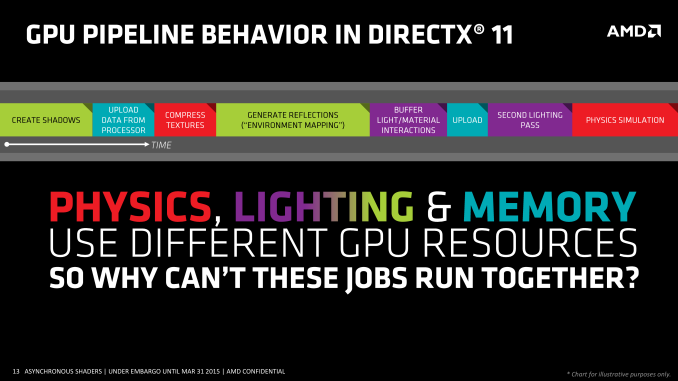

In a traditional (DX11 and earlier) graphics rendering scenario, a GPU will be occupied with one job/task at any given time, time sharing the GPU if necessary in order to let multiple applications use it. By and large this is fine, especially as games are run in a near-exclusive manner and full-screened. However within even a single application the same rules apply: with certain exceptions, the GPU can only handle one task at a time. So if a game wishes to execute multiple tasks, it must execute them in serial, one after another.

Again in a traditional environment all of this is fine, however as GPUs have advanced they have begun to test the limits of a single execution queue. As GPUs add ever more ALUs, even embarrassingly parallel begins to break down, and it is harder to keep a GPU filled the more ALUs there are to fill. Meanwhile new paradigms such as virtual reality have come along, where certain operations such as time warping require executing them with far less latency than the traditional high throughput/high latency execution model of a GPU allows. Thus GPU developers and software developers alike have needed the means to concurrently execute multiple jobs on a GPU’s ALUs, and this is where asynchronous shading comes in.

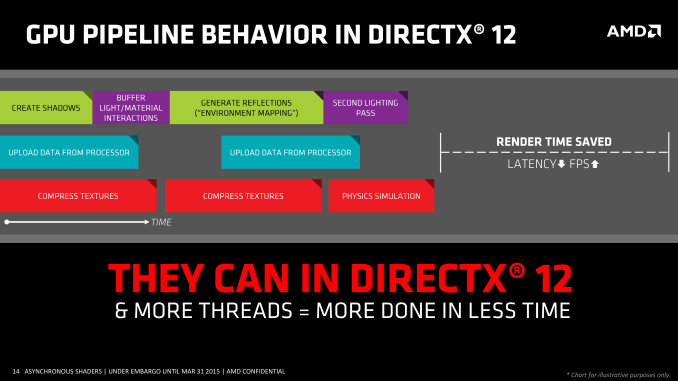

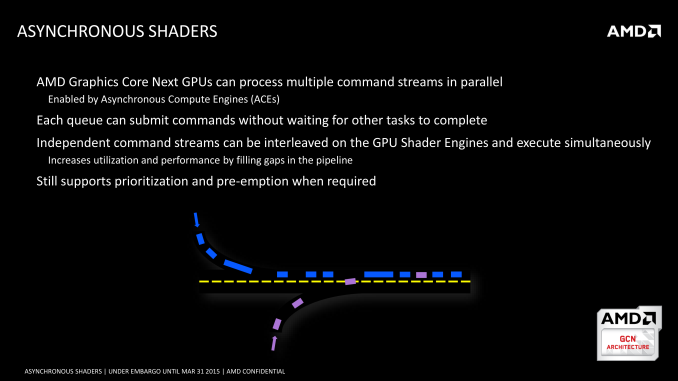

Whereas the traditional model is serial execution, asynchronous shading is executing multiple jobs over the ALUs at the same time. By implementing multiple queues within a GPU’s thread scheduler, a GPU executing jobs in an asynchronous manner can potentially run upwards of several jobs at once; more queues presents more options for work. Doing so can allow a GPU to be better utilized – by filling the underutilized ALUs with additional, related work – and at the same time work queues can be prioritized so that more important queues get finished sooner, if not as soon as outright possible.

Meanwhile on the API side of matters, while this functionality has been implemented into GPUs for a few years now, DirectX 11 and earlier APIs aren’t built for this paradigm and are unable to submit work to multiple queues. As a result this functionality has been going largely unused. But along with modernizing multi-core rendering, DirectX 12 also modernizes work queuing, and for the first time for DirectX gives developers the ability to issue work to multiple queues. There are a bunch of limitations here – in particular, only one queue can access non-ALU graphics hardware – but overall it gives both GPU developers and game developers tools to further improve performance and better implement certain rendering algorithms and technologies.

Like DirectX 12’s other headlining features, async shading is a powerful tool, but it’s one whose potency will depend on the hardware it’s being executed on and what a game is attempting. Async shading itself is a bit of a catch-all term – not unlike calling a CPU/SoC a multi-core CPU – and can mean any number of things depending on the context. Hardware can have a different number of queues, different rules on how resources are shared, different rules on how queues are scheduled, etc. So not all async shading capable hardware is the same, and there will be varying levels of how much work can actually be done concurrently. At the same time from a throughput perspective async shading can only fill ALUs that aren’t already being fully utilized, so the upper limit to its benefits is whatever resources aren't already being used.

All of this is in turn closely tied to the actual application being run, and how much of its shading/compute tasks can actually be executed concurrently. An application that can issue work to multiple queues but doesn’t actually have much work to issue to multiple queues will not benefit as much, whereas an application that can fill up multiple queues may benefit more. Ultimately it’s a technology whose benefit will vary on a case-by-case basis, with Ashes of the Singularity being one possible way to use the technology.

FAQs & More

Jumping back into the real world and the business that surrounds it, even though Ashes is still in beta, as one of the first games to use DX12 async shading, it’s going to be a big deal.

AMD's Radeon Technologies Group for their part has been heavily promoting Ashes for some time now, and for them the release of this latest beta is definitely a major event. From a marketing standpoint RTG has been touting the benefits of low-level APIs and async shading for some time now, and this latest beta of Ashes brings the first potential killer app one step closer. Meanwhile from a technical perspective it’s fair to say that Ashes via DX12 addresses many of RTG’s perceived weakspots over the past few years: driver CPU utilization, multi-GPU performance, and GPU shader/ALU utilization. A successful DX12 game with both DX12 and DX11 rendering paths gives RTG a prime opportunity to show that these problems are resolved under DX12, so RTG is keen to show that off. To that end, we do want to quickly note that while this beta is being handled through Oxide/Stardock, RTG has also sent the press their own thoughts in a new Ashes benchmark guide.

Meanwhile Oxide is distributing their own benchmark guide to the press for this latest beta. At the very end of the guide is a FAQ, which gives a good overview of what new functionality has been implemented in the latest beta, and what the developer’s polices are on IHV relations. Also included is a brief summary of Oxide’s plans to support Vulkan in the future.

I’ve heard you allow source access to vendors? Is this true?

Yes. Oxide and Stardock want our game to run as fast as possible and with as few issues as possible on everyone’s hardware. Thus, we have an open door policy. For security reasons, we can’t dive into details, but we should be clear that this level of source access is almost unprecedented in the game industry. It is not common industry practice to share source code with IHVs.

The basic way it works is that we have branches in our code tree. Unfortunately, we can’t give complete unrestricted access to our entire source tree to everyone for legal reasons (our lawyers would rather us not share source at all, but we overrode them ;)), but we have created a special branch where not only can vendors see our source code, but they can even submit proposed changes. That is, if they want to suggest a change our branch gives them permission to do so. Naturally, any changes will be carefully reviewed by us and we don’t think it’s ever been made more simple. However, we stress that such changes are relatively rare and typically consist of bug fixes.

This branch is synchronized directly from our main branch so it’s usually less than a week from our very latest internal main software development branch. IHVs are free to make their own builds, or test the intermediate drops that we give our QA. Typically, IHVs receive builds about the same time as our own QA department. However, because they can make their own builds, IHVs can end up with builds that are more current then our own QA department.

Obviously, Oxide and Stardock are taking a huge risk in giving such level source access to everyone. We have significant IP in our code base which must actively protect. However, we’re strong believers in being transparent about our development process. Our hope is that sharing this information will make everyone’s products better.

Does Oxide optimize specifically for any hardware?

Oxide primarily optimizes at an algorithmic level, not for any specific hardware. We also take care to avoid the proverbial known “glass jaws” which every hardware has. However, we do not write our code or tune for any specific GPU in mind. We find this is simply too time consuming, and we must run on a wide variety of GPUs. We believe our code is very typical of a reasonably optimized PC game.

How much performance should I gains from a second graphics card in my computer?

This depends on your video cards. We expect around 70% scaling if you use two of the same card. However, mixing cards can vary the results. For example, you will never get more than twice the speed of the slowest video card. You would be better off just using the new card alone. If you are mixing and matching cards, we recommend running the benchmark in single GPU mode first, then matching cards which have similar single GPU scores.

Why do multiple GPUs matter?

Multiple GPU configurations are increasingly common amongst gamers. Moreover, it allows users with a reasonably new video card to greatly improve their performance by buying a second card, even if it is a different brand or model and gain performance. This will begin to matter more as gamers begin to migrate to 4K and higher resolution displays.

Where can I get detailed benchmark results?

In documents\my games\ashes of the singularity you will find a Benchmarks directory. Within it, the detailed log is kept. This log will store not only the aggregate timings, but additional information regarding the timings of every frame of the log.

Where can I change settings?

In documents\my games\ashes of the singularity you will see a settings.ini file. Within that, you can see many different settings to try. This should only be done by very technical, advanced users. Should you place the game in some sort of settings that prevent loading, you may always delete the settings.ini file and the game will regenerate it, setting it to default values.

Is Oxide still supporting Mantle?

Oxide is migrating the effort spent on Mantle to support on the upcoming Vulkan API. We have no solid time-table for Vulkan support at this time, however.

How close to final is the code?

In the era of digital updates, nothing is every really final. However, the code is nearing release form for our release on March 22nd. We expect few changes related to graphics rendering to occur before release.

Does Oxide/Stardock have some sort of business deal with any IHV with regards to Async Compute? Is Oxide promoting this feature because of some kind of marketing deal?

No. We have no marketing or business agreement to pursue or implement this feature. We pursued the multiple command queues also known as async compute because it is a new capability in D3D12 and Windows 10. That is, we implemented it entirely on our own accord and curiosity. Oxide is committed to exploiting as many capabilities of DX12 as possible.

In the previous benchmark, were you using async compute?

We had very basic support of this feature. During the process of development for Multi-GPU, we realized that some of the lessons learned and code written could be applied to async compute. Thus, this benchmark 2 has a much more advanced implementation of this feature.

Do you have any recommended settings?

All of the presets are appropriate for certain class of hardware. Internally, it is our expectation that a user with a high end video card would run at Extreme at 1600p. Though our game will attempt to auto detect settings appropriate for the video card, we tend to be a bit conservative with this detection and let users turn settings up.

Ashes of the Singularity Benchmark 2.0

Along with the new functionality introduced in this week’s beta, this release also contains a modified version of the benchmark distributed with the previous version of Ashes. The new benchmark is still 3 minutes long and many of the camera tracks/unit placements are identical, but this latest version utilizes the models for another of the game’s factions, implements newer graphics effects, and is overall intended to be a more strenuous benchmark (performance optimizations not withstanding).

The Test

And with that out of the way, let’s dive into benchmarking. As we don’t typically benchmark beta games, we want to reiterate that this is a true beta. We’ve already seen the performance of Ashes shift significantly since our last look at the game, and while the game is much closer to competition now, it is not yet final. Further optimizations or driver releases likely will further alter the performance of the game, so nothing here should be considered definitive about how the final game will perform.

| CPU: | Intel Core i7-4960X @ 4.2GHz |

| Motherboard: | ASRock Fatal1ty X79 Professional |

| Power Supply: | Corsair AX1200i |

| Hard Disk: | Samsung SSD 840 EVO (750GB) |

| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |

| Case: | NZXT Phantom 630 Windowed Edition |

| Monitor: | Asus PQ321 |

| Video Cards: | AMD Radeon R9 Fury X ASUS STRIX R9 Fury AMD Radeon R9 285 AMD Radeon HD 7970 NVIDIA GeForce GTX Titan X NVIDIA GeForce GTX 980 Ti EVGA GeForce GTX 960 NVIDIA GeForce GTX 780 Ti NVIDIA GeForce GTX 680 |

| Video Drivers: | NVIDIA Release 361.91 AMD Radeon Software 16.1.1 Hotfix |

| OS: | Windows 10 Pro |

153 Comments

View All Comments

BurntMyBacon - Thursday, February 25, 2016 - link

@anubis44: "nVidia wasn't expecting AMD to force Microsoft's hand and release DX12 so soon."I do believe you are correct. Given the lack of ability to throw driver optimizations at the DX12 code path and nVidia's proficiency at doing it, I'd say this will be quite damaging. They've lost one clear advantage they held (at least in DX11).

@anubis44: "It's beginning to look like nVidia's been check-mated by AMD here."

I wouldn't go that far. They probably won't have the necessary hardware in Pascal, but you can be sure Volta will have what it needs. Besides, most games will likely have a DX11 code path for the foreseeable future as developers wouldn't want to lock themselves out of an entire market. Also, at the moment, nVidia can still play DX12 fine, they just don't appear to have the advantage at the moment given the small sample set of available data points.

In conclusion, it is more like they have lost a rook or queen. Of course, they've taken a few of ATi's pieces as well, so lets just wait and see who plays their remaining pieces better.

rhysiam - Thursday, February 25, 2016 - link

The other thing I would add to this is that it's not like Nvidia have nowhere to go here. Take the GTX 970 vs the R9 390 for example... they're in a similar price & performance tier. Yet the 970 is smaller with fewer transistors (usually meaning it's cheaper to produce) and generally has a much higher overclocking headroom (because Nvidia wasn't under pressure to clock the card closer to the limit to reach relevant performance). So it's reasonable to expect Nvidia could both lower the price and clock it higher to get a significantly better value card with minimal basically no substantive engineering/architectural changes.I'm not suggesting Nvidia will do that with the 970 specifically. Rather, what I'm saying is that if they find Pascal is similarly behind AMD they've got plenty of room to tweak performance and price before we can start calling them "check-mated". But it's certainly good new for us if DX12 performance like this continues and AMD essentially forces Nvidia to lower its margin.

CiccioB - Sunday, February 28, 2016 - link

They can do exactly as AMD has done with GCN: they just can start using 30 or 50% bigger GPUs to close the performance gap if they really need to.The_Countess - Thursday, February 25, 2016 - link

nvidia's entire performance advantage in DX11 is based on game specific driver optimizations. they have a virtual army of developers slaving away on those (and coming up with way to hurt everyone's performance as long as it hurt AMD the most or makes their own latest gen cards look better... but that's a different matter)with DX12 however the drivers becomes MUCH thinner and doesn't have nearly as much influence. so basically nvidia's main competitive advantage is gone with dx12 and vulkan.

as for being relevant: this year pretty much every game where performance matters will have either a DX12 or Vulkan render option. adding in the fact that AMD cards generally age better then nvidia's (those game specific optimizations focus pretty much exclusively only on their latest generation of cards) and i would say that yes it is very relevant.

BurntMyBacon - Thursday, February 25, 2016 - link

@The_Countess: "nvidia's entire performance advantage in DX11 is based on game specific driver optimizations. they have a virtual army of developers slaving away on those ..."True, they have lost a large advantage. Keep in mind, though, that nVidia's developer relations are still in play. What they once achieved through the use of driver optimizations may still be accomplished through code path optimization and design guidance for nVidia architecture. The first beta for Vulkan (The Talos Principle) showed that merely replacing a high level API (OpenGL/DX11) with a low level one (Vulkan/DX12) does not automatically improve the experience. If nVidia can convince developers to avoid certain non-optimal features or program in such a way as to take better advantage of nVidia hardware in their titles (for the sake of performance on the majority of discrete card owners out there of course) then ATi will be in the same position as they are now. Better hardware, worse software support. Then again, low level API cross-platform titles will most assuredly program to take advantage of the console architectures which happens to be ATi's at the moment.

nevcairiel - Wednesday, February 24, 2016 - link

Considering the Fury X just has a tad bit more raw power than a (older) 980Ti, I would say the DX12 numbers are fine, and what is really showing is AMDs lack of performance in DX11?tuxRoller - Wednesday, February 24, 2016 - link

I don't agree with this. I think this is more a case of nvidia not being able to rely so much on the ENORMOUS number of special cases in their driver.IOW, this is about two things: hardware and game design. The drivers are trivial next to d3d11/ogl.

jasonelmore - Wednesday, February 24, 2016 - link

Fury X's Architecture is much newer than Maxwell 2's. Lets see what the true DX12 cards can do this summer.tuxRoller - Wednesday, February 24, 2016 - link

Did you not notice the across the board improvements for all gcn cards?The point I was making, and that others have made for sometime, is that AMD makes really good hardware but this is typically masked by poor drivers.

You can see this by looking at their excellent performance in compute workloads where the code in the driver is more recent and doesn't have the legacy cruft of their d3d/ogl code.

Despoiler - Thursday, February 25, 2016 - link

It's not their drivers. It's purely architectural. GCN moved their schedulers into to hardware. GCN requires the API to be able to feed it enough work. What people have been calling "driver overhead" is nothing of the sort. DX11 is just not capable of fully utilizing AMD hardware. DX12 is and that is why AMD created Mantle. It forced MS to create DX12 and that set off the creation of Vulkan. All of the next gen APIs are tailored to exploit AMDs already being sold hardware.