NVIDIA Unveils “Titan RTX” Video Card: $2500 Turing Tensor Terror Out Later This Month

by Ryan Smith on December 3, 2018 8:00 AM EST

By this point we’ve seen most of NVIDIA’s 2018 Turing GPU product stack. After kicking things off with the Quadro RTX series, NVIDIA released a trio of consumer GeForce RTX cards, and following that the first Turing Tesla, the T4. However, as regular industry watchers are well aware, NVIDIA typically does one more high-end card in their product stack, and that’s the ever-popular Titan. Not quite a flagship card and not really a consumer card, the Titan nonetheless holds an interesting spot in NVIDIA’s lineup as the fastest card most mere mortals can get their hands on, and these days as NVIDIA’s prime workstation compute card.

Last year around this time we saw the launch of the Titan V at the Neural Information Processing Systems (NeurIPS) conference. It seems like that went well for the company, as they’ve once again picked that venue for the launch of their latest Titan card, the aptly named Titan RTX. Set to hit the streets a bit later this month, the card is set to be NVIDIA’s big bruiser for workstation compute and ray tracing users – and anyone else who wants to throw down $2500 for a video card.

NVIDIA Compute Accelerator Specification Comparison

| | Titan RTX | Titan V | RTX 2080 Ti Founders Edition | Tesla V100 (PCIe) |
|---|---|---|---|---|
| CUDA Cores | 4608 | 5120 | 4352 | 5120 |
| Tensor Cores | 576 | 640 | 544 | 640 |
| Core Clock | 1350MHz | 1200MHz | 1350MHz | ? |
| Boost Clock | 1770MHz | 1455MHz | 1635MHz | 1370MHz |
| Memory Clock | 14Gbps GDDR6 | 1.7Gbps HBM2 | 14Gbps GDDR6 | 1.75Gbps HBM2 |
| Memory Bus Width | 384-bit | 3072-bit | 352-bit | 4096-bit |
| Memory Bandwidth | 672GB/sec | 653GB/sec | 616GB/sec | 900GB/sec |
| VRAM | 24GB | 12GB | 11GB | 16GB |
| L2 Cache | 6MB | 4.5MB | 5.5MB | 6MB |
| Single Precision | 16.3 TFLOPS | 13.8 TFLOPS | 14.2 TFLOPS | 14 TFLOPS |
| Double Precision | 0.51 TFLOPS | 6.9 TFLOPS | 0.44 TFLOPS | 7 TFLOPS |
| Tensor Performance (FP16 w/FP32 Acc) | 130 TFLOPS | 110 TFLOPS | 57 TFLOPS | 112 TFLOPS |
| GPU | TU102 (754mm²) | GV100 (815mm²) | TU102 (754mm²) | GV100 (815mm²) |
| Transistor Count | 18.6B | 21.1B | 18.6B | 21.1B |
| TDP | 280W | 250W | 260W | 250W |
| Form Factor | PCIe | PCIe | PCIe | PCIe |
| Cooling | Active | Active | Active | Passive |
| Manufacturing Process | TSMC 12nm FFN | TSMC 12nm FFN | TSMC 12nm FFN | TSMC 12nm FFN |
| Architecture | Turing | Volta | Turing | Volta |
| Launch Date | 12/2018 | 12/07/2017 | 09/20/2018 | Q3'17 |
| Price | $2499 | $2999 | $1199 | ~$10000 |

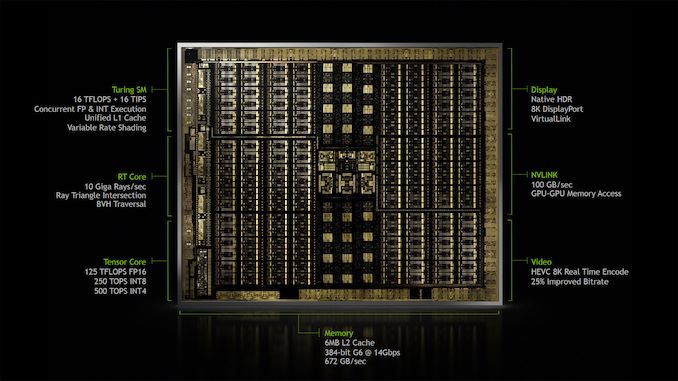

By the numbers, the Titan RTX looks a lot like a more powerful GeForce RTX 2080 Ti. And while it’s not nearly as consumer-focused, this is certainly the most relatable way to look at it. The card is based on the same TU102 GPU as NVIDIA’s consumer flagship, but while the RTX 2080 Ti used a slightly cut-down version of the GPU, Titan RTX gets a fully enabled chip, similar to NVIDIA’s best Quadro cards. Indeed along with the GeForce comparisons, the card is also functionally very close to the Quadro RTX 6000. Which is to say that while the Titan RTX doesn’t really fall under the category of a flagship, it’s not a second-tier card: it’s as powerful and as fast as NVIDIA’s best TU102 cards, so it’s very much at the top of its game.

Looking at its place in the market, with the launch of the Titan V last year, NVIDIA shifted away from the idea of a “prosumer” Titan that was closer to a GeForce with more memory and slightly higher performance, and more towards the idea of a straight-up professional grade workstation card for non-graphics tasks. Using a cut-down version of the server-grade GV100 GPU, Titan V filled this spot nicely, though it did come with some of the baggage that a server-grade GPU entails. Now that NVIDIA is back to using something closer to a workstation-grade GPU in the TU102, NVIDIA has once again shifted the balance between their cards a bit. But the Titan RTX remains the company’s workstation compute card, and thanks to the Turing architecture’s ray-tracing capabilities, is also now being pitched as a ray-tracing card for content creators.

Drilling a bit deeper, there are really three legs to Titan RTX that set it apart from NVIDIA’s other cards, particularly the GeForce RTX 2080 Ti. Raw performance is certainly one of those; we’re looking at about 15% better performance in shading, texturing, and compute, and around a 9% bump in memory bandwidth and pixel throughput.
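As a rough check on those percentages, the compute and bandwidth figures can be re-derived from the spec table. The sketch below assumes the usual convention that peak FP32 throughput is one fused multiply-add (two operations) per CUDA core per clock at the boost clock, and that memory bandwidth is bus width times per-pin data rate. The helper names are made up for illustration.

```python
# Back-of-the-envelope peak figures derived from the spec table.
# Assumption: one FMA (2 FLOPs) per CUDA core per clock, at the boost clock.

def peak_fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS."""
    return 2 * cuda_cores * boost_mhz * 1e6 / 1e12


def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical memory bandwidth in GB/sec (bits per transfer / 8)."""
    return bus_width_bits * data_rate_gbps / 8


titan_rtx_tflops = peak_fp32_tflops(4608, 1770)
rtx_2080ti_tflops = peak_fp32_tflops(4352, 1635)
titan_rtx_bw = memory_bandwidth_gbs(384, 14)
rtx_2080ti_bw = memory_bandwidth_gbs(352, 14)

# Relative advantage of the Titan RTX over the RTX 2080 Ti Founders Edition.
compute_gain = titan_rtx_tflops / rtx_2080ti_tflops - 1
bandwidth_gain = titan_rtx_bw / rtx_2080ti_bw - 1

print(f"Titan RTX:   {titan_rtx_tflops:.1f} TFLOPS, {titan_rtx_bw:.0f} GB/sec")
print(f"RTX 2080 Ti: {rtx_2080ti_tflops:.1f} TFLOPS, {rtx_2080ti_bw:.0f} GB/sec")
print(f"Compute gain: {compute_gain:.1%}, bandwidth gain: {bandwidth_gain:.1%}")
```

Under these assumptions the gains work out to about 14.6% in compute and 9.1% in memory bandwidth, which lines up with the rounded figures quoted above.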

However, arguably the lynchpin to NVIDIA’s true desired market of data scientists and other compute users is the tensor cores. They are present on all of NVIDIA’s Turing cards and are the heart and soul of NVIDIA’s success in the AI/neural networking field, but NVIDIA gave the GeForce cards a singular limitation that is nonetheless very important to the professional market. In their highest-precision FP16 mode, Turing’s tensor cores are capable of accumulating at FP32 for greater precision; however, on the GeForce cards this operation is limited to half-speed throughput. This limitation has been removed for the Titan RTX, and as a result it’s capable of full-speed FP32 accumulation throughput on its tensor cores.

NVIDIA Turing Tensor Core Relative Performance

| | Titan | GeForce | Quadro | Titan (Volta) |
|---|---|---|---|---|
| FP16 w/FP32 Accumulate | 1x | 0.5x | 1x | 1x |
| FP16 w/FP16 Accumulate | 1x | 1x | 1x | N/A |
| INT8 | 1x | 1x | 1x | N/A |
| INT4 | 1x | 1x | 1x | N/A |
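The half-rate limitation is visible in the tensor throughput figures from the spec table. As a hedged sketch, the calculation below assumes each tensor core performs 64 FP16 fused multiply-adds (128 operations) per clock, the per-core rate NVIDIA has published for Volta and Turing, and that the cards run at their rated boost clocks. The function name and the `fp32_acc_rate` parameter are illustrative only.

```python
# Assumption: each tensor core performs 64 FP16 FMAs (128 FLOPs) per clock.
FLOPS_PER_TENSOR_CORE_PER_CLOCK = 2 * 64


def tensor_tflops(tensor_cores: int, boost_mhz: float, fp32_acc_rate: float) -> float:
    """Theoretical FP16 tensor throughput with FP32 accumulate, in TFLOPS.

    fp32_acc_rate is the relative rate from the table above:
    1.0 for Titan RTX and Quadro, 0.5 for GeForce.
    """
    per_second = tensor_cores * FLOPS_PER_TENSOR_CORE_PER_CLOCK * boost_mhz * 1e6
    return per_second * fp32_acc_rate / 1e12


titan_rtx_tensor = tensor_tflops(576, 1770, fp32_acc_rate=1.0)
rtx_2080ti_tensor = tensor_tflops(544, 1635, fp32_acc_rate=0.5)

print(f"Titan RTX:   {titan_rtx_tensor:.0f} TFLOPS")
print(f"RTX 2080 Ti: {rtx_2080ti_tensor:.0f} TFLOPS")
```

With those assumptions the results come out at roughly 130 and 57 TFLOPS respectively, so most of the gap between the two cards comes from the accumulate rate rather than from the extra tensor cores or clockspeed.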

Given that NVIDIA’s tensor cores have nearly a dozen modes, this may seem like an odd distinction to make between the GeForce and the Titan. However for data scientists it’s quite important; FP32 accumulate is frequently necessary for neural network training – FP16 accumulate doesn’t have enough precision – especially in the big money fields that will shell out for cards like the Titan and the Tesla. So this small change is a big part of the value proposition to data scientists, as NVIDIA does not offer a cheaper card with the chart-topping 130 TFLOPS of tensor performance that Titan RTX can hit.
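To illustrate why the accumulator's precision matters, here is a small numerical sketch in plain Python. It emulates rounding with the standard library's `struct` half-precision and single-precision formats; this is an assumption-laden toy for showing rounding behavior in a long sum of products, not a model of how the tensor cores are actually implemented. All names are hypothetical.

```python
import struct


def round_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_product(a, b, round_accumulator):
    """Dot product of FP16 inputs, rounding the running sum after every add."""
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two FP16 values is exactly representable in a double.
        product = round_fp16(x) * round_fp16(y)
        acc = round_accumulator(acc + product)
    return acc


n = 4096
activations = [1.0] * n
weights = [0.3] * n

# Reference result: the same FP16 inputs summed in double precision.
exact = sum(round_fp16(x) * round_fp16(y) for x, y in zip(activations, weights))
fp16_acc = dot_product(activations, weights, round_fp16)
fp32_acc = dot_product(activations, weights, round_fp32)

print(f"reference:       {exact}")
print(f"FP16 accumulate: {fp16_acc}")
print(f"FP32 accumulate: {fp32_acc}")
```

In this toy case the FP32 accumulator tracks the reference sum, while the FP16 accumulator stalls well short of it: once the running total is large enough, each new product is smaller than half the gap between adjacent FP16 values and gets rounded away.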

Similarly, the final leg for the Titan RTX is memory capacity. Whereas the GeForce RTX 2080 Ti is an 11GB card, Titan RTX is a 24GB card. For gamers even 11GB is generally overkill; however, the extra 13GB of VRAM can make or break working with a large dataset. NVIDIA knows their market very well, and as we’ve seen time and time again, has market segmentation down to a fine art.

Market positioning aside, the launch of the Titan RTX also means that the rest of the tensor performance benefits are finally coming to a Titan-level card. Turing introduced support for the lower precision INT8 and INT4 modes, which help to further set apart the Titan RTX from last year’s Titan V. Overall, data scientists who would otherwise be looking at a Titan V are looking at a doubling in VRAM capacity, a roughly 18% improvement in tensor performance (130 TFLOPS versus 110 TFLOPS, with far more at lower precisions), and all the other improvements of the Turing architecture. And if that’s not enough, NVIDIA is also enabling NVLink functionality this time around (it was disabled on Titan V), so workstation users can also scale out for more performance with a second Titan RTX by linking up the two cards.

Meanwhile NVIDIA is also chasing after content creators with this card a bit. Data scientists are still the bread and butter, but given that Turing also made significant investments into ray tracing, NVIDIA would seem to also be experimenting a bit here to see what kind of a market there is for a high-end yet non-Quadro card for ray tracing. Strictly speaking the Quadro RTX 6000 should be superior here (if only due to drivers & support), however it’s also a good deal more expensive. So it will be interesting to see what kind of a market NVIDIA finds for a $2500 ray tracing card that’s not already served by tried & true Quadro or the much cheaper GeForce.

And while NVIDIA is the first to note that the card is not really for gaming, even the Titan V sold to some gamers out there since Titans use the GeForce driver stack, and I expect much the same here. While the potential 15% performance improvement by no means justifies the greater-than 2x jump in cost, for the crazy rich out there, I do expect the Titan RTX to be a little better suited to gaming than the Titan V was. Whereas the Titan V was an awkward card in terms of game support due to the fact that it was the only Volta architecture card to use the GeForce drivers, Turing is everywhere. So the Titan RTX should behave more like a slightly faster 2080 Ti, without so many of the performance inconsistencies we saw when trying to game on the Titan V.
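To put numbers on that price gap, the sketch below compares list price against peak FP32 throughput using figures from the spec table. Peak FP32 is used here only as a stand-in for gaming performance, which is an assumption; real-world frame rates will not scale exactly with it.

```python
# List price and peak FP32 throughput, taken from the spec table.
cards = {
    "Titan RTX": {"price_usd": 2499, "fp32_tflops": 16.3},
    "RTX 2080 Ti FE": {"price_usd": 1199, "fp32_tflops": 14.2},
}

titan = cards["Titan RTX"]
geforce = cards["RTX 2080 Ti FE"]

price_ratio = titan["price_usd"] / geforce["price_usd"]
perf_ratio = titan["fp32_tflops"] / geforce["fp32_tflops"]

# GFLOPS of peak FP32 throughput per dollar of list price.
gflops_per_dollar = {
    name: spec["fp32_tflops"] * 1000 / spec["price_usd"]
    for name, spec in cards.items()
}

print(f"Price ratio: {price_ratio:.2f}x, performance ratio: {perf_ratio:.2f}x")
for name, value in gflops_per_dollar.items():
    print(f"{name}: {value:.1f} GFLOPS per dollar")
```

On these figures the Titan RTX costs a little over twice as much for roughly 15% more peak throughput, so per dollar it delivers a bit more than half of what the GeForce card does.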

In terms of design, like its predecessors, the Titan RTX also follows very closely in the stylings of the GeForce family. Notably NVIDIA is using an open-air double-fan cooler here, which NVIDIA switched to on this generation, and not a traditional blower like the Titan V or the current Quadro cards. As we’ve already seen on the GeForce cards this maximizes airflow and brings down temperatures, however it’s a bit more of a mixed bag for the Titan since NVIDIA allows pairing the cards up with NVLink. Open air cooled cards require a little more care here, whereas the blowers are pretty much set-it-and-forget-it in a workstation. However with a TDP of 280W – the highest of the Turing cards and 30W higher than the Titan V – one can see why NVIDIA would be interested in maximizing cooling performance above other priorities. This also means that in theory, the Titan RTX should average slightly higher clockspeeds than the Quadro cards, as it has a bit more cooling and TDP headroom to play with; so at least for now, it likely is the fastest of all the TU102 cards.

NVIDIA's Nickname for the Titan RTX is "T-Rex"

Past that, this is a pretty typical card in terms of NVIDIA design. It gets the same port arrangement as the other Quadro and GeForce cards, with 3x DisplayPort 1.4 outputs, an HDMI 2.0b port, and a USB-C port that supports DP alt mode as well as the VirtualLink standard for VR headsets. Unique to the Titan of course is its golden color scheme, lest it be confused with a GeForce. NVIDIA has nicknamed the card the T-Rex, and I’m fairly sure this is the first time anyone has offered a T-Rex in gold.

In any case, for the data scientists and whoever else wants to get their hands on what’s sure to be NVIDIA’s tensor terror for workstations, be prepared to set aside some cash. $2500 to be precise. Atypically for NVIDIA, this price is actually down a bit from the $3000 Titan V – TU102 is cheaper to make, especially without the HBM2 – but it’s still going to be one expensive card. Meanwhile NVIDIA tells us that we should expect to see the card become available on their website later this month.

83 Comments


Ryan Smith - Tuesday, December 4, 2018 - link

Likely not.

alpha754293 - Tuesday, December 4, 2018 - link

"Strictly speaking the Quadro 6000 should be superior here (if only due to drivers & support), however it’s also a good deal more expensive."

Why would a Fermi card be superior than the Turing card?

(You know that there IS an actual, separate, and much older Quadro 6000 model, right?)

(Source: https://en.wikipedia.org/wiki/List_of_Nvidia_graph...

DeepLearner - Tuesday, December 4, 2018 - link

I was really excited to see they'd enabled nvlink for a Titan but disappointed that it only supports 2 cards. The V100 does 6. I'm planning on building a new neural net training machine next year with some grant money and I want 4 GPUs. 4 of these babies would be perfect but I'd have to do some serious thinking of having 2 pairs of nvlinked cards. I have no idea what that would do for performance.

Socius - Wednesday, December 5, 2018 - link

Overpriced garbage. I've bought SLI every generation since the 580 came out. 2x gtx 580, 4x gtx 680, 3x gtx titan, 3x gtx titan Maxwell, 2x gtx titan pascal. And I bought 2 of the $2000 Asus 4K 144Hz monitors. I bought the latest 1tb ipad pro 12.9 and iPhone XS max 512gb outright. I'm not afraid to spend money on things that are worth it. But this RTX generation of cards is absolute garbage, and a complete rip off. And the RTX Titan makes that even worse.

This is by far the worst generational improvement Nvidia has had in at least 9 years. Yet it is the greatest generational price increase in at least 9 years. 2 years wait after the last generation came out, for a whopping 25-30% FPS increase. A much touted ray tracing feature that's only has 1/4th of the power it needs to be useful. A degradation in VRAM for gamer grade cards (Titan XP was still a gamer card, albeit a high price one, due to the price similarity with the RTX 2080ti price), going from 12GB to 11GB. And a ridiculously overpriced 24GB VRAM card that is no longer a gamer card at all.

Just absolute trash. And this is coming from a former hardcore Nvidia Fanboy. Ever since their stock went up from the AI and Mining rush, they've become 10x more greedy than normal, and care far more about gouging customers than about advancing gaming performance.

Here's hoping AMD forces them to be a bit more competitive next year, along with a node shrink. Competition is the greatest thing for consumers. Heck, due to competition between ISPs, a 150mbps plan that was $100/month 2 years ago has now been replaced with a 600mbps plan for $45 because new competition showed up in town.

I'm just incredibly disappointed. Not just by Nvidia for being so greedy, but disappointed for the limited advancement in gaming. While Apple comes out with 50% GPU performance increase every years, Nvidia gives us a price increase and just 25-30% increase after 2 years.

cbns - Thursday, December 6, 2018 - link

couldn't have said it better myself.

if anyone from nvidia is reading this, take my big middle finger you nasty, greedy, monopoly monsters

Beaver M. - Friday, December 7, 2018 - link

Youre mainly talking about high end. But even the mid range cards are completely useless this generation.

Why would I buy a 2070 for that money, if a 2080 is only a few bucks away? But then again, why would I buy a 2080 with only 8 GB? And why would I dish out that much money for a Ti, while its only 10-15% faster than a non-Ti?

As you said, this isnt explainable with logic, its just pure and utter greed. But enough people seem to still buy into this crap, and so they will keep doing it.

I am actually pretty angry, because I need a new GPU, since mine is becoming too slow. But there simply arent any GPUs I would buy. And I do have the money for a 2080 ti, but that price is just perverse and I would be a hypocrite if I would buy it.

Maybe they release a 2080 with 12 GB, then maybe I would grab one. But also only if the price wouldnt go up much.

Beaver M. - Friday, December 7, 2018 - link

Oh and I would have bought a 1080 Ti, but in their greed they stopped production of it, because it would be the MUCH better choice than a 2080. Even for years to come.

Luke212 - Monday, December 10, 2018 - link

Please test turing cores, as the Geforce RTX turing cores are handicapped compared to the Quadro RTX so we need to know if the Titan RTX is also disabled or if its more like the Quadros

DeepLearner - Monday, December 10, 2018 - link

The reason none of you are excited about this is because you don't need that much on chip memory. People running big deep learning applications do! It's for us! The hottest NLP method right now is called BERT. It was trained on a TPU with 64 GB VRAM, and the code they published doesn't scale to multiple GPUs. So batch size has to go down when you run it on a GPU, and this has an affect on model performance. Honestly, with 2 of these linked over nvlink I could treat it as a single 48 GB gpu and nearly run BERT fine-tuning training as it was meant to be. I just wish it came with more links supported. Great looking card, but disappointed about only 2 cards being linkable.

evernessince - Wednesday, December 12, 2018 - link

Only problem with that is certain features designed for deep learning are disabled on this card. So yeah...