Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST. Posted in: CPUs, Intel, LGA1200, 11th Gen, Rocket Lake, Z590, B560, Core i9-11900K

CPU Tests: Office and Science

Our previous set of ‘office’ benchmarks has often been a mix of science and synthetics, so this time we wanted to keep our office section purely on real-world performance.

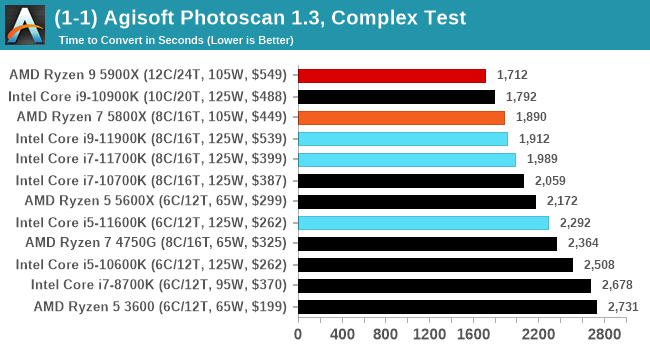

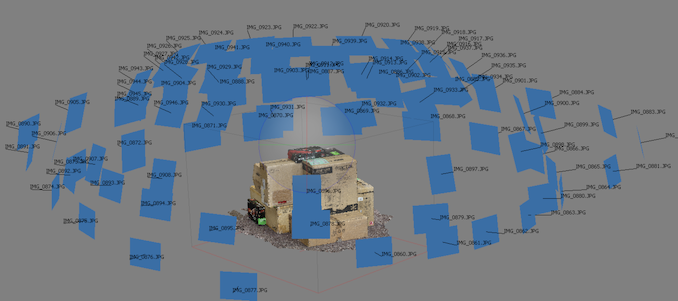

Agisoft Photoscan 1.3.3

The concept of Photoscan is about translating many 2D images into a 3D model, so the more detailed the images, and the more of them you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages; some parts of each stage are single-threaded and others multi-threaded, and there is some cache/memory dependency as well. For the more variably threaded portions of the workload, features such as Speed Shift and XFR can take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

With the update to version 1.3.3, the Agisoft software now supports command-line operation. Agisoft provided us with a set of new images for this version of the test, and a Python script to run it. We’ve modified the script slightly, changing some quality settings to keep the benchmark suite's run time manageable and adjusting how the final timing data is recorded. The Python script writes the results file in a format of our choosing. For our test we record the time for each stage of the benchmark, as well as the overall time.
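The per-stage timing described above can be sketched in C++ with std::chrono. This is a minimal illustration under stated assumptions, not the Agisoft-provided Python script: the stage names and callables are hypothetical stand-ins for the real Photoscan stages, and the overall time is taken here as the sum of the stage times.

```cpp
#include <chrono>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// One timed result per stage, in seconds.
struct StageTiming {
    std::string name;
    double seconds;
};

// Runs each stage in order, timing it with a monotonic clock.
// Stage names and bodies are supplied by the caller (hypothetical here).
std::vector<StageTiming> time_stages(
        const std::vector<std::pair<std::string, std::function<void()>>>& stages) {
    std::vector<StageTiming> results;
    for (const auto& [name, run] : stages) {
        const auto start = std::chrono::steady_clock::now();
        run();
        const auto stop = std::chrono::steady_clock::now();
        results.push_back({name, std::chrono::duration<double>(stop - start).count()});
    }
    return results;
}

// Overall time, assumed here to be the sum of the individual stage times.
double total_seconds(const std::vector<StageTiming>& timings) {
    double total = 0.0;
    for (const auto& t : timings) total += t.seconds;
    return total;
}
```

A caller would register the four stages in order (for example with hypothetical labels such as "align", "dense_cloud", "mesh" and "texture") and log both the per-stage list and total_seconds(). Summing the stages rather than timing end to end excludes any work done between stages, which may or may not match how the real script records its overall figure.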

In this variably threaded load, the Core i9-10900K sits above the Rocket Lake parts.
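One way to reason about a 10-core Comet Lake chip staying ahead of an 8-core Rocket Lake chip on a mixed single/multi-threaded load is Amdahl's law. The sketch below is illustrative only: the parallel fraction and the per-core speed factor are assumed values, and the model ignores turbo behaviour, cache and memory effects, and SMT.

```cpp
// Amdahl's law: speedup over a single core when a fraction p of the work
// parallelises perfectly across n cores and the remaining (1 - p) stays serial.
double amdahl_speedup(double p, int n) {
    return 1.0 / ((1.0 - p) + p / n);
}

// Relative throughput of a chip whose individual cores are `per_core` times
// as fast as a reference core (an assumed IPC x clock factor, not a measurement).
double relative_throughput(double p, int n, double per_core) {
    return per_core * amdahl_speedup(p, n);
}
```

With an assumed p = 0.9, amdahl_speedup(0.9, 10) is about 5.26 while amdahl_speedup(0.9, 8) is about 4.71, so under these assumptions the 8-core part needs roughly a 12% per-core advantage just to tie the 10-core part.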

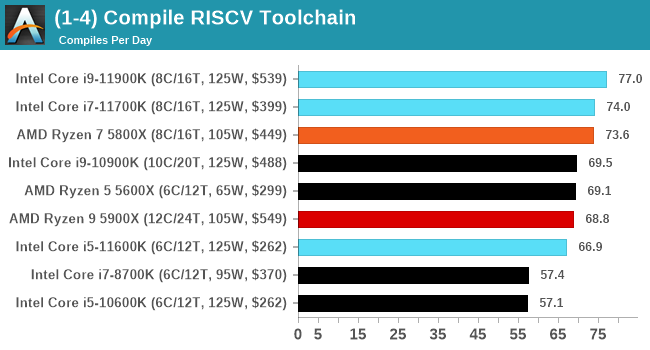

RISC-V Toolchain Compile

The latest addition to our suite is the RISC-V toolchain compile from the GitHub source. This set of tools enables users to build software for a RISC-V platform; however, the tools themselves first have to be built. For our test, we run a complete fresh build of the toolchain, including from-scratch linking. This makes the test not a straightforward test of an updated compile on its own, but it does form the basis of an ab initio analysis of system performance, given its range of single-threaded and multi-threaded workload sections.

One place where Intel is winning in absolute terms is our compile-from-scratch test. We re-ran the Intel numbers with the latest microcode due to a critical issue. AMD's best results here come from its single-chiplet designs, but Intel ekes out a small lead.

Science

In this version of our test suite, all the science-focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian motion, calculating digits of Pi, molecular dynamics, and, for the first time, an artificial intelligence benchmark covering both inference and training that works under Windows using Python and TensorFlow. Where possible these benchmarks have been optimized with the latest vector instructions, except for the AI test: we were told that while it uses Intel’s Math Kernel Library, the library is optimized more for Linux than for Windows, so it gives an interesting result when unoptimized software is used.

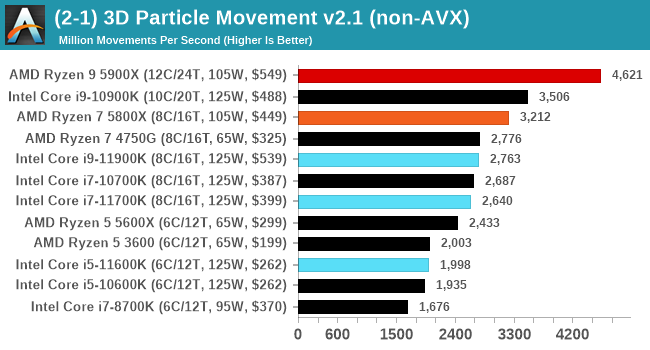

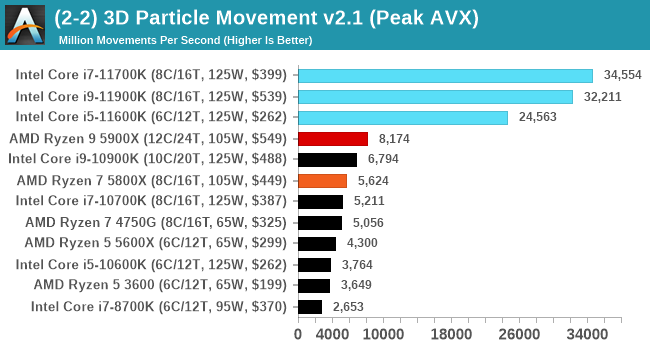

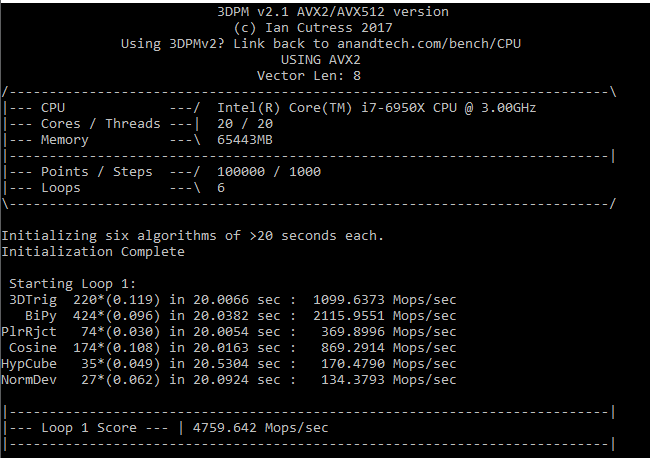

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of this benchmark, designed to simulate semi-optimized scientific algorithms taken directly from my doctoral thesis. It involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and by reducing the number of double->float->double recasts the compiler was adding in.
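The change from pass-by-value to pass-by-reference, and keeping the maths in double throughout, can be illustrated with a small C++ sketch. The Particle struct, the step length, and the choice of a uniformly random direction on a sphere are assumptions made for illustration; this is not the actual 3DPM source, which uses its own set of movement algorithms.

```cpp
#include <cmath>
#include <random>

// Hypothetical particle layout for illustration only.
struct Particle {
    double x = 0.0, y = 0.0, z = 0.0;
};

// Moves p by one step of length `step` in a uniformly random 3D direction.
// The particle is taken by reference, so it is updated in place with no copy,
// and every intermediate stays in double (no double->float->double recasts).
void move_particle(Particle& p, double step, std::mt19937_64& rng) {
    constexpr double kPi = 3.14159265358979323846;
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    const double cos_theta = 2.0 * unit(rng) - 1.0;  // uniform in [-1, 1): uniform on the sphere
    const double sin_theta = std::sqrt(1.0 - cos_theta * cos_theta);
    const double phi = 2.0 * kPi * unit(rng);        // uniform in [0, 2*pi)
    p.x += step * sin_theta * std::cos(phi);
    p.y += step * sin_theta * std::sin(phi);
    p.z += step * cos_theta;
}

// Hypothetical v2.0-style variant: copies the struct in and out on every call.
Particle move_particle_by_value(Particle p, double step, std::mt19937_64& rng) {
    move_particle(p, step, rng);
    return p;
}
```

The by-value variant has to copy the struct into the call and back out again on every move; in a tight loop over many particles the reference version avoids that traffic, although an optimizing compiler may elide some of those copies on its own.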

The initial version of v2.1 is a custom C++ binary of my own code, with flags in place to allow multiple loops of the code and a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to a file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements.
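The run loop described above (six passes, averaged) and the movements-per-second unit can be sketched as follows. The workload callback and its return value are hypothetical stand-ins for the particle kernel; only the repeat count and the reporting unit come from the description of the test.

```cpp
#include <chrono>
#include <cstdint>
#include <functional>
#include <numeric>
#include <vector>

// Converts `movements` particle moves completed in `seconds`
// into millions of movements per second.
double mops(std::uint64_t movements, double seconds) {
    return static_cast<double>(movements) / seconds / 1e6;
}

// Runs the (hypothetical) workload `runs` times, six by default,
// scores each run in millions of movements per second, and returns the mean.
// The workload returns how many movements it performed.
double average_mops(const std::function<std::uint64_t()>& workload, int runs = 6) {
    std::vector<double> scores;
    for (int i = 0; i < runs; ++i) {
        const auto start = std::chrono::steady_clock::now();
        const std::uint64_t moved = workload();
        const std::chrono::duration<double> elapsed =
            std::chrono::steady_clock::now() - start;
        scores.push_back(mops(moved, elapsed.count()));
    }
    return std::accumulate(scores.begin(), scores.end(), 0.0) / scores.size();
}
```

A caller would print the returned average with std::cout so it can be redirected to a file. Averaging the per-run rates, rather than dividing total movements by total time, weights every run equally.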

When AVX-512 comes into play, everyone else goes home. This is the easiest and clearest win for Intel.

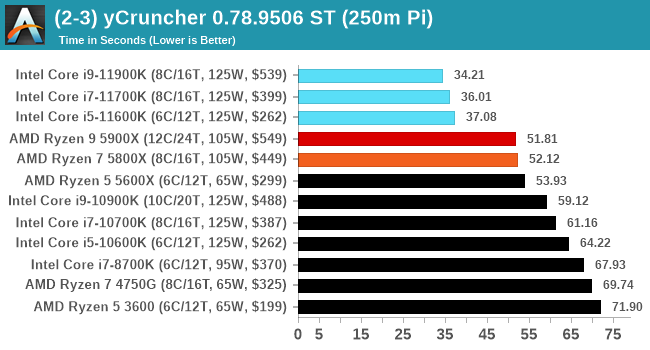

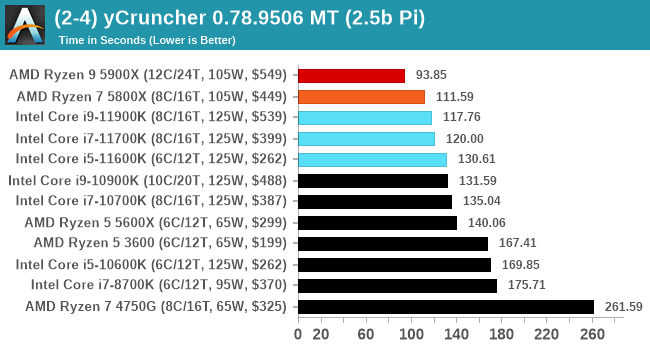

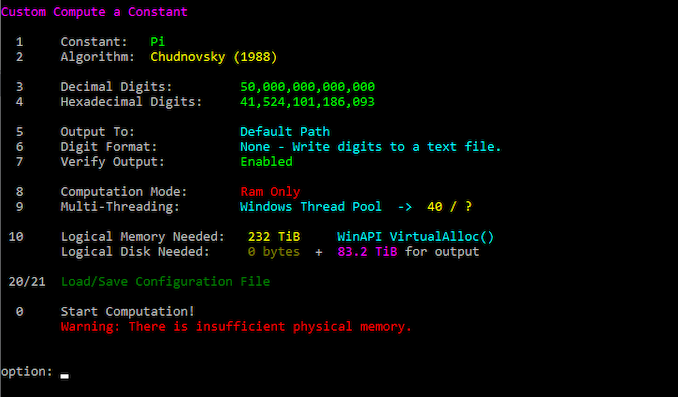

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of the answers will point to some colossal supercomputer built into a mountain by a super-villain. Fortunately nothing could be further from the truth: the computer with the record is a quad-socket Ivy Bridge server with 300 TB of storage. The software that was run to set that record was y-cruncher.

Built by Alex Yee over the better part of a decade and then some, y-cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs to around 500,000 lines of code, and he has multiple binaries, each optimized for a different family of processors, such as Zen, Ice Lake, and Skylake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In ST and MT mode we calculate 250 million digits.

In ST mode, the result is dominated by the AVX-512 instructions, whereas in MT mode memory performance also plays a part.
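y-cruncher itself computes Pi with the Chudnovsky series and fast large-number multiplication, so the sketch below is not its algorithm. It is the textbook Rabinowitz-Wagon spigot, included only to illustrate what "calculating digits of Pi" means at the smallest scale: digits are produced one at a time using only integer arithmetic, with work growing quadratically in the number of digits requested.

```cpp
#include <string>
#include <vector>

// Rabinowitz-Wagon spigot: returns (approximately) the first n decimal digits of pi.
// pi = 2 + 1/3 * (2 + 2/5 * (2 + 3/7 * (2 + ...))), held as a mixed-radix array.
std::string pi_digits(int n) {
    const int len = 10 * n / 3 + 1;       // enough mixed-radix terms for n digits
    std::vector<long long> a(len, 2);
    std::string out;
    int nines = 0;                        // run of held 9s awaiting a possible carry
    int predigit = 0;                     // last digit not yet known to be final
    for (int j = 0; j < n; ++j) {
        long long q = 0;
        // Multiply by 10 and normalise from the least significant term upwards.
        for (int i = len; i >= 1; --i) {
            long long x = 10 * a[i - 1] + q * i;
            a[i - 1] = x % (2 * i - 1);
            q = x / (2 * i - 1);
        }
        a[0] = q % 10;
        q /= 10;                          // q is the next (provisional) digit
        if (q == 9) {
            ++nines;                      // hold it: a later carry may turn it into 0
        } else if (q == 10) {
            out += char('0' + predigit + 1);   // carry into the held digits
            out.append(nines, '0');
            predigit = 0;
            nines = 0;
        } else {
            out += char('0' + predigit);
            predigit = static_cast<int>(q);
            out.append(nines, '9');
            nines = 0;
        }
    }
    out += char('0' + predigit);
    return out.substr(1);                 // drop the leading 0 the algorithm emits first
}
```

The final emitted digit can occasionally be wrong because of a pending carry, so a caller wanting n reliable digits would request a few extra.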

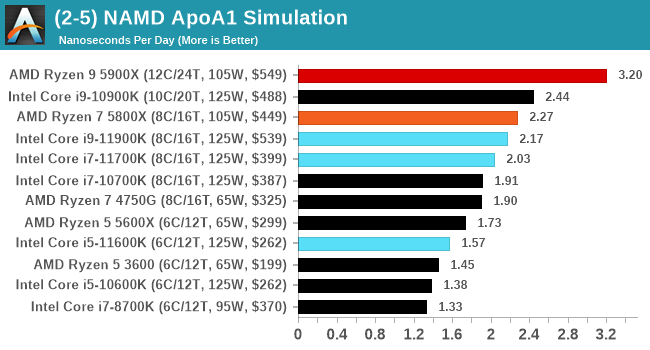

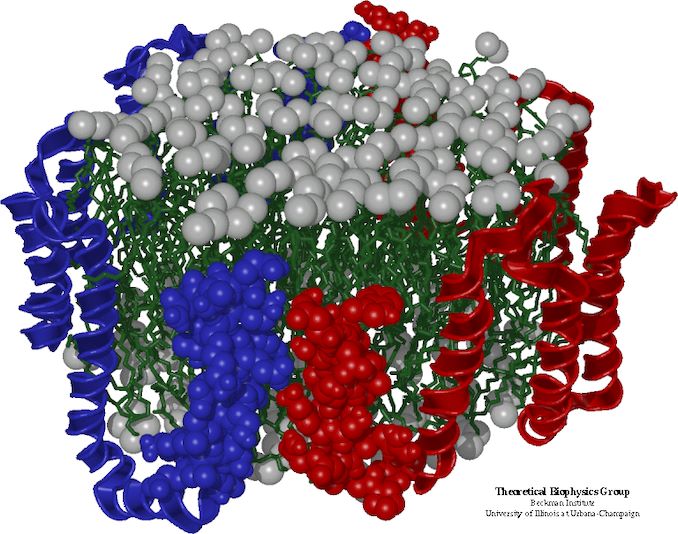

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modeling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, scientists behind the research can calculate the activation energies required for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, in understanding what happens when proteins misfold, and in what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontier supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation; however, we stretch that out to 10 minutes to take a more sustained value, as by that point most turbo limits should have expired. The simulation itself works with 2 femtosecond timesteps. We use version 2.13 as this was the recommended version at the time of integrating this benchmark into our suite. The latest nightly builds we’re aware of have started to enable support for AVX-512; however, for consistency in our benchmark suite, we are staying with 2.13. Other software that we test with does have AVX-512 acceleration.
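The ‘nanoseconds per day’ figure follows directly from the timestep and the simulation speed. The helper below is a small sketch of that conversion; the steps-per-second value in the example is hypothetical, not a measured result.

```cpp
// Converts simulation speed into NAMD-style "nanoseconds per day".
// timestep_fs: integration timestep in femtoseconds (2 fs in our runs).
// steps_per_second: MD steps completed per wall-clock second (hypothetical input).
double ns_per_day(double timestep_fs, double steps_per_second) {
    constexpr double kFsPerNs = 1e6;           // 1 ns = 1,000,000 fs
    constexpr double kSecondsPerDay = 86400.0;
    return timestep_fs / kFsPerNs * steps_per_second * kSecondsPerDay;
}
```

For example, with the 2 fs timestep used here, a machine completing a hypothetical 100 steps per second would simulate 2 fs × 100 × 86,400 = 17,280,000 fs, or 17.28 ns of protein dynamics per day.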

The Rocket Lake parts show some improvement over previous Intel generations, but the 10-core Comet Lake still finishes ahead of Rocket Lake.

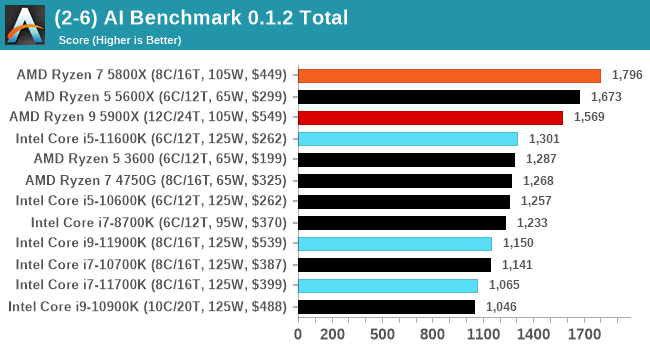

AI Benchmark 0.1.2 using TensorFlow

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast-moving, fast-paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux.

Thankfully, however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s usable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6.

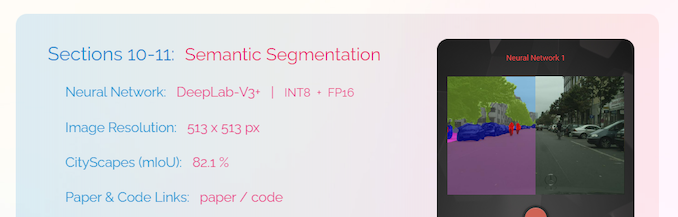

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both inference and training at various input sizes and batch sizes, except for translation, which only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, they use Intel’s Math Kernel Library (MKL) for Windows, and they’re seeing some severe drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, beyond a certain number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

Every generation of Intel seems to regress in AI Benchmark, most likely due to MKL issues. I have previously flagged the issue to Intel; however, I have not heard of any progress to date.

279 Comments


blppt - Tuesday, March 30, 2021

I disagree. I had a 9590 (which shipped WITH a small AIO cooler!) and the thing was shaky at best for stability, easily topping 90C at stock settings. Not the mobo's fault either: I had the top-end ASUS CHVF-Z 990FX, which was such a mature chipset it practically had grey hairs.

TheinsanegamerN - Wednesday, March 31, 2021

The 9000 series all had stability issues. Backing off one clock bin or tinkering with voltage would usually fix them.

Bulldozer didn't have the thermal density issues modern CPUs have. If you had the cooling, it would work. Bulldozer's issue was that the sheer amount of heat being generated would overwhelm many CPU coolers of the time, which were built around the more traditional ~100W power draw of Intel i7s and the ~125-140W of Phenoms. The 200W+ that Bulldozer was pulling was new territory.

Oxford Guy - Wednesday, March 31, 2021

Certain motherboard makers played loose with the VRMs. ASRock in particular was known for its 9000-series-certified boards frying. MSI was also bad. Only a few boards were suited to the 9000 series, and any enthusiast would have skipped the 9000 series in favor of one of the lower-leakage chips, which could be overclocked to the same 4.7 GHz. 5 GHz with Piledriver was not stable, requiring too much voltage. ASUS tried to hide that by under-reporting the voltage used in its flagship board. 4.4 GHz was optimal, 4.5 was okay, and 4.7 was as far as one wanted to go for frequent use. That's with the lower-leakage 'E' parts.

"The Stilt" said AMD would have sent the 9000 series to the crusher had it not come up with an after-the-fact lower standard for leakage. So, Hruska gets his take spectacularly wrong in his Rocket Lake article. The 9000 series was not aimed at 'the enthusiast faithful'. Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them — like one guy who claimed his was running at 5.1 GHz 24/7.

It was aimed at people who could be tricked by the 5 GHz number. It was the most cynical cash grab possible. Not only did AMD offer only 4 FPU cores (important for gaming), it offered a CPU that was priced into the stratosphere while having unfixable single-core performance.

Piledriver's fatal flaw was its abysmal single-thread performance, not its power consumption. It could have been okay enough with the lower-leakage standard (and a more strict socket standard as Zen 1 had). But, reportedly, the 32nm SOI wasn't very good for some time (Bulldozer and the first generation of Piledriver), so AMD let the AM3+ spec be pretty loose (although not as loose as FM).

Overclocking Piledriver even to 5 GHz wasn't enough to give it decent single-thread performance.

I do have to agree that the 9590 was the single worst consumer CPU product ever released. It even edges out the Pentium III that wasn't stable — since that one was actually pulled from the market. Not only was the 9590 100% cynical exploitation of consumer ignorance, it was really bad technologically. Figures that Hruska would praise it.

(If, though, one lived in Iceland with a solar array backed by an iron-nickel battery complex, the 9590 would have been okay for playing Deserts of Kharak, provided one didn't buy it at its original price.)

blppt - Thursday, April 1, 2021

"Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them — like one guy who claimed his was running at 5.1 GHz 24/7."

What is especially sad here is that even IF he managed to pump the 250-300W into that 9590 to run at 5.1 (all cores), it was probably still slower than a 4790K at stock speeds.

Oxford Guy - Saturday, April 3, 2021

In single core, certainly. However, 2011 is stamped onto the spreaders of Piledriver and it hit the market in 2012. The 4790K hit the market in Q2 2014.

In 2014, the only FX to consider was the 8320E. Not only was it cheap (at least at MicroCenter), it could run in any AM3+ board without killing it, and it could be overclocked better than a 9000 series with anything below nitrogen, due to its much superior leakage.

The 8320E was the only FX worth anyone’s time. Paired with a UD3P board it could do 4.4 GHz readily and could manage 4.7 with a fast fan angled at the VRM sink. Total cost was very low for the CPU and board from MicroCenter, which is why I recommended that setup to the tightest budget people. But, the bad single core was a problem for frametime consistency.

AMD should have been publicly tarred and feathered by the tech press for the 9590. All the light mockery wasn’t enough.

Spunjji - Friday, April 9, 2021

Broadly agreed, but I'd note that the 6300 was also reasonable if you were on a painfully low budget. I suggested it to a friend (his alternative was a Sandy Bridge i3) and it lasted him until a year back as his main gaming system. It's now moved on to another friend, who still uses it for games. Those chips have aged surprisingly well, all things considered, though it is probably holding his RX 470 back a little bit.

Oxford Guy - Wednesday, March 31, 2021

• The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then.

• The overclocker 'The Stilt' said the 9000 series is not the chip to judge the design by, because it has the worst leakage characteristics and would have been sent to the crusher had AMD not decided to create a lower standard after the fact. Instead, the chips that should be used to represent Piledriver are the 'E' series. They have the lowest leakage and can manage the same 4.7 GHz the 9590 uses with much more reasonable (although still non-competitive) demands. The 9000 series was really AMD's gift to Intel, making the bad, ancient Piledriver design look much worse.

• AMD was a small cash-strapped company, thanks to Intel's monopoly abuses. When AMD was leading the x86 industry Intel kept it from getting the profit. So, Piledriver, although very bad in a number of ways, will never be as bad as Rocket Lake. The 9000 series is the only exception, though, since it was a purely cynical cash grab by AMD, using '5 GHz' to sucker people.

blppt - Thursday, April 1, 2021

"The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then."

As I stated, in the (exceedingly rare) case where a game or app can saturate all 8 cores, when the 9590 was in its prime, it could be competitive.

That almost never happened, especially in games. About the only 2 I can think of offhand that could do that in the 9590's prime were GTA5 and Company of Heroes 2. And even then, you were using 150+ more watts to get the same or slightly better performance than Intel's high-end quad cores. Along with the required AIO water cooling and required high-end mobo with a beastly VRM setup. As far as I know, only 3 pricey mobos were approved for the 9590: my CHVF-Z, one Gigabyte board, and an ASRock.

9590 was one of the worst cpus ever. Probably the single worst (special edition) cpu. I had one for years.

This rocket lake, while disappointing, hot, and power consuming, is consistently competitive in every game versus its direct competitors. The 9590 cannot come close to saying that.

Oxford Guy - Saturday, April 3, 2021

I cite Deserts of Kharak because it’s the only game I’ve seen put the FX ahead of Intel below 4K.

Not only would the game need to be able to leverage 8 integer cores without needing more than 4 FPU cores, it would have to be able to saturate a narrow, deep pipeline and not rely heavily on single-thread IPC. It should also scale with clock and not need the best RAM and L3 performance. RTS is probably the best genre for the Piledriver design.

Gondalf - Tuesday, March 30, 2021

The AMD FX-9590 did not have AVX-512. Very high performance has a cost.

Try to imagine Zen 3 with AVX-512: it would not be a champion of low power consumption at all.

If you do not like high power draw, simply disable AVX-512.