Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST- Posted in

- Laptops

- Apple

- MacBook

- Apple M1 Pro

- Apple M1 Max

Huge Memory Bandwidth, but not for every Block

One highly intriguing aspect of the M1 Max, maybe less so for the M1 Pro, is the massive memory bandwidth that is available for the SoC.

Apple was keen to market its 400GB/s figure during the launch, but the number is so far beyond anything else in this class that it leaves a lot of open questions about how the chip can actually take advantage of that much bandwidth, so it’s one of the first things to investigate.

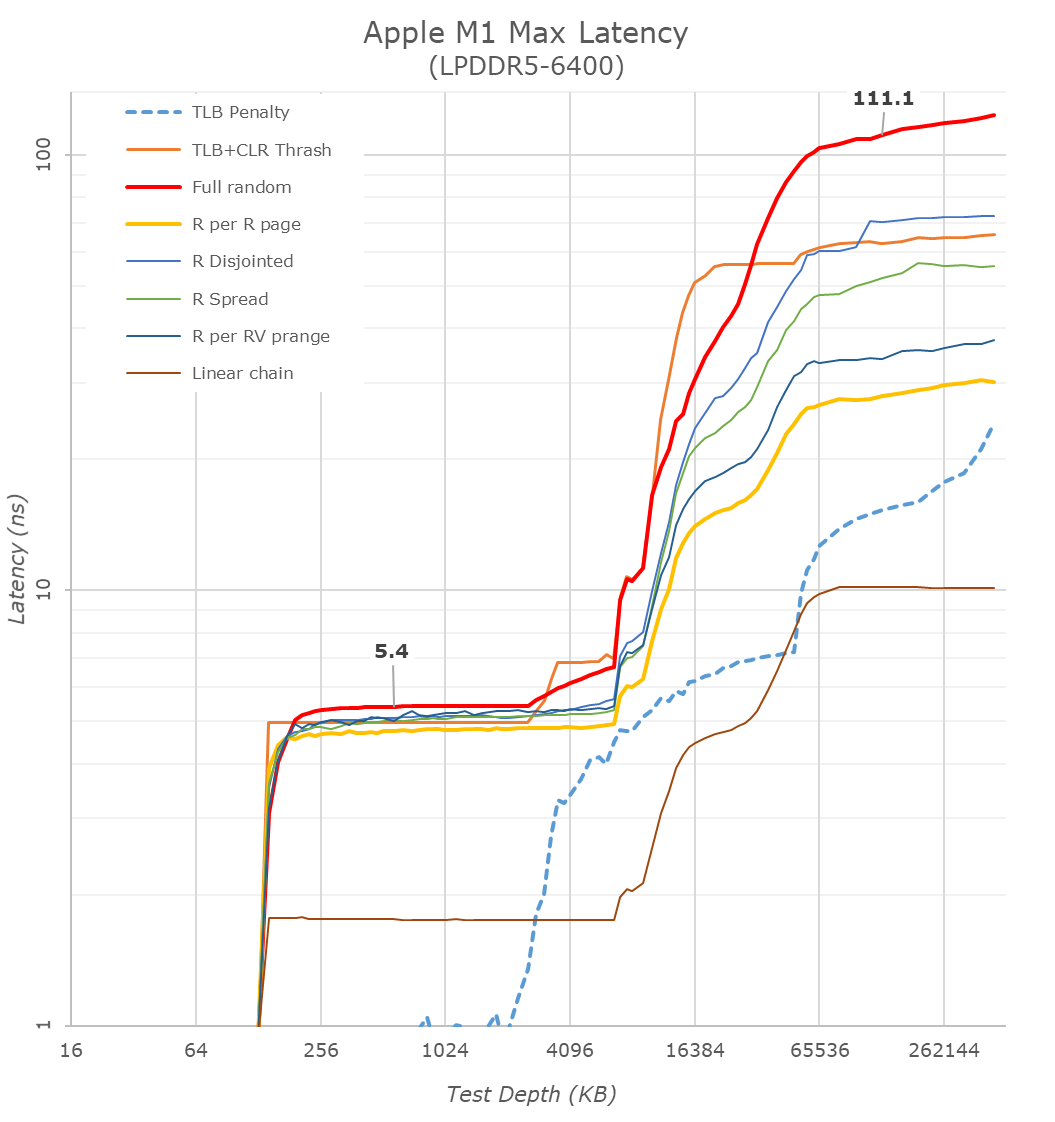

Starting off with our memory latency tests, the new M1 Max changes system memory behaviour quite significantly compared to what we’ve seen on the M1. On the core and L2 side of things there haven’t been any changes, and consequently we don’t see much difference in the results: it’s still a 3.2GHz peak core with 128KB of L1D at a 3-cycle load-to-load latency, and a 12MB L2 cache.
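To put the 3-cycle L1D figure into time units, the cycle count can be divided by the clock frequency. The snippet below is only a worked conversion using the numbers quoted above; the helper name `cycles_to_ns` is made up for illustration.

```python
def cycles_to_ns(cycles: float, freq_ghz: float) -> float:
    """Convert a latency in core cycles to nanoseconds at a given clock."""
    # 1 GHz means one cycle per nanosecond, so ns = cycles / GHz.
    return cycles / freq_ghz


# Figures from the text: 3 cycles of load-to-load latency at a 3.2GHz peak clock.
l1d_latency_ns = cycles_to_ns(3, 3.2)
print(f"L1D load-to-load latency: {l1d_latency_ns:.4f} ns")
```

At peak clock this works out to just under one nanosecond per dependent L1D load.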

Things are quite different once we enter the system level cache (SLC): instead of 8MB, on the M1 Max it’s now 48MB, and it is also a lot more noticeable in the latency graph. While much larger, it’s also evidently slower than the M1’s SLC. The exact figures depend on the access pattern, but even the linear chain access shows that data has to travel a longer distance than on the M1 and the corresponding A-series chips.
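Latency tests of this kind are usually built as a pointer chase, where every load depends on the result of the previous one. The sketch below shows the general idea for a linear and a randomised chain; it is not the test used for the graph, all function names are hypothetical, and in Python the interpreter overhead swamps the hardware latency, so only the structure is meaningful (a real test would be written in C or assembly).

```python
import random
import time


def build_chain(n: int, shuffled: bool, seed: int = 0) -> list[int]:
    """Return a next-index table that forms one cycle over all n slots."""
    order = list(range(n))
    if shuffled:
        random.Random(seed).shuffle(order)  # random visiting order
    chain = [0] * n
    for pos in range(n):
        # Slot order[pos] points at the slot visited next.
        chain[order[pos]] = order[(pos + 1) % n]
    return chain


def chase(chain: list[int], steps: int) -> int:
    """Follow the chain; each lookup depends on the previous one."""
    idx = 0
    for _ in range(steps):
        idx = chain[idx]
    return idx


def ns_per_step(chain: list[int], steps: int) -> float:
    """Average time per dependent lookup, in nanoseconds."""
    start = time.perf_counter_ns()
    chase(chain, steps)
    return (time.perf_counter_ns() - start) / steps


if __name__ == "__main__":
    n = 1 << 16
    for name, shuffled in (("linear", False), ("random", True)):
        chain = build_chain(n, shuffled)
        print(f"{name:>6} chain: {ns_per_step(chain, 4 * n):.1f} ns/step")
```

Sweeping `n` so that the table outgrows each cache level in turn is what produces the steps seen in a latency graph; the linear chain is friendlier to prefetchers than the random one.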

DRAM latency goes up this generation, even though on paper the M1 Max’s memory is faster in terms of frequency and bandwidth. At a comparable 128MB test depth, the new chip is roughly 15ns slower. The larger SLCs, the more complex chip fabric, and possibly worse timings on the new LPDDR5 memory could all contribute to the regression we’re seeing here. In practical terms, because the SLC is so much bigger this generation, workload latencies should still be lower on the M1 Max thanks to higher cache hit rates, so performance shouldn’t regress.
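The hit-rate argument can be illustrated with a simple weighted-average model of latency beyond the L2. This is a sketch, not measured data: the hit rates and absolute latencies below are placeholder values chosen for illustration, and only the roughly 15ns DRAM gap comes from the text.

```python
def effective_latency_ns(slc_hit_rate: float, slc_ns: float, dram_ns: float) -> float:
    """Average latency of an L2 miss that is served by either the SLC or DRAM."""
    return slc_hit_rate * slc_ns + (1.0 - slc_hit_rate) * dram_ns


# Hypothetical inputs (assumptions, not measurements):
#   M1:     smaller SLC, so a lower hit rate, but faster SLC and DRAM.
#   M1 Max: larger SLC, so a higher hit rate, with DRAM about 15ns slower.
m1 = effective_latency_ns(slc_hit_rate=0.30, slc_ns=40.0, dram_ns=100.0)
m1_max = effective_latency_ns(slc_hit_rate=0.60, slc_ns=50.0, dram_ns=115.0)

print(f"M1     (hypothetical): {m1:.1f} ns")
print(f"M1 Max (hypothetical): {m1_max:.1f} ns")
```

With these made-up inputs the larger cache more than offsets the slower DRAM; whether that holds in practice depends entirely on the hit rates a given workload actually achieves.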

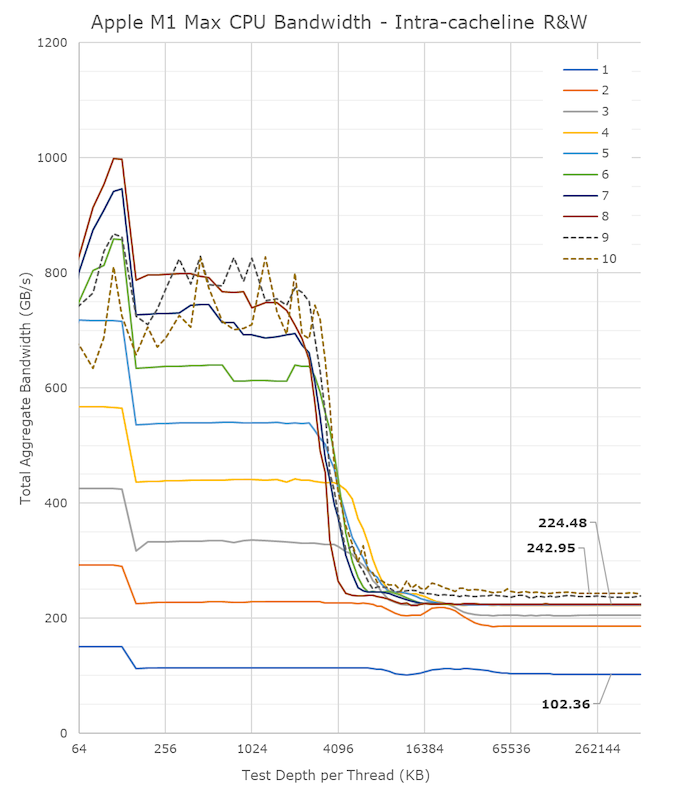

A lot of people in the HPC audience were extremely intrigued to see a chip with such massive bandwidth, not because they care about the GPU or the other offload engines of the SoC, but because of the possibility of the CPUs having access to such immense bandwidth, something that is otherwise only achievable on larger server-class CPUs that cost a multiple of what the new MacBook Pros are sold at. It was also one of the first things I tested: exactly how much bandwidth do the CPU cores have access to?

Unfortunately, the news here isn’t the best-case scenario we had hoped for, as the M1 Max isn’t able to fully saturate the SoC bandwidth from the CPU side alone.

From a single-core perspective, meaning from a single software thread, things are quite impressive: the chip is able to stress the memory fabric at up to 102GB/s. This outperforms any other design in the industry by multiple factors. We had already noted that the M1 was able to fully saturate its memory bandwidth with a single core, and that the bottleneck there was the DRAM itself. On the M1 Max, it seems that we’re hitting the limit of what a core can do, or more precisely, a limit of what the CPU cluster can do.

The little hump between 12MB and 64MB should be the 48MB SLC. The reduction in bandwidth at the 12MB mark signals that the core is somehow limited in bandwidth when evicting cache lines back to the upper memory system. Our test here consists of reading, modifying, and writing back cache lines, with a 1:1 R/W ratio.
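A read-modify-write test with a 1:1 R/W ratio can be sketched as a loop that touches every cache line of a buffer once per pass and counts each line as one read plus one write. This is only an illustration of the access pattern and the traffic accounting, not the actual test: the 128-byte line size and the function names are assumptions, and Python's interpreter overhead means the reported numbers say nothing about hardware limits.

```python
import time
from array import array

CACHE_LINE_BYTES = 128  # assumption: line size is not given in the article
WORD_BYTES = 8          # one unsigned 64-bit word ("Q") per access


def rmw_pass(buf: array) -> int:
    """Read, modify, and write back one word in every cache line.

    Returns the number of cache lines touched.
    """
    step = CACHE_LINE_BYTES // WORD_BYTES
    lines = 0
    for i in range(0, len(buf), step):
        buf[i] = buf[i] + 1  # load and store to the same line: 1:1 R/W
        lines += 1
    return lines


def rmw_bandwidth_gbs(buffer_bytes: int, passes: int = 4) -> float:
    """Time several passes and report read plus write traffic in GB/s."""
    buf = array("Q", bytes(buffer_bytes))  # zero-filled buffer
    start = time.perf_counter()
    lines = sum(rmw_pass(buf) for _ in range(passes))
    elapsed = time.perf_counter() - start
    # Each touched line is assumed to move once in and once out.
    traffic_bytes = 2 * lines * CACHE_LINE_BYTES
    return traffic_bytes / elapsed / 1e9


if __name__ == "__main__":
    for size_mb in (1, 8, 32):
        print(f"{size_mb:>3} MB: {rmw_bandwidth_gbs(size_mb << 20):.3f} GB/s")
```

Sweeping the buffer size past the L2 and SLC capacities is what would expose features like the hump described above on real hardware with a native implementation.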

Going from 1 core/thread to 2, what the system is actually doing is spreading the workload across the two performance clusters of the SoC, so each thread is on its own cluster and has full access to that cluster’s 12MB of L2. The “hump” after 12MB shrinks, now ending earlier at roughly 24MB, which makes sense as the 48MB SLC is now shared between two cores. Bandwidth here increases to 186GB/s.

Adding a third thread creates a bit of an imbalance across the clusters, and DRAM bandwidth goes to 204GB/s. A fourth thread lands us at 224GB/s, and this appears to be the limit of what the CPUs can achieve on the SoC fabric, as adding cores and threads beyond this point does not increase DRAM bandwidth at all. Only when the E-cores, which sit in their own cluster, are added does the bandwidth jump up again, to a maximum of 243GB/s.

While 243GB/s is massive and overshadows any other design in the industry, it’s still quite far from the 409GB/s the chip is capable of. More importantly for the M1 Max, it’s only slightly higher than the 204GB/s limit of the M1 Pro, so it doesn’t appear to make sense to get the Max if one is focused purely on CPU bandwidth.
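The gap between the 400GB/s marketing figure, the 409GB/s theoretical figure, and the measured CPU numbers is easier to see as arithmetic. The sketch below assumes a 512-bit LPDDR5-6400 interface for the M1 Max and a 256-bit one for the M1 Pro; those bus parameters are not stated in this section and are labelled as assumptions in the code. The measured values are the ones quoted above.

```python
def lpddr_peak_gbs(bus_bits: int, mega_transfers_per_s: int) -> float:
    """Theoretical DRAM bandwidth: bytes per transfer times transfers per second."""
    return bus_bits / 8 * mega_transfers_per_s * 1e6 / 1e9


# Assumed memory configurations (not given in this section).
m1_max_peak = lpddr_peak_gbs(bus_bits=512, mega_transfers_per_s=6400)
m1_pro_peak = lpddr_peak_gbs(bus_bits=256, mega_transfers_per_s=6400)

# Measured CPU bandwidth from the text, in GB/s, keyed by thread count.
measured = {1: 102, 2: 186, 3: 204, 4: 224}
cpu_peak_with_e_cores = 243
m1_pro_limit = 204

print(f"M1 Max theoretical peak: {m1_max_peak:.1f} GB/s")
print(f"M1 Pro theoretical peak: {m1_pro_peak:.1f} GB/s")
for threads in sorted(measured):
    share = measured[threads] / m1_max_peak
    print(f"{threads} thread(s): {measured[threads]} GB/s ({share:.0%} of peak)")
print(f"All CPU clusters: {cpu_peak_with_e_cores / m1_max_peak:.0%} of peak")
print(f"Gain over M1 Pro limit: {cpu_peak_with_e_cores / m1_pro_limit - 1:.0%}")
```

Under these assumptions the CPU clusters together reach roughly 59% of the M1 Max's theoretical bandwidth, about 19% more than the M1 Pro's limit.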

That raises the question: why does the M1 Max have such massive bandwidth? The GPU naturally comes to mind; however, in my testing I’ve had extreme trouble finding workloads that stress the GPU enough to take advantage of the available bandwidth. Granted, this is partly an issue of lacking workloads, but in actual 3D rendering and benchmarks I haven’t seen the GPU use more than 90GB/s (measured via system performance counters). While I’m sure there’s some productivity workload out there where the GPU is able to stretch its legs, we haven’t been able to identify one yet.

That leaves everything else on the SoC: the media engines, the NPU, and workloads that simply stress all parts of the chip at the same time. The new media engines on the M1 Pro and Max are now able to decode and encode ProRes RAW formats. The above clip is a 5K 12-bit sample with a bitrate of 1.59Gbps, and the M1 Max is not only able to play it back in real time, it’s able to do so at multiple times the speed, with seamless, immediate seeking. Doing the same thing on my 5900X machine results in single-digit frame rates. SoC DRAM bandwidth while seeking around was about 40-50GB/s. I imagine that workloads that stress the CPU, GPU, and media engines all at the same time would be able to take advantage of the full system memory bandwidth, allowing the M1 Max to stretch its legs and differentiate itself more from the M1 Pro and other systems.

493 Comments

techconc - Monday, October 25, 2021

I guess you missed the section where they showed the massive performance gains for the various content creation applications.

GatesDA - Monday, October 25, 2021

Apple currently has the benefit of an advanced manufacturing process. If it feels like future tech compared to Intel/AMD, that's because it is. The real test will be if it still holds up when x86 chips are on equal footing.

Notably, going from M1 Pro to Max adds more transistors than the 3080 has TOTAL. This wouldn't be feasible without the transistor density of TSMC's N5 process. M1's massive performance CPU cores also benefit from the extra transistor density.

Samsung and Intel getting serious about fabrication means it'll be much harder for future Apple chips to maintain a process advantage. From the current roadmaps they'll actually fall behind, at least for a while.

michael2k - Monday, October 25, 2021

That's a tautology and therefore a fallacy and bad logic:

Apple is only ahead because they're ahead. When they fall behind they will fall behind.

You can deconstruct your fallacy by asking this:

When will Intel get ahead of Apple? The answer is never, at least according to Intel itself:

https://appleinsider.com/articles/21/03/23/now-int...

By the time Intel has surpassed TSMC, it means Intel will need to have many more customers to absorb the costs of surpassing TSMC, because it means Intel's process advantage will be too expensive to maintain without the customer base of TSMC.

kwohlt - Tuesday, October 26, 2021

It's pretty clear that Apple will never go back to x86/64, and that they will be using in-house designed custom silicon for their Macs. Doesn't matter how good AMD or Intel get, Apple's roadmap on that front is set in stone for as far into the future as corporate roadmaps are made.

Intel saying they hope to one day get A and M series manufacturing contracts suggests they're confident about their ability to rival TSMC in a few years, not that they will never be able to reach Apple Silicon perf/watt.

Intel def won't come close to M series in perf/watt until at least 2025 with Royal Core Project, and even then, who knows, still probably not.

daveinpublic - Monday, October 25, 2021

So by your logic, Apple is ahead right now.

Samsung and Intel are behind right now. And could be for a while.

Sunrise089 - Tuesday, October 26, 2021

The Apple chips have perf/watt numbers in some instances 400% better than the Intel competition. Just how much benefit are you expecting a node shrink to provide? Are you seriously suggesting Intel would see a doubling, tripling, or even quadrupling of perf/watt via moving to a smaller node? You are aware node shrink efficiency gains don’t remotely approach that level of improvement be it on Intel or TSMC, aren’t you?

“Samsung and Intel getting serious about fabrication.” What does this even mean? Intel has been the world leader in fabrication investment and technology for decades before recently falling behind. How on earth could you possibly consider them not ‘serious’ about it?

AshlayW - Tuesday, October 26, 2021

Firestorm cores have >2X the transistors as Zen3/Sunny Cove cores in >2X the area on the same process (or slightly less). The cores are designed to be significantly wider making use of the N5 process, and yes, I very much expect at LEAST a doubling of perf/w from N5 CPUs from AMD, since they doubled Ryzen 2000 with 3000, and +25% from 3000 to 5000 on the same N7 node.

kwohlt - Tuesday, October 26, 2021

Ryzen 3000 doubled perf/watt over Ryzen 2000?? Which workloads on which SKUs are you comparing?

dada_dave - Monday, October 25, 2021

So I wonder why Geekbench scores have so far shown M1 Max very far off its expected score relative to the M1 (22K)? I've checked other GPUs in its score range across a variety of APIs (including Metal) and so far they all show the expected scaling (or close enough) between TFLOP and GB score except the M1 Max. Even the 24 core Max is not that far off, it's the 32 core scores that are really far off. They should be in the 70Ks or even high 80Ks for perfect scaling which is achieved by the 16-core Pro GPU, but the 32-core scores are actually in the upper 50Ks/low 60Ks. Do you have any hypotheses as to why that is? Also does the 16" have the high performance mode supposedly coming (or here already)?

Andrei Frumusanu - Monday, October 25, 2021

The GB compute is too short in bursts and the GPU isn't ramping up to peak frequencies. Just ignore it.