ATI's Late Response to G70 - Radeon X1800, X1600 and X1300

by Derek Wilson on October 5, 2005 11:05 AM EST - Posted in GPUs

Mid-Range Performance

The X1600 XT costs much more than the 6600 GT and performs only slightly better in some cases. Its real competition should be something more along the lines of the 6800 GT, which is able to handle more than the new midrange ATI part. At $249 for the X1600 XT compared to $288 for the 6800 GT, the problem with the current pricing is plain.

As we can easily see, the 6800 GT performs quite a bit better than the X1600 XT. From what we see here, the X1600 XT will need to fall well below the $200 mark for it to have real value at these resolutions with the highest settings. The 6600 GT is the clear choice for people who want to run a 1280x1024 LCD panel and play games comfortably with high quality and minimal cost.

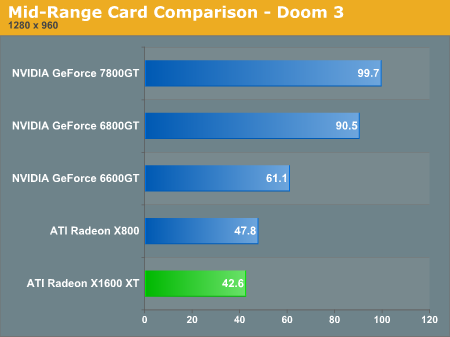

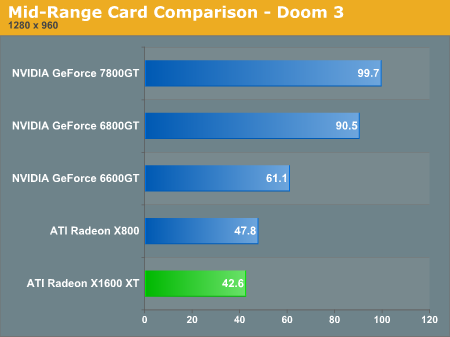

Looking at Doom 3, it's clear that the X1600 XT falls fairly far behind. But once again, when 4xAA and 8xAF are enabled the X1600 performs at the level of the 6600 GT.

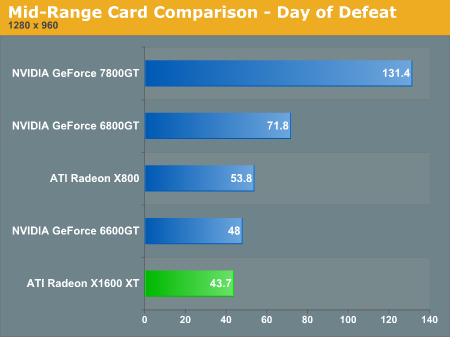

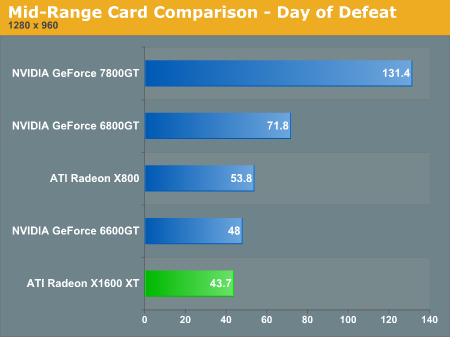

Even though this game is based on the engine that powered Half-Life 2 (and traditionally favored ATI hardware), the X1600 XT isn't able to surpass the 6600 GT in performance. The game isn't playable at 1280x960 with 4xAA and 8xAF enabled, but, for what it is worth, the X1600 XT again scales better than the 6600 GT.

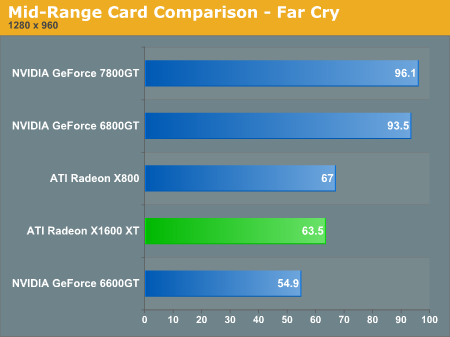

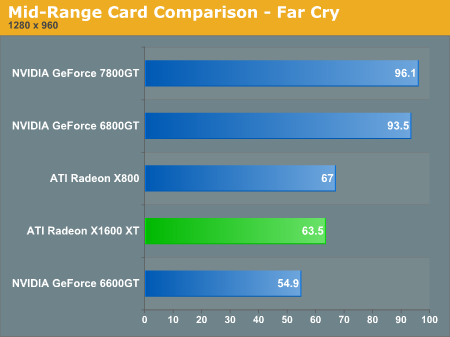

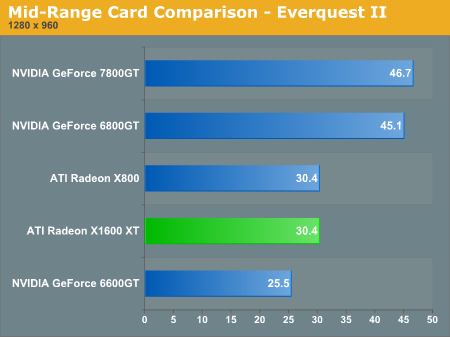

Far Cry and EverQuest II are the only two games that show the X1600 XT performing beyond the 6600 GT at 1280x960 with no AA or AF. Even though these games scale better with AA and AF enabled on ATI's newest hardware, the framerates are not playable (with the exception of Far Cry). We should see a patch from Crytek in the not too distant future that expands HDR and SM3.0 features. We will have to revisit Far Cry performance when we can get our hands on the next patch.

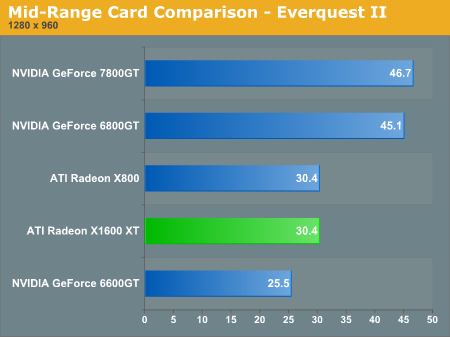

The X1600 performs exactly on par with the X800 in this test. Both of these ATI midrange cards outpace the 6600 GT from NVIDIA, though the 6800 GT is 50% faster than the X1600 XT. Again, cost could become a major factor in the value of these cards.
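To put rough numbers on the value argument, here is a minimal sketch that uses only figures quoted in this article: the $249 and $288 prices and the 6800 GT's 50% lead in this one test. The function and variable names are our own, and treating a single benchmark result as "performance" is an assumption made purely for illustration.

```python
# Illustrative only: the prices and the 50% lead are the figures quoted in
# this article. Normalizing the X1600 XT to 1.0 is an assumption of the sketch.

def cost_per_performance(price_usd: float, relative_performance: float) -> float:
    """Dollars paid per unit of relative performance (lower is better)."""
    return price_usd / relative_performance

X1600_XT_PRICE = 249.0
GT_6800_PRICE = 288.0

# Relative performance in this one test, with the X1600 XT as the baseline.
X1600_XT_PERF = 1.0
GT_6800_PERF = 1.5  # "50% faster than the X1600 XT"

price_premium = (GT_6800_PRICE - X1600_XT_PRICE) / X1600_XT_PRICE
x1600_cost = cost_per_performance(X1600_XT_PRICE, X1600_XT_PERF)
gt6800_cost = cost_per_performance(GT_6800_PRICE, GT_6800_PERF)

print(f"6800 GT price premium: {price_premium:.1%}")
print(f"X1600 XT: ${x1600_cost:.2f} per unit of performance")
print(f"6800 GT:  ${gt6800_cost:.2f} per unit of performance")
```

Under these assumptions the 6800 GT costs roughly 16% more but delivers 50% more performance in this test, which works out to about $192 per unit of performance against $249 for the X1600 XT. A single test is not a full picture, but it shows how far the X1600 XT's price would have to move to compete on value.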

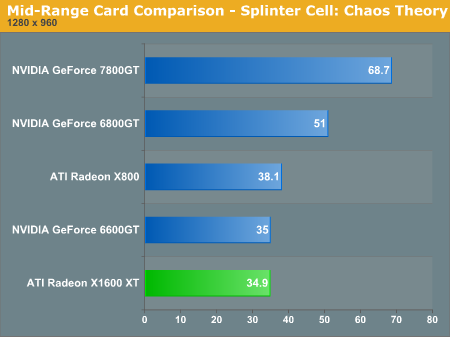

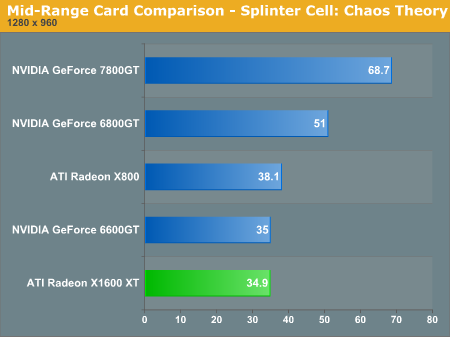

Splinter Cell is a fairly demanding game and the X1600 XT and 6600 GT both perform at the bottom of the heap in this test. Of course, ultra high frame rates are not necessary for this stealth action game, but the game certainly plays more smoothly on the 6800 GT at 51 fps. The 6800 GT also remains playable with AA/AF enabled while the X1600 and 6600 GT do not.

103 Comments

HamburgerBoy - Wednesday, October 5, 2005 - link

Seems kind of odd that you'd include nVidia's best but not ATi's.

cryptonomicon - Wednesday, October 5, 2005 - link

I was expecting ATI to make a comeback here, but the performance is absolutely abysmal in most games. I don't know what else to say except this product is just gonna be sitting on shelves unless the price is cut severely.

bob661 - Thursday, October 6, 2005 - link

LOL! I wouldn't say abysmal. Abysmal would be the X1800XT performing like a 6600GT. The card that doesn't do well is the X1600. X1800's are fantastic performers and certainly much better than my 6600GT at displaying all of a game's glory. It just wasn't the ass kicker most everyone hyped it up to be. But technically speaking, it IS an ass kicker.

flexy - Wednesday, October 5, 2005 - link

i am a bit disappointed - while at work i overflew the other reviews and then, as the crowning end of my day i read the AT review.

I (and probably many others) were waiting for this card like it's the best thing since sliced bread - and now, WAY too late we do *indeed* have a good card - but a card which is a contender to NV's offerings and nothing groundbreaking.

Don't get me wrong - better AF/AA is something i always have a big eye on, but then ATI always had this slight edge when it came to AF/AA.

The pure performance in FPS itself is rather sobering - just what we're used to the last few years...usually we have TWO high-end cards out which are PRETTY MUCH comparable - and no card is really the "sliced bread" thing which shadows all others.

This is kind of sad.

The price also plays a HUGE factor - and amongst the nice AA/AF features i have a hard time justifying spending, say, $500 for "this edge"...especially as someone who already owns a X850XT.

Not as long as i am still playable in HL2/DOD/Lost Coast etc....i don't think i will see FPS fall *that quick* - in other words: I can "afford" to wait longer (R580 ?) and wait for appropriate Game engines (UT2K4 ??) which would make it necessary for me to ditch my X850XT because the X850 got "slow".

D3/OpenGL performance is still disappointing - but then i don't know what NV-specific code D3 uses - but still sad to see this card getting it in the face even if it now has SM3.0 and everything.

Availability:

Well..here we go again....

Bottomline: If i were rich and the card would be orderable RIGHT NOW i would get the XT - no question.

But since i am not rich and the card is *a bit* a disappointment and obviously NOT EVEN AVAILABLE - i will NOT get this card.

It's time to sit back, relax, enjoy my current hardware, watch the prices fall, watch the drivers get better...and then, maybe, one day get one of those or A R580 :)

I WISHED it would NOT have been a day making one "sit and relax" but instead burst out in joy and enthusiasm....but well, then this is real life :)

Wesleyrpg - Thursday, October 6, 2005 - link

summarised very well mate!

Regs - Wednesday, October 5, 2005 - link

They were likely better off trying to market that we didn't need new video cards this year and save their capital for next year. These performance charts, especially the "mid range" parts are awfully embarrassing to their company.

photoguy99 - Wednesday, October 5, 2005 - link

I assume it was not one of the cards that come overclocked stock to 490MHz?

It seems like it would be fair to use a 490MHz NVidia part since manufacturers are selling them at that speed out of the box with full warranty intact.

Evan Lieb - Wednesday, October 5, 2005 - link

"Unless you want image quality."

There is no image quality difference, and I doubt you've used either card. Fact of the matter is that you'll never notice IQ differences in the vast majority of the games today. Hell, it's even hard to notice differences in slower paced games like Splinter Cell. The reality is that speed is and always will be the number one priority, because eye candy doesn't matter if you're bogged down by choppy frame rate.

Right now, there is zero reason to want to purchase these cards, if you can even find them. That's fact. Accept it and move on until something else is released.

Madellga - Thursday, October 6, 2005 - link

Quality also includes playing a game without shimmering. I can't get that on my 7800GTX.

Before anyone replies, the 78.03 drivers improve the problem a lot but do not fix it.

The explanation is inside Derek's article:

"Starting with Area Anisotropic (or high quality AF as it is called in the driver), ATI has finally brought viewing angle independent anisotropic filtering to their hardware. NVIDIA introduced this feature back in the GeForce FX days, but everyone was so caught up in the FX series' abysmal performance that not many paid attention to the fact that the FX series had better quality anisotropic filtering than anything from ATI. Yes, the performance impact was larger, but NVIDIA hardware was differentiating the Euclidean distance calculation sqrt(x^2 + y^2 + z^2) in its anisotropic filtering algorithm. Current methods (NVIDIA stopped doing the quality way) simply differentiate an approximated distance in the form of (ax + by + cz). Math buffs will realize that the differential for this approximated distance simply involves constants while the partials for Euclidean distance are less trivial. Calculating a square root is a complex task, even in hardware, which explains the lower performance of the "quality AF" equation.

Angle dependent anisotropic methods produce fine results in games with flat floors and walls, as these textures are aligned on axes that are correctly filtered. Games that allow a broader freedom of motion (such as flying/space games or top down view games like The Sims) don't benefit any more from anisotropic filtering than trilinear filtering. Rotating a surface with angle dependent anisotropic filtering applied can cause noticeable and distracting flicker or texture aliasing. Thus, angle independent techniques (such as ATI's area aniso) are welcome additions to the playing field. As NVIDIA previously employed a high quality anisotropic algorithm, we hope that the introduction of this anisotropic algorithm from ATI will prompt NVIDIA to include such a feature in future hardware as well."
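Spelled out, the difference the quoted passage describes looks roughly like this. It is a sketch only: the symbols $d$ and $\tilde{d}$ are labels introduced here, $a$, $b$ and $c$ are the unspecified constants from the quote, and the Euclidean partials assume a point away from the origin.

```latex
% Euclidean distance: every partial depends on x, y, z and needs the square root
d = \sqrt{x^2 + y^2 + z^2}, \qquad
\frac{\partial d}{\partial x} = \frac{x}{d}, \quad
\frac{\partial d}{\partial y} = \frac{y}{d}, \quad
\frac{\partial d}{\partial z} = \frac{z}{d}

% Linear approximation: every partial is a constant
\tilde{d} = ax + by + cz, \qquad
\frac{\partial \tilde{d}}{\partial x} = a, \quad
\frac{\partial \tilde{d}}{\partial y} = b, \quad
\frac{\partial \tilde{d}}{\partial z} = c
```

The first set of partials has to be evaluated per sample, which is the cost the quote attributes to the "quality AF" path. The second set is fixed in advance.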

Phantronius - Wednesday, October 5, 2005 - link

Unless you're a fanboy