The Intel SSD 320 Review: 25nm G3 is Finally Here

by Anand Lal Shimpi on March 28, 2011 11:08 AM EST

Random Read/Write Speed

SSD performance has four corners: random read, random write, sequential read and sequential write speed. Random accesses are generally small, while sequential accesses tend to be larger, which is why we run these four Iometer tests in all of our reviews.

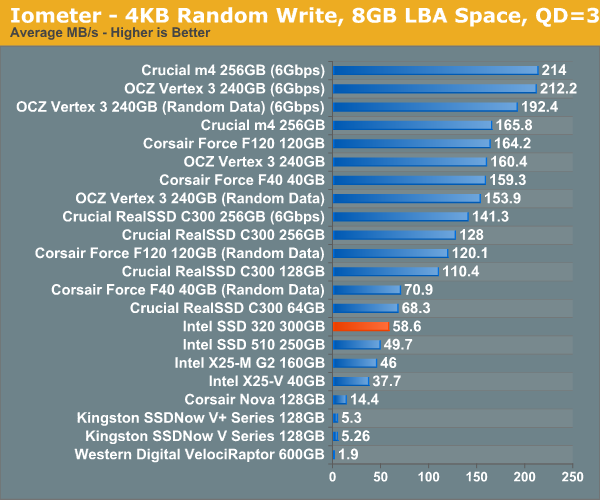

Our first test writes 4KB blocks in a completely random pattern over an 8GB span of the drive to simulate the sort of random access you'd see on an OS drive (even this is more stressful than what a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results are reported as average MB/s over the entire run. We use both standard pseudo-randomly generated data and fully random data for each write to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely fall somewhere between the two values you see for each drive in the graphs. For an understanding of why this matters, read our original SandForce article.
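For readers who want to picture the access pattern, here is a minimal Python sketch of the workload described above. It is not Iometer and not the configuration used to produce these results. The function names, the binary reading of "8GB", and the repeating byte pattern standing in for compressible data are all assumptions made for illustration.

```python
import os
import random

BLOCK_SIZE = 4 * 1024       # 4KB transfers
SPAN_BYTES = 8 * 1024**3    # 8GB test region (binary GB is an assumption)
QUEUE_DEPTH = 3             # three concurrent IOs
TEST_SECONDS = 3 * 60       # 3 minute run


def random_write_offsets(count, span=SPAN_BYTES, block=BLOCK_SIZE, seed=None):
    """Return `count` block-aligned byte offsets chosen uniformly in the span."""
    rng = random.Random(seed)
    blocks_in_span = span // block
    return [rng.randrange(blocks_in_span) * block for _ in range(count)]


def make_payload(fully_random, block=BLOCK_SIZE):
    """Build one write buffer.

    fully_random=True  -> incompressible bytes from os.urandom
    fully_random=False -> a repeating pattern, a hypothetical stand-in
                          for easily compressible data
    """
    if fully_random:
        return os.urandom(block)
    return bytes(range(256)) * (block // 256)


def average_mb_per_s(completed_ios, seconds=TEST_SECONDS, block=BLOCK_SIZE):
    """Average throughput over the whole run, in decimal MB/s."""
    return completed_ios * block / seconds / 1e6
```

As a purely illustrative figure (not a measured result), 1,800,000 completed 4KB writes over the 180 second run would average 40.96 MB/s.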

Random write speed is improved compared to the 510 thanks to Intel's controller, but we're only looking at a marginal improvement compared to the original X25-M G2.

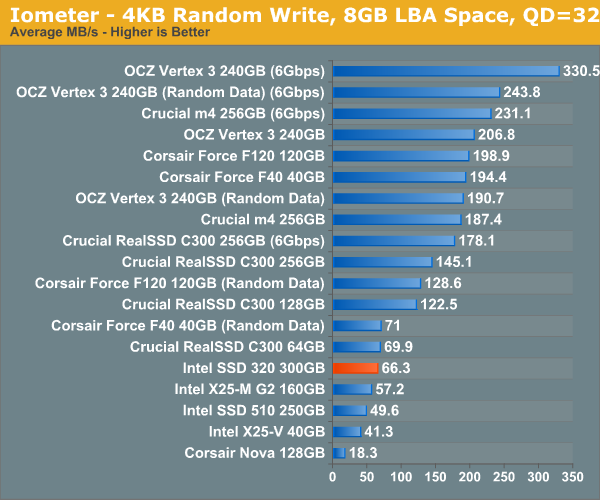

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

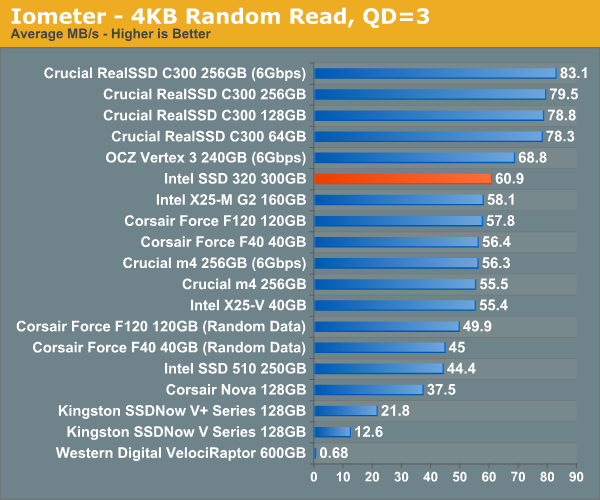

Random read performance has always been a strong point of Intel's controller and the 320 is no different. While we're not quite up to C300 levels, the 320 is definitely competitive here.

Sequential Read/Write Speed

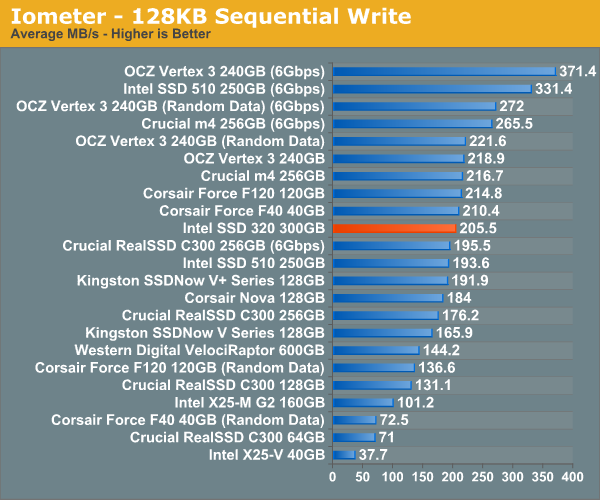

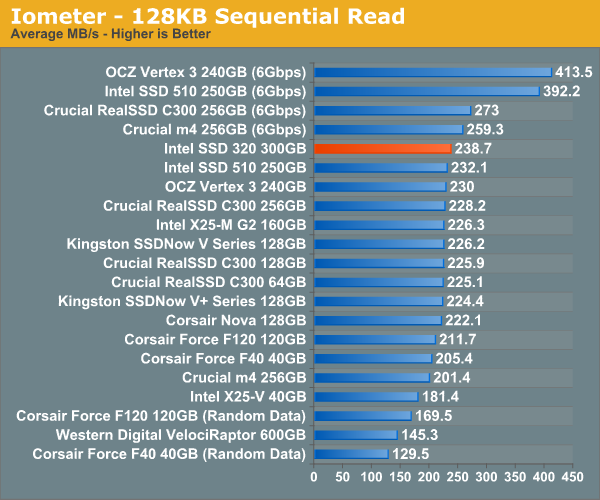

To measure sequential performance I ran a one-minute 128KB sequential test over the entire span of the drive at a queue depth of 1. The results are reported as average MB/s over the entire test length.

Without a 6Gbps interface the 320's performance is severely limited. Compared to other 3Gbps drives the 320 is quite good here though.

Read performance is at the top of the chart for 3Gbps drives. I wonder how far Intel would've been able to push things if the 320 had a 6Gbps controller.
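The 3Gbps ceiling can be put into rough numbers. The sketch below accounts only for the 8b/10b line encoding used by SATA and ignores any protocol overhead above it, so real drives land below these figures. The function name is hypothetical.

```python
def sata_payload_ceiling_mb_s(line_rate_gbps):
    """Upper bound on payload throughput, in decimal MB/s, for a SATA link.

    8b/10b encoding puts 10 bits on the wire for every 8 bits of data.
    Overhead above the encoding layer is ignored (simplifying assumption).
    """
    data_bits_per_s = line_rate_gbps * 1e9 * 8 / 10
    return data_bits_per_s / 8 / 1e6


ceiling_3g = sata_payload_ceiling_mb_s(3)   # 3Gbps link
ceiling_6g = sata_payload_ceiling_mb_s(6)   # 6Gbps link
```

Under that assumption a 3Gbps link tops out at 300 MB/s of payload and a 6Gbps link at 600 MB/s, which is the headroom the paragraph above is wondering about.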

194 Comments

bji - Monday, March 28, 2011 - link

But don't they? I am pretty sure that the listed price for a 160 GB drive is about the same as I paid for my 80 GB G2 a year ago. Maybe these prices are higher than current prices on the older generation drives but you really should compare products at the same point of their life cycle to be fair. The G3 is half the cost that the G2 was at launch (actually less than half if I am remembering correctly) and in a year when they are at a later point in the product life cycle they will be half as expensive or less per GB than the G2 is at the same point of its life cycle.

qwertymac93 - Monday, March 28, 2011 - link

I think we all knew it wouldn't be as fast as The new sandforces, but i didn't expect it to be so expensive. i guess intel figured people will buy it just because it's intel. Not me though, I'm waiting for next gen(<25nm) ssd's before i make the plunge, i want sub $1 per gig.

A5 - Monday, March 28, 2011 - link

Oh well - $170 for 120GB is still pretty good.

AnnonymousCoward - Sunday, April 3, 2011 - link

G2 120GB is $230.

aork - Monday, March 28, 2011 - link

"That works out to be 320GB of NAND for a drive whose rated capacity is 300GB. In Windows you'll see ~279GB of free space, which leaves 12.8% of the total NAND capacity as spare area."

Actually you only see 279 GB of free space in Windows because Windows displays in GiB (2^30 bytes), not true GB (10^9 bytes). In truth, you are only getting the expected 6.25% of the total NAND capacity as spare area.

Stahn Aileron - Monday, March 28, 2011 - link

Flash storage is, as far as I know, always treated as binary multiples, not decimal. SSD drive manufacturers take advantage of the discrepancy between the OS's definition and the HD manufacturers' definition of storage units (GiB - 2^(x*10) - vs GB - 10^(x*3)) to help cover the spare area.

It also helps keep everything consistent within the storage industry. Can you imagine the fallout from having a 300GB SSD actually being 300GiB vs an "identically sized" 300GB HDD reporting only 279GiB? If the consumers don't get pissy about that, I'm positive the HDD manufacturers would.

Mr Perfect - Monday, March 28, 2011 - link

Yeah, disappointing really. The switch to SSDs would have been a golden opportunity for drives to format to what the label says. Oh well.

Taft12 - Monday, March 28, 2011 - link

Marketing would never allow that. We're not going back to binary measures of storage ever again :(

Zan Lynx - Monday, March 28, 2011 - link

And why would we? RAM is the only thing that was ever calculated in powers of 2. Ever.

CPU MHz? 10-base.

Network speed? 10-base.

Hard drive capacity? Always 10-base. Since forever!

Making Flash size in base-2 would introduce a new exception, not restore any wondrous old measurement system.

If the label says 300 GB and the box contains 300,000,000,000 bytes, then it does contain exactly what was advertised. Giga as a prefix has always meant 1 billion. Gigahertz? 1 billion cycles per second. Gigameter? 1 billion meters. 1 gigaflops? 1 billion floating point operations per second.

jwilliams4200 - Monday, March 28, 2011 - link

Well said, Zan. I thought that almost everyone knew this, but it is disappointing to see Anand still using the wrong units in his reviews and tables. Windows reports sizes in GiB, but incorrectly labels it GB. Unfortunately, Anand makes the same mistake. Sad.
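The arithmetic behind the spare-area exchange above is easy to check. This is a minimal sketch with hypothetical helper names; it takes the 320 and 300 figures from the quoted passage and simply evaluates both readings of "320GB of NAND" (decimal versus binary) without asserting which one matches the actual hardware.

```python
GB = 10**9     # decimal gigabyte
GIB = 2**30    # binary gigabyte (gibibyte)


def spare_fraction(nand_bytes, user_bytes):
    """Fraction of the raw NAND that is not exposed as user capacity."""
    return (nand_bytes - user_bytes) / nand_bytes


user_bytes = 300 * GB                 # rated capacity, decimal
shown_in_gib = user_bytes / GIB       # the same capacity expressed in GiB

# Reading 1: the NAND total is 320 decimal GB
spare_if_decimal_nand = spare_fraction(320 * GB, user_bytes)

# Reading 2: the NAND total is 320 binary GiB
spare_if_binary_nand = spare_fraction(320 * GIB, user_bytes)
```

Here `shown_in_gib` comes out at roughly 279.4, matching the ~279 figure in the quote. The decimal reading gives exactly 6.25% spare area, while the binary reading gives about 12.7%, close to the quoted 12.8%.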