NVIDIA Announces Quadro K6000

by Ryan Smith on July 23, 2013 9:00 AM EST

As SIGGRAPH 2013 continues to roll on, today’s major announcements include those from NVIDIA. SIGGRAPH is NVIDIA’s favored show for professional graphics and Quadro product announcements, with NVIDIA using last year’s SIGGRAPH to announce their first Kepler-based Quadro part, the Quadro K5000. This year NVIDIA is back again with a new Quadro part, finally fully fleshing out the Quadro family with the most powerful of the Quadro cards, the Quadro K6000.

In NVIDIA’s product hierarchy, the Quadro 6000-class cards hold the position of NVIDIA’s most powerful products. They’re not just the flagship cards for the Quadro family, but really the flagship for the entire generation of GPUs, possessing the compute functionality of Tesla combined with the graphics functionality of GeForce/Quadro, and powered by what’s typically the single most powerful GPU configuration NVIDIA offers. They’re unabashedly high end – and have a price tag to match – but in many ways they’re the capstone of a generation. It should come as no surprise then that with the Quadro K6000, NVIDIA is looking to launch what will become the king of the Keplers.

| | Quadro K6000 | Quadro K5000 | Quadro 6000 |
|---|---|---|---|
| Stream Processors | 2880 | 1536 | 448 |
| Texture Units | 240 | 128 | 56 |
| ROPs | 48 | 32 | 48 |
| Core Clock | ~900MHz | ~700MHz | 574MHz |
| Shader Clock | N/A | N/A | 1148MHz |
| Memory Clock (effective) | 6GHz GDDR5 | 5.4GHz GDDR5 | 3GHz GDDR5 |
| Memory Bus Width | 384-bit | 256-bit | 384-bit |
| Frame Buffer | 12GB | 4GB | 6GB |
| FP64 | 1/3 FP32 | 1/24 FP32 | 1/2 FP32 |
| Max Power | 225W | 122W | 204W |
| Architecture | Kepler | Kepler | Fermi |
| Transistor Count | 7.1B | 3.5B | 3B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 40nm |

When Quadro K5000 was launched at SIGGRAPH 2012, it was based on NVIDIA’s more graphics-oriented GK104 GPU. A GK110 Quadro was a foregone conclusion, but of course GK110 would not start shipping until the end of 2012, and NVIDIA had large Tesla orders to fill. So it has taken the better part of a year, but NVIDIA is finally shipping a GK110-based Quadro card and it is a doozy.

With Quadro, NVIDIA doesn’t promote the card’s specifications nearly as much as its use cases – in fact they don’t even publish clockspeeds – but based on their performance data it’s easy to back out the necessary information. With a quoted compute performance of 5.2 TFLOPS for single precision (FP32), Quadro K6000 is the first fully enabled GK110 part, shipping with all 15 SMXes up and running. These in turn are clocked at roughly 900MHz, the highest base clockspeed for any GK110 card. Paired with this GPU is a staggering 12GB of VRAM clocked at 6GHz, marking the first NVIDIA product shipping with 4Gb (gigabit) GDDR5 chips. The TDP on all of this? Only 225W.
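The back-of-the-envelope math is simple enough to sketch. The snippet below assumes the usual peak-FLOPS convention of one fused multiply-add (2 FLOPs) per stream processor per clock, and uses the 2880 SPs from the table (15 SMXes × 192); the function names are purely illustrative. It also derives peak memory bandwidth and the number of 4Gb chips needed to reach 12GB.

```python
# Back out Quadro K6000 specs from NVIDIA's quoted numbers.
# Assumption: each Kepler stream processor retires one fused multiply-add
# (counted as 2 FLOPs) per clock, the usual convention for peak FLOPS.

FLOPS_PER_SP_PER_CLOCK = 2  # one FMA = one multiply + one add


def implied_clock_mhz(peak_gflops: float, stream_processors: int) -> float:
    """Clock (MHz) needed to hit peak_gflops with the given stream processor count."""
    return peak_gflops * 1000 / (stream_processors * FLOPS_PER_SP_PER_CLOCK)


def bandwidth_gb_s(effective_clock_ghz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: effective data rate x bus width / 8 bits per byte."""
    return effective_clock_ghz * bus_width_bits / 8


def chip_count(frame_buffer_gb: int, chip_density_gbit: int) -> int:
    """Memory chips needed: convert gigabytes to gigabits, divide by per-chip density."""
    return frame_buffer_gb * 8 // chip_density_gbit


k6000_sps = 15 * 192  # 15 SMXes x 192 stream processors = 2880
print(f"K6000 clock:     ~{implied_clock_mhz(5200, k6000_sps):.0f} MHz")
print(f"K6000 bandwidth:  {bandwidth_gb_s(6.0, 384):.0f} GB/s")
print(f"GDDR5 chips:      {chip_count(12, 4)} x 4Gb")
```

Feeding in the linecard figure of 5196 GFLOPS mentioned in the comments gives roughly 902MHz instead; either way the result lands at "roughly 900MHz."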

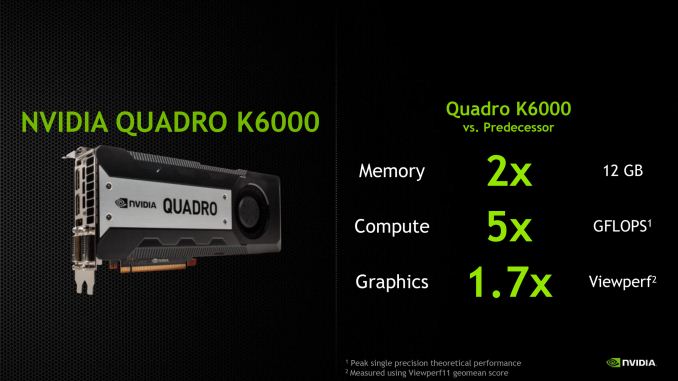

Compared to the outgoing Quadro 6000, it’s interesting to note just where NVIDIA’s performance ambitions have taken them. Besides the obvious doubling in memory, Quadro K6000 offers 1.7x the graphics performance but 5x the compute performance. The compute gains clearly outpace the graphics gains, reflecting the direction NVIDIA has taken with Kepler’s design. The professional graphics market still highly values graphics performance and the Quadro K6000 should have no problem delivering on that, but for mixed use cases the compute gains will be even more remarkable.
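The 5x compute figure can be sanity-checked from the spec table. This sketch assumes 2 FLOPs (one FMA) per stream processor per clock, that Fermi's cores run at the listed shader clock, and the FP64 rates from the table; NVIDIA doesn't state which precision its 5x refers to, but the FP32 ratio appears to line up with it.

```python
# Compare peak compute of Quadro K6000 against the outgoing Quadro 6000.
# Assumptions: 2 FLOPs (one FMA) per stream processor per clock; Fermi's
# cores run at the shader clock; FP64 rates taken from the spec table.

K6000_FP32_GFLOPS = 5200.0  # NVIDIA's quoted single-precision figure


def peak_gflops(stream_processors: int, clock_mhz: float) -> float:
    """Peak FP32 GFLOPS = SPs x 2 FLOPs per clock x clock."""
    return stream_processors * 2 * clock_mhz / 1000


q6000_fp32 = peak_gflops(448, 1148)  # Quadro 6000 at its 1148MHz shader clock
k6000_fp64 = K6000_FP32_GFLOPS / 3   # K6000 runs FP64 at 1/3 the FP32 rate
q6000_fp64 = q6000_fp32 / 2          # Quadro 6000 runs FP64 at 1/2 the FP32 rate

print(f"Quadro 6000 FP32: {q6000_fp32:.0f} GFLOPS")
print(f"FP32 ratio: {K6000_FP32_GFLOPS / q6000_fp32:.2f}x")
print(f"FP64 ratio: {k6000_fp64 / q6000_fp64:.2f}x")
```

By the same arithmetic the double precision gain is smaller, since Quadro 6000 already ran FP64 at half rate while K6000 runs it at one-third rate.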

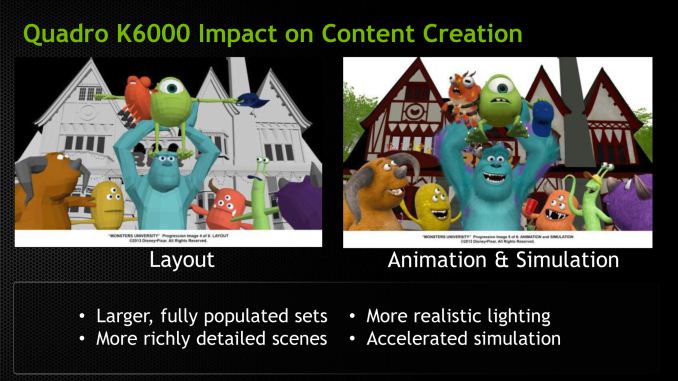

So who and what is NVIDIA pitching the Quadro K6000 at? As with the Quadro 6000 this is primarily (and unabashedly) being pitched at the deep pocketed content creation and product design crowds, who can afford to drop several kilobucks on a single card. In these cases the gains from Quadro K6000 are a mix of greater rendering performance and the greater VRAM. The latter in particular may very well be more important than the former, since in real time workloads the limit is often fitting all of the assets into memory rather than drawing them, due to the very severe performance cliff from spilling over into system memory.
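To put that performance cliff in rough numbers, the sketch below compares K6000's peak VRAM bandwidth with a PCIe 3.0 x16 link, which spilled data would have to cross. The PCIe 3.0 x16 slot and the 9GB scene size are assumptions for illustration, and both bandwidth figures are theoretical peaks; real-world throughput is lower.

```python
# Rough size of the "performance cliff" when assets spill out of VRAM.
# Assumptions: PCIe 3.0 x16 link (8 GT/s per lane, 128b/130b encoding),
# peak figures only; the 9GB scene below is a hypothetical example.


def pcie3_gb_s(lanes: int = 16) -> float:
    """Peak per-direction PCIe 3.0 bandwidth in GB/s."""
    transfers_per_s = 8e9 * lanes
    payload_bits = transfers_per_s * 128 / 130  # strip 128b/130b encoding overhead
    return payload_bits / 8 / 1e9


def fits_in_vram(scene_gb: float, vram_gb: float) -> bool:
    """True if the whole working set can stay resident on the card."""
    return scene_gb <= vram_gb


vram_gb_s = 6.0 * 384 / 8  # K6000: 6GHz effective x 384-bit bus = 288 GB/s
link_gb_s = pcie3_gb_s()
print(f"VRAM {vram_gb_s:.0f} GB/s vs PCIe {link_gb_s:.2f} GB/s "
      f"(~{vram_gb_s / link_gb_s:.0f}x less bandwidth once data spills)")

scene_gb = 9.0  # hypothetical scene size
for card, vram_gb in (("Quadro K5000", 4), ("Quadro K6000", 12)):
    status = "fits" if fits_in_vram(scene_gb, vram_gb) else "spills to system memory"
    print(f"{card} ({vram_gb}GB): {status}")
```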

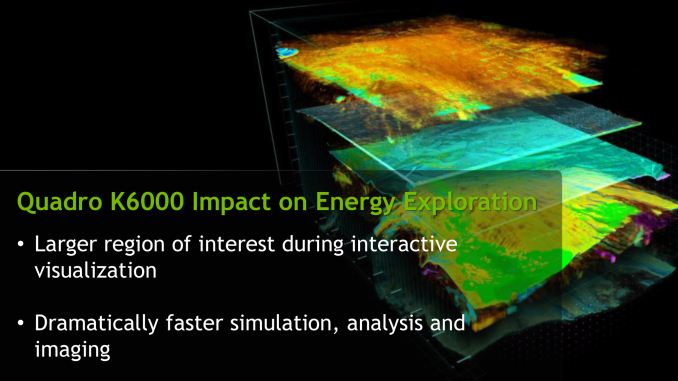

NVIDIA’s other big market for Quadro K6000, as with Tesla K10 and K20, is the oil and gas industry, which uses GPU products both to analyze seismic data and to display it. In this case the more that can be analyzed and the more that can be displayed at once, the more useful the resulting product is.

Moving on, as with Quadro K5000, Quadro K6000 is compatible with NVIDIA’s second generation Maximus technology, allowing for the K6000 to be dedicated to graphics work while compute work is offloaded to a Tesla card. The need for Maximus isn’t quite as great with K6000 as it was with K5000 due to the K6000’s much greater compute performance, but offloading via Maximus still offers benefits in some mixed usage workloads by freeing up each device to work on a specialized workload as opposed to constantly context switching back and forth.

Finally, let’s quickly talk about launch details. As is usually the case for Quadro products, NVIDIA is announcing the Quadro K6000 a couple of months ahead of its ship date, so K6000 is not officially slated to start shipping until sometime in the fall. As always, the cards will be sold both individually and through NVIDIA’s usual OEM partners, including Dell, HP, and Lenovo. NVIDIA has yet to announce a price tag for individual K6000 cards, but based on the price of the previous Quadro 6000 cards we’d expect to see them at around $5000-$7000 each.

22 Comments


lmcd - Tuesday, July 23, 2013

I'd buy the car instead, man.

nathanddrews - Wednesday, July 24, 2013

Cars break down.

mavere - Tuesday, July 23, 2013

Considering the clocks and TDP, I guess Nvidia snatched up all the golden Titan dies for this. Shame. I wouldn't mind a cooler, quieter single GPU behemoth for gaming.

cmikeh2 - Tuesday, July 23, 2013

The only way that is ever going to happen is if people are willing to pay the same price for that consumer card as NVIDIA would make on the Quadro. I find that unlikely.

iMacmatician - Tuesday, July 23, 2013

On NVIDIA's Quadro spec page, there's a link to a Linecard PDF that gives the peak GFLOPS of all the recent Quadro cards, to what looks to be the nearest 1 GFLOPS. The K6000 has 5196 SP GFLOPS (1/3 DP) and the K5000 has 2150 SP GFLOPS, which would give clock speeds of 902 and 700 MHz respectively.

chizow - Wednesday, July 24, 2013

Guess this opens the door for the rumored GTX Titan Ultra, possibly in November to counter AMD's Volcanic Islands. If they have binned parts with all GK110 functional units enabled, they also have fully-enabled ASICs that are too leaky to make it as 225W Tesla/Quadro parts. Fully expect 250W Titan Ultras... $1500, np, all the Titan early adopters will be happy to get owned by Nvidia all over again for 192 more SPs and 6GB more memory.

Also expect to see a few more GK110-based SKUs, like the rumored 2304SP, 320-bit version. Titan and 780 pricing will shuffle based on how AMD competes with its Volcanic Islands GCN 2.0 update on 28nm.

vailr - Saturday, July 27, 2013

Too bad the new (yet-to-be-released) 2013 Mac Pro won't be able to use these. Unless maybe Apple decides to offer an updated "cheese cutter" case Mac Pro (with an updated nVidia GPU) in tandem with their "round cylinder" AMD-only dual GPU Mac Pro.

Gothmoth - Tuesday, July 30, 2013

Let's hope they have better drivers for this card. I have problems with nvidia drivers newer than 314.22.

All drivers newer than 314.22 give me sporadic freezes of 2-4 seconds.

Looking in the event log, I see that "nvlddmkm" was causing a problem every time these freezes happened.

I have just installed a fresh Win7 64-bit because I bought a new SSD, and tried the latest WHQL driver. I immediately noticed that, using IE, the latest 320.49 driver has these "micro-freezes" too.

Going back to 314.22 solved all my problems.

I don't understand how nvidia gets a "WHQL" stamp for these new drivers.

And it's really sad to see this happen.

I never had big problems with nvidia drivers before, and that was a reason I have bought nvidia since the GeForce 256.

Instead of tweaking benchmarks and game performance they should focus on stability!!

Toyist - Thursday, August 1, 2013

Have to disagree. I've had mostly Pro cards, and my son gaming cards, on the same machines. We used to do side-by-side tests. I could render a 3D image in about 1/6 the time he could, but in some games he could get almost double the framerates. I would spend a lot more on my cards. Also, until recently (the last five years or so) the architectures of pro cards and game cards were different - you couldn't get a pro and game version of the "same" card. It used to be that Pro cards were OpenGL and gaming cards were things like DirectX. Game companies would write to DirectX, not OpenGL, and while those games would run on the Pro cards, the Pro cards had only weak support for things like DirectX.

There is also a common misconception about manufacturers "disabling" the same card for pro and game markets. They do, but there are other factors. Binning is a big one (test the same chips, and the "super performers" go in the pro cards and the others in game cards). Also, some of the "disabling" is more like tuning. If I drop a frame or two every second in a game, no big deal. If I drop a frame when rendering a high-budget movie, big issue. Different firmware, very similar but "disabled" drivers.

nonebeforenoneafter - Wednesday, August 7, 2013

It would be very hard for me to imagine buying one of these rather than four liquid-cooled Titan 6GB cards paired with a high-end 4-way SLI mobo and a 1200 watt PSU, for many reasons. Water-cooled Titans run faster than standard Titans. GPU rendering with CUDA/OpenCL across 4 GPUs, or even 3, would be incredible. Games, now and in the future, would be insanely fast. You don't need to see 3D viewports in extreme quality too often; fast enough to view thousands of objects is enough. You should be organizing scenes and polycount anyway. After Effects would gain a lot from 4 Titans.