NVIDIA’s GeForce 800M Lineup for Laptops and Battery Boost

by Jarred Walton on March 12, 2014 12:00 PM EST

New for GTX 800M: Battery Boost

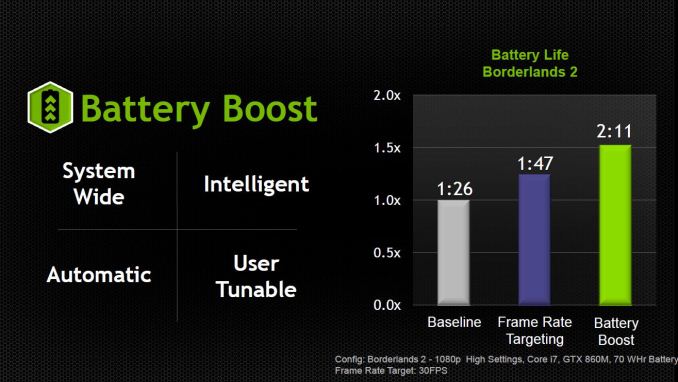

While the core hardware features have not changed in most respects – Maxwell and Kepler are both DX11 parts that implement some but not all of the DX 11.1 features – there is one exception. NVIDIA has apparently modified the hardware in the new GTX 800M chips to support a feature they’re calling Battery Boost. The short summary is that with this new combination of software and hardware features, laptops should be able to provide decent (>30 FPS) gaming performance while delivering 50-100% more battery life during gaming.

This could be really important for laptop gaming, as many people have moved to tablets and smartphones simply because a laptop doesn’t last long enough off AC power to warrant consideration. Battery Boost isn’t going to suddenly solve the problem of a high-end GPU and CPU using a significant amount of power, but instead of one hour (or less) of gaming we could actually be looking at a reasonable 2+ hours. Regardless, NVIDIA is quite excited to see where things go with Battery Boost, and we’ll certainly be testing the first GTX 800M laptops to provide some of our own measurements. Let’s get into some of the details of the implementation.

First, Battery Boost will require the use of NVIDIA’s GeForce Experience (GFE) software. You can see the various settings in the above gallery, though the screenshots are provided by NVIDIA so we have not yet been able to test this. Battery Boost builds on some of the already existing features like game profiles and optimizations, but it adds in some additional tweaks. Each GFE game profile on a laptop with Battery Boost will now have options for plugged in and battery power settings, and along with that setting is the ability to set a target frame rate (with 30 being commonly recommended as a nice balance between smoothness and reducing power use).

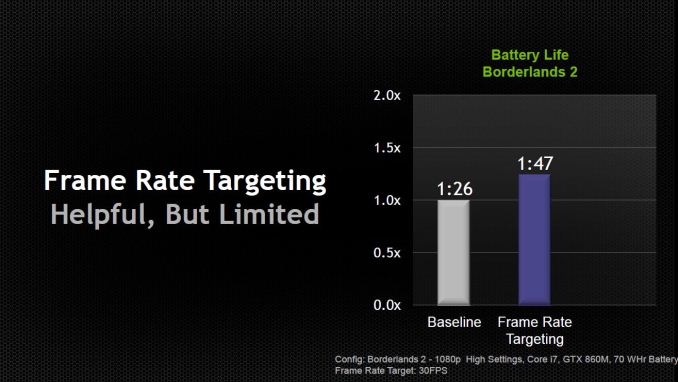

NVIDIA went into quite a bit of detail explaining how Battery Boost is more than simply targeting a lower average frame rate. That’s certainly a large part of the power savings, but it’s more than just capping the frame rate at 30 FPS. NVIDIA provided some information with a test laptop running Borderlands 2 where the baseline measurement was 86 minutes of battery life; turning on frame rate targeting at 30 FPS improved battery life by around 25% to 107 minutes, while Battery Boost is able to further improve on that result by another 22% and deliver 131 minutes of gameplay.
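As a quick check on those figures, the short Python sketch below recomputes the percentages from the three battery life numbers NVIDIA quoted. The helper name `percent_gain` is made up for this illustration; only the minute values come from NVIDIA's Borderlands 2 test.

```python
def percent_gain(before_min: float, after_min: float) -> float:
    """Relative improvement in battery life, as a percentage."""
    return (after_min - before_min) / before_min * 100.0


# Minutes of Borderlands 2 gameplay reported by NVIDIA.
baseline = 86        # no frame rate target
frame_cap = 107      # 30 FPS frame rate target only
battery_boost = 131  # Battery Boost enabled

cap_gain = percent_gain(baseline, frame_cap)         # gain from the cap alone
boost_gain = percent_gain(frame_cap, battery_boost)  # extra gain on top of the cap
total_gain = percent_gain(baseline, battery_boost)   # combined gain over baseline

print(f"{cap_gain:.1f}% {boost_gain:.1f}% {total_gain:.1f}%")
# prints "24.4% 22.4% 52.3%"
```

The combined gain of roughly 52% over the baseline sits at the low end of the 50-100% range NVIDIA is claiming for Battery Boost.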

NVIDIA didn’t reveal all the details of what they’re doing, but they did state that Battery Boost absolutely requires a new 800M GPU; it’s not a purely software-driven solution. It’s an “intelligent” solution that has the drivers monitoring all aspects of the system – CPU, GPU, RAM, etc. – to reduce power draw and reach maximum efficiency. I suspect some of the “secret sauce” comes by way of capping CPU clocks, since most games generally don’t need the CPU running at maximum Turbo Boost to deliver decent frame rates, but what else might be going on is difficult to say. It also sounds as though Battery Boost requires certain features in the laptop firmware to work, which again would limit the feature to new notebooks.

Besides being system wide and intelligent, NVIDIA has two other “talking points” for Battery Boost. It will be automatic – unplug and the Battery Boost settings are enabled; plug in and you switch back to the AC performance mode. That’s easy enough to understand, but there’s a catch: you can’t have a game running and have it switch settings on-the-fly. That’s not really surprising, considering many games require you to exit and restart if you want to change certain settings. Basically, if you’re going to be playing a game while unplugged and you want the benefits of Battery Boost to be active, you’ll need to unplug before starting the game.

As for being user tunable, the above gallery already more or less covers that point – you can customize the settings for each game within GFE. I did comment to NVIDIA that it would be good to add target frame rate to the list of customization options on a per-game basis, as there are some games where you might want a slightly higher frame rate and others where lower frame rates would be perfectly adequate. NVIDIA indicated this would be something they can add at a later date, but for now the target frame rate is a global setting, so you’ll need to manually change it if you want a higher or lower frame rate for a specific game – and understand of course that higher frame rates will generally increase the load on the GPU and thus reduce battery life.
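To make the behavior described in the last two paragraphs concrete, here is a minimal Python sketch of how per-game profiles and the global frame rate target might fit together. It is only a model of the description above, not NVIDIA's implementation or any GFE API: the class names, the `settings_at_launch` function, and the example setting values are all hypothetical, and it assumes the frame rate target applies only on battery.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class GameSettings:
    resolution: str
    quality: str


@dataclass(frozen=True)
class GameProfile:
    """Hypothetical per-game profile with separate AC and battery settings."""
    plugged_in: GameSettings
    on_battery: GameSettings


# The target frame rate is a single global value for now, not per game.
GLOBAL_TARGET_FPS = 30


def settings_at_launch(
    profile: GameProfile, on_ac_power: bool
) -> Tuple[GameSettings, Optional[int]]:
    """Pick the settings once, when the game starts.

    Returns the settings plus the frame rate cap (None means uncapped).
    Assumption: the cap is applied only when running on battery.
    """
    if on_ac_power:
        return profile.plugged_in, None
    return profile.on_battery, GLOBAL_TARGET_FPS


# Illustrative values only; real profiles come from GFE.
profile = GameProfile(
    plugged_in=GameSettings("1920x1080", "high"),
    on_battery=GameSettings("1920x1080", "medium"),
)

# Unplug first, then launch: the battery settings and the 30 FPS cap apply.
active = settings_at_launch(profile, on_ac_power=False)
```

Because the choice is made once at launch, plugging in or unplugging afterwards leaves `active` unchanged until the game is restarted, which mirrors the catch noted above.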

There’s one other aspect to mobile gaming that’s worth a quick note. Most high-end gaming laptops prior to now have throttled the GPU clocks when unplugged. This wasn’t absolutely necessary but was a conscious design decision. In order to maintain higher clocks, the battery and power circuitry would need to be designed to deliver sufficient power, and this often wasn’t considered practical or important. Even with a 90Wh battery, the combination of a GTX-class GPU and a fast CPU could easily drain the battery in under 30 minutes if allowed to run at full performance. So the electrical design power (EDP) of most gaming notebooks until now has capped GPU performance while unplugged, and even then battery life while gaming has typically been less than an hour. Now with Battery Boost, NVIDIA has been working with the laptop OEMs to ensure that the EDP of the battery subsystem will be capable of meeting the needs of the GPU.
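The arithmetic behind those battery figures is simple enough to sketch in a few lines of Python. This is an idealized model that ignores conversion losses and the effect of high discharge rates on usable capacity; the function names are invented for the example, and only the 90Wh capacity and the run times come from the text.

```python
def average_draw_watts(capacity_wh: float, runtime_hours: float) -> float:
    """Average system power draw that empties the battery in the given time."""
    return capacity_wh / runtime_hours


def runtime_minutes(capacity_wh: float, draw_watts: float) -> float:
    """Run time in minutes at a constant power draw."""
    return capacity_wh / draw_watts * 60.0


CAPACITY_WH = 90.0  # the large gaming notebook battery mentioned above

full_speed = average_draw_watts(CAPACITY_WH, 0.5)  # 30 minutes -> 180 W
one_hour = average_draw_watts(CAPACITY_WH, 1.0)    # 60 minutes -> 90 W
two_hours = average_draw_watts(CAPACITY_WH, 2.0)   # 120 minutes -> 45 W

print(full_speed, one_hour, two_hours)
# prints "180.0 90.0 45.0"
```

In other words, hitting the 2+ hour mark on a 90Wh battery means holding the whole system to an average of about 45W or less, which is why capping the frame rate and trimming CPU clocks matter so much.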

Your personal opinion of Battery Boost and whether or not it’s useful will largely depend on what you do with your laptop. Presumably the main reason for getting a laptop instead of a desktop is the ability to be mobile and move around the house or take your PC with you, and Battery Boost should help improve the mobility aspect for gaming. If you rarely or never game while unplugged, it won’t necessarily help, but it won’t hurt either. I suspect many of us simply don’t bother trying to game while unplugged because it drains the battery so quickly, and potentially doubling your mobile gaming time will certainly help in that respect. It’s a “chicken and egg” scenario, where people don’t game on battery because it’s not viable, and there’s not much focus on improving mobile gaming because people don’t play while unplugged. NVIDIA is hoping that by taking the first step toward improving battery life while gaming, they can change what people expect from gaming laptops going forward.

91 Comments

MrSpadge - Wednesday, March 12, 2014 - link

Only if you equate the next gen consoles with the PS4. The XBone (~HD7790) is about matched by GTX750Ti.

rish95 - Wednesday, March 12, 2014 - link

Are you sure? As far as I know, the 750 Ti outperforms the PS4 in Battlefield 4 and the XBone in Titanfall.

jasonelmore - Thursday, March 13, 2014 - link

it plays titanfall on medium settings @1080p 60FPS.. The Xbox One is using similar settings but only rendering at 760p then upscaled. So yes, it's faster if you dismiss variables like CPU and Platform performance.

No real way to compare unless someone pops in a Steamroller cpu with 750 Ti

rish95 - Thursday, March 13, 2014 - link

While that would be nice to see, I don't think that's the point. The OP said the 750 Ti can't make for a viable gaming platform compared to current gen consoles, because it is "slower."

Clearly it can. It's outperforming both consoles with some CPU/platform setups. The 860Ms in these laptops would likely be paired with i5s and i7s, which will yield much better CPU performance than the Steamroller APUs in the consoles.

sheh - Wednesday, March 12, 2014 - link

860M and 860M. That's nice. A new low in obfuscation of actual specs?

schizoide - Wednesday, March 12, 2014 - link

Agreed, I also found that disgusting.

MrSpadge - Wednesday, March 12, 2014 - link

Exactly. Especially since the "860M (Kepler)" is crippled by its memory bus. What's the point of putting such a large, powerful and expensive chip in there when many shaders are deactivated and not even the remaining ones can use their full performance potential. You also can't make a huge chip as energy efficient as a smaller one by disabling parts of it.

GK106 with full shaders, 192 bit bus and moderate clocks would have been more economic and at least as powerful, probably a bit faster depending on game and settings.

Yet regarding power efficiency "860M (Maxwell)" destroys both of these configurations, which makes them rather redundant. Especially since it should be cheaper for nVidia to produce the GM107. Do they have so many GK104 left to throw away?

En1gma - Wednesday, March 12, 2014 - link

> 800M will be a mix of both Kepler and Maxwell parts

and even Fermi: 820m is GF108-based

En1gma - Wednesday, March 12, 2014 - link

or GF117..

r3loaded - Wednesday, March 12, 2014 - link

Why does Nvidia persist with the GT/GTX prefixes? They're largely meaningless as people just go off the 3-digit model number.