LG 34UM67: UltraWide FreeSync Review

by Jarred Walton on March 31, 2015 3:00 PM EST

FreeSync Gaming on the LG 34UM67

The LG 34UM67 is a great example of what LG does right as well as where it falls short. FreeSync is a technology largely geared towards gaming, but LG breaks new ground in ways that can be both good and bad. The UltraWide 21:9 aspect ratio can be a blessing or a curse, depending on the game – even in 2015, there are sadly numerous games where the aspect ratio causes problems. When it works, it can provide a cinematic experience that draws you into the game; when it doesn’t, you get stretched models and a skewed aspect ratio. Sometimes registry or configuration file hacks can fix the problem, but 21:9 is still new enough that it doesn’t see direct support in most games.

My, Lara, you’ve let yourself go….

Tomb Raider with registry hack to fix the aspect ratio (using 2560/1080*10000 = 23703/0x5c97).

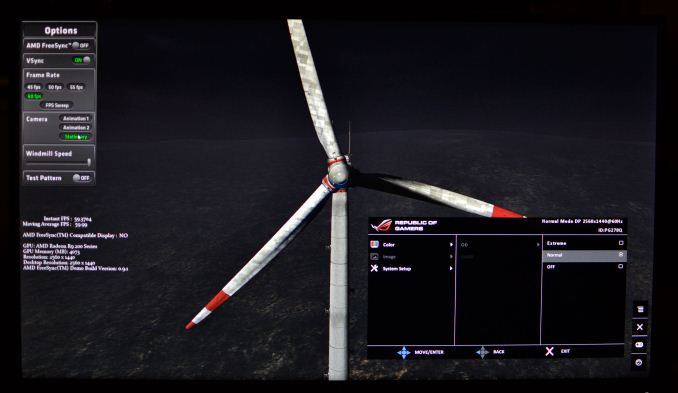
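The arithmetic in the Tomb Raider caption can be reproduced with a short sketch. The function name is hypothetical, and truncating (rather than rounding) is an assumption inferred from the quoted value of 23703; rounding would give 23704. The registry key itself is not named in the caption, so it is not shown here.

```python
def aspect_ratio_value(width: int, height: int, scale: int = 10000) -> int:
    """Return width/height scaled by `scale`, truncated to an integer.

    Truncation is assumed because it matches the value quoted in the caption.
    """
    return width * scale // height


value = aspect_ratio_value(2560, 1080)
print(value, hex(value))  # 23703 0x5c97
```

The same function can be used for other resolutions, for example `aspect_ratio_value(3440, 1440)` for a larger 21:9 panel.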

Similarly, the use of an IPS panel can be good and bad. The good news is that you get the wide viewing angles associated with IPS – and really, for a 34” display you’re going to want them! – but at the same time there’s a question of pixel response times, with most IPS panels rated at 5ms+ compared to TN panels rated at 1-2ms. LG specifies a response time of 14ms for the 34UM67, though they don’t mention whether that’s GtG or Tr/Tf. There’s also a setting in the OSD to improve response times, which we used to capture the following images with a 1/400s shutter speed. In the gallery below, we also compare the LG 34UM67 with the ASUS ROG Swift to show how the two panels handle the same content (from AMD’s FreeSync Demo).

My personal opinion is that LG's 14ms response time value may be incorrect, at least depending on the setting. The ASUS ROG Swift clearly has a faster response time in the above images and gallery, and if we compare best-case ghosting results, the “Normal” setting on the ASUS is very good while even the “High” setting on the LG still shows about two-thirds of the blades ghosting – I had some other images where the ghosting indicates the transition between frames occurs by the time around half of the display has been updated (~8ms). But the windmill in AMD's FreeSync demo is actually something of a best-case scenario if you happen to enable overdrive features. Let's look at what may be less ideal: F1 2014.

LG 34UM67 with Image Response on High

ASUS ROG Swift PG278Q with "Normal" Overdrive

Here the tables turn, with Normal Overdrive on the ASUS display causing some rather obvious artifacts, and if you enable Extreme Overdrive it can be very distracting. The LG display by comparison doesn't show any artifacting from increasing the Response Time setting, and at High it shows much less ghosting than in the windmill demo. I still like the higher refresh rates of the ASUS display, but I also very much prefer the IPS panel in the LG. The long and short of the response time question is that it's going to depend at least in part on the content you're viewing. Personally, I have never been bothered by ghosting on the 34UM67; your mileage may vary.

Perhaps the biggest flaw with the LG 34UM67, however, comes down to the implementation of FreeSync. While FreeSync is in theory capable of supporting refresh rates as low as 9Hz and as high as 240Hz, in practice the display manufacturers need to choose a range where their display will function optimally. All liquid crystal matrices will experience some decay over time, so if you refresh the display too infrequently you can get an undesirable flicker/fade effect in between updates. The maximum refresh rate is less of a concern, but if the pixel response time is too slow then refreshing faster won’t do any good. In the case of the 34UM67 and 29UM67, LG has selected a variable refresh rate range of 48 to 75 Hz. That range can be both too high (on the minimum) and too low (on the maximum).

What that means is that as long as you’re running at 48 FPS to 75 FPS in a game, everything looks nice and smooth. Try to go above that value and you’ll either get some moderately noticeable tearing (VSYNC Off) or else you’ll max out at 75 FPS (VSYNC On), which is also fine. The real issue is when you drop below 48 FPS. You’re basically falling back to standard LCD behavior at that point, so either you have very noticeable tearing with a 75Hz refresh rate (AMD tells us that they drive a display at its max refresh rate when the frame rate drops below the cutoff) or you get stutter/judder from subdividing a sub-48 FPS frame rate into a 75Hz refresh rate. This is definitely an issue you can encounter, and the limited 48-75 Hz FreeSync range is a real concern.
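The three regimes described above can be summarized in a small sketch. This is only a restatement of the behavior as the review describes it, not a model of the actual driver; the function name and the returned labels are hypothetical, and the assumption that the tearing/judder choice below the range follows the VSYNC setting is inferred from the text.

```python
def sync_behavior(fps: float, vsync: bool,
                  vrr_min: float = 48.0, vrr_max: float = 75.0) -> str:
    """Classify what the viewer sees at a given frame rate.

    Defaults are the 48-75 Hz range LG chose for the 34UM67 and 29UM67.
    """
    if fps > vrr_max:
        # Above the range: VSYNC caps the frame rate, otherwise frames tear.
        return "capped at max refresh" if vsync else "tearing"
    if fps >= vrr_min:
        # Inside the range the display refreshes when each frame arrives.
        return "variable refresh"
    # Below the range the panel runs at its fixed maximum refresh rate
    # (assumed here: VSYNC on gives judder, VSYNC off gives tearing).
    return "judder" if vsync else "tearing"


for fps in (30, 60, 90):
    print(fps, sync_behavior(fps, vsync=True), "|", sync_behavior(fps, vsync=False))
```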

Tearing is visible in the center of the windmill.

Some will point at this and lay the blame on AMD’s FreeSync and/or DisplayPort Adaptive-Sync, but really that’s just a standard that allows the GPU and display to refresh at variable rates. The real problem here is the minimum refresh rate chosen by the manufacturer. AMD can still potentially improve the situation with driver tweaks (e.g. sending a frame exactly twice when the GPU falls below the minimum supported refresh rate), but while that should work fine on something like the Acer or BenQ FreeSync displays that support 40-144Hz, the two LG displays (34UM67 and 29UM67) have both the highest minimum and the lowest maximum refresh rate and so it won’t work quite as well. Of course all that is a moot point with the current AMD drivers, which leave you with a choice between tearing or judder at <48 FPS.
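To see why frame repetition helps less on the LG panels, here is a minimal sketch of the idea. It is a hypothetical strategy, not AMD's driver logic: the function name is invented, and it generalizes the "send each frame twice" suggestion to the smallest whole number of repeats that lands inside the panel's range.

```python
import math
from typing import Optional


def frame_multiplier(fps: float, vrr_min: float, vrr_max: float) -> Optional[int]:
    """Smallest integer n with vrr_min <= n * fps <= vrr_max, or None if none fits."""
    if fps <= 0:
        return None
    # Smallest repeat count that lifts the effective refresh rate to vrr_min.
    n = max(1, math.ceil(vrr_min / fps))
    # The repeated rate must still fit under the panel's maximum.
    return n if n * fps <= vrr_max else None


# 39 FPS on a 40-144 Hz panel: each frame shown twice (78 Hz).
print(frame_multiplier(39, 40, 144))  # 2
# 39 FPS on the LG's 48-75 Hz range: 78 Hz exceeds the maximum.
print(frame_multiplier(39, 48, 75))   # None
# 30 FPS on the LG: doubling to 60 Hz fits.
print(frame_multiplier(30, 48, 75))   # 2
```

Under these assumptions, a 48-75 Hz panel has a gap between 37.5 and 48 FPS where no whole number of repeats fits, while a 40-144 Hz panel has no such gap.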

Ultimately, the gaming experience on the LG 34UM67 ends up being both better and worse than what I’ve seen with G-SYNC. It’s better in the sense that IPS is better – I’ve had a real dislike of TN panels for a decade now, for all the usual reasons. I’m not bothered by the response times either, and armed with an AMD Radeon R9 290X there are really not too many occasions where falling below 48 FPS is a problem. We typically look at 2560x1440 Ultra quality settings when comparing high-end GPUs, and the R9 290X is usually able to hit 48 FPS or higher in most recent games. Where it falls short, a drop to Very High or High settings (or disabling 4xMSAA or similar) is usually all you need to do. Now couple that with 25% fewer pixels to render (2560x1080 vs. 2560x1440) and you will typically see frame rates improve by 20% or more compared to WQHD. So if you have an R9 290X (which can be had for as little as $310 these days), I don’t see falling below 48 FPS as a real problem… but going above 75 FPS will certainly happen.
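The pixel arithmetic behind that comparison is easy to check. The sketch below uses hypothetical function names and computes the reduction in pixels along with the frame rate gain you would see if performance scaled perfectly with pixel count. Perfect scaling is an assumption that real games rarely meet, which is consistent with the more conservative "20% or more" figure.

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height


def pixel_reduction(small: int, large: int) -> float:
    """Fraction of pixels saved by rendering `small` instead of `large`."""
    return 1 - small / large


def ideal_speedup(small: int, large: int) -> float:
    """Frame rate gain if performance were purely pixel-bound (an assumption)."""
    return large / small - 1


ultrawide = pixel_count(2560, 1080)  # 2,764,800 pixels
wqhd = pixel_count(2560, 1440)       # 3,686,400 pixels

print(f"{pixel_reduction(ultrawide, wqhd):.0%} fewer pixels")
print(f"{ideal_speedup(ultrawide, wqhd):.0%} ideal frame rate gain")
```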

On lesser hardware the story isn’t quite so rosy, unfortunately. The $240 Radeon R9 285 will mostly require High settings at 2560x1080 in demanding games, and if you have anything slower than that you will frequently not hit the 48-75 FPS sweet spot. Since the primary reason to buy a FreeSync capable display is presumably to avoid tearing and judder (as much as possible), what we’d really need to see is panels that support variable refresh rates from 30-100+ Hz at a minimum. The Acer and BenQ FreeSync displays are closer (40-144 Hz), but the 30-40 FPS range is still going to be a better experience on G-SYNC right now. If AMD can tweak their drivers to understand the minimum refresh rates of FreeSync monitors they might be able to work around some of the issues (e.g. by sending two frames at 78 Hz instead of one frame at 39 Hz), but until/unless that happens there are cases where G-SYNC simply works better.

Of course, G-SYNC displays also carry a price premium, but some of the price difference appears to go towards providing better panels or at least a "better" scaler. Again, this isn’t a flaw with FreeSync so much as an issue with the current generation of hardware scalers and displays. Long-term I expect the situation will improve, but waiting for driver updates is never a fun game to play. Perhaps more importantly however, the FreeSync displays are at worst a better experience on AMD GPUs than the normal fixed refresh rate monitors that have been around for decades. AMD can’t support G-SYNC, so the real choice is going to be whether you want to buy a FreeSync display now or use a “normal” display. The price premium doesn’t appear to be any more than $50, and it might be even lower once the newness fades a bit. Everything else being equal, for AMD GPUs I’d rather have FreeSync than not, which seems like the goal AMD set out to achieve.

96 Comments

willis936 - Wednesday, April 1, 2015 - link

It's worth mentioning that this wouldn't be good test methodology. You'd be at the mercy of how Windows is feeling that day. To test monitor input lag you need to know how long it takes between when a pixel is sent across DisplayPort or whatever to when it is updated on the display. It can be done without "fancy hardware" with a CRT and a high speed camera. Outside of that you'll need to be handling gigabit signals.

willis936 - Wednesday, April 1, 2015 - link

Actually it can still be done with inexpensive hardware. I don't have a lot of experience with how low level you can get on the display drivers. You would need to find one that has the video transmission specs you want and you could dig into the driver to give out debug times when a frame started being sent (I could be making this unnecessarily complicated in my head, there may be easier ways to do it). Then you could do a black and white test pattern with a photodiode to get the response time + input lag, then some other test patterns to try to work out each of the two components (you'd need to know something about pixel decay and things I'm not an expert on). All of the embedded systems I know of are VGA or HDMI though...

Murloc - Wednesday, April 1, 2015 - link

I saw some time ago that some company sold an affordable FPGA development board with video output. Maybe that would work.

Soulwager - Wednesday, April 1, 2015 - link

You can still calibrate with a CRT, but you can get thousands of times more samples than with a high speed camera (with the same amount of effort). USB polling variance is very easy to account for with this much data, so you can pretty easily get ~100 microsecond resolution.

willis936 - Wednesday, April 1, 2015 - link

100 microsecond resolution is definitely good enough for monitor input lag testing. I won't believe you can get that by putting mouse input into a black box until I see it. It's not just Windows. There's a whole lot of things between the mouse and the screen. AnandTech did a decent article on it a few years back: http://www.anandtech.com/show/2803/7

Soulwager - Thursday, April 2, 2015 - link

Games are complicated, but you can make a test program as simple as you want; all you really need to do is go from dark to light when you give an input. And the microcontroller is measuring the timestamps at both ends of the chain, so if there's an inconsistency you haven't accounted for, you'll notice it.

AnnonymousCoward - Friday, April 3, 2015 - link

If Windows adds unpredictable delays, all you need to do is take enough samples and trials and compare averages. That's a cool thing about probability.

Ryan Smith - Wednesday, April 1, 2015 - link

CRTs aren't a real option here unfortunately. You can't mirror a 4K LCD to a CRT, and any additional processing will throw off the calculations.

invinciblegod - Tuesday, March 31, 2015 - link

Having proprietary standards in PC gaming accessories is extremely frustrating. I switch between AMD and nVidia every other generation or so and I would hate for my monitor to be "downgraded" because I bought the wrong graphics card. I guess the only solution here is to pray for nVidia to support Adaptive-Sync so that we can all focus on one standard.

invinciblegod - Tuesday, March 31, 2015 - link

I assume you didn't encounter the supposedly horrible backlight bleed that people seem to complain about on forums. That (and the currently proprietary nature of FreeSync until Intel or nVidia supports it) is preventing me from buying this monitor.