Intel’s Raja Koduri Teases Even Larger Xe GPU Silicon

by Ryan Smith on June 25, 2020 10:00 AM EST - Posted in

- GPUs

- Intel

- Deep Learning

- Xe

- Xe-HP

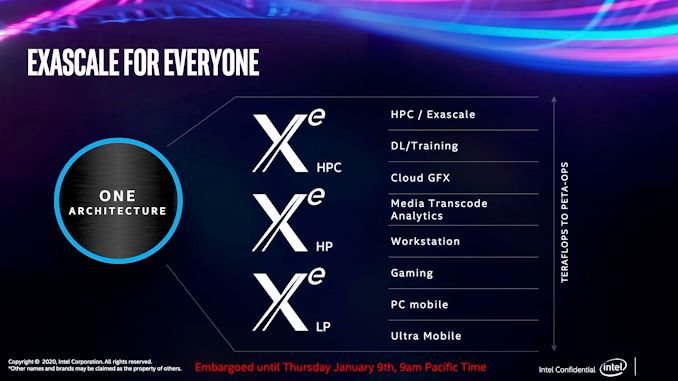

Absent from the discrete GPU space for over 20 years, this year Intel is set to see the first fruits from their labors to re-enter that market. The company has been developing their new Xe family of GPUs for a few years now, and the first products are finally set to arrive in the coming months with the Xe-LP-based DG1 discrete GPU, as well as Tiger Lake’s integrated GPU, kicking off the Xe GPU era for Intel.

But those first Xe-LP products are just the tip of a much larger iceberg. Intending to develop a comprehensive top-to-bottom GPU product stack, Intel is also working on GPUs optimized for the high-power discrete market (Xe-HP), as well as the high-performance computing market (Xe-HPC).

That high end of the market, in turn, is arguably the most important of the three segments for Intel, as well as the riskiest. The server-class GPUs will be responsible for broadening Intel’s lucrative server business beyond CPUs, along with fending off NVIDIA and other GPU/accelerator rivals, who in the last few years have ridden the deep learning wave to booming profits and market shares that increasingly threaten Intel’s traditional market dominance. The risk comes from the high-stakes nature of the hardware: the only things bigger than the profits are the chips, and thus the costs to enter the market. So under the watchful eye of Raja Koduri, Intel’s GPU guru, the company is gearing up to stage a major assault on the GPU space.

That brings us to the matter of this week’s teaser. One of the benefits of being a (relatively) upstart rival in the GPU business is that Intel doesn’t have any current-generation products that they need to protect; without the risk of Osborning themselves, they’re free to talk about their upcoming products even well before they ship. So, as a bit of a savvy social media ham, Koduri has been posting occasional photos of Intel's Xe GPUs, as Intel brings them up in their labs.

BFP - big ‘fabulous’ package😀 pic.twitter.com/e0mwov1Ch1

— Raja Koduri (@Rajaontheedge) June 25, 2020

Today’s teaser from Koduri shows off a tray with three Xe chips of different sizes. While detailed information about the Xe family is still limited, Intel has previously commented that the Xe-HPC-based Ponte Vecchio would be taking a chiplet route for the GPU, using multiple chiplets to build larger and more powerful designs. So while Koduri's tweets don't make it clear what specific GPUs we're looking at – if they're all part of the Xe-HP family or a mix of different families – the photo is an interesting hint that Intel may be looking at a wider use of chiplets, as the larger chip sizes roughly correlate to 1x2 and 2x2 configurations of the smallest chip.

And with presumably multiple chiplets under the hood, the resulting chips are quite sizable. Using the helpful AA battery in the photo for reference, we can see that the smaller packages are around 50mm wide, while the largest package is easily approaching 85mm on a side. (For reference, an Intel desktop CPU package is around 37.5mm x 37.5mm.)

Finally, in a separate tweet, Koduri briefly touches on performance: “And..they let me hold peta ops in my palm(almost:)!” Koduri doesn’t go into any detail about the numeric format involved – an important qualifier when talking about compute throughput on GPUs that can process lower-precision formats at higher rates – but we’ll be generous and assume INT8 operations. INT8 has become a fairly popular format for deep learning inference, as the integer format offers great performance for neural nets that don’t need high precision. NVIDIA’s A100 accelerator, for reference, tops out at 0.624 PetaOPs for regular (dense) INT8 tensor operations, or 1.248 PetaOPs when exploiting sparsity.

And that is the latest on Xe. With the higher-end discrete parts likely not shipping until later in 2021, this probably won't be the last word from Intel and Koduri on their first modern family of discrete GPUs.

Update: A previous version of this article identified the large chip as Ponte Vecchio, Intel's Xe-HPC flagship. We have since come to understand that the silicon we're seeing is likely not Ponte Vecchio, which suggests it is something Xe-HP-based.

Source: Intel/Raja Koduri

37 Comments

mode_13h - Friday, June 26, 2020

And then it died.

extide - Sunday, June 28, 2020

Yeah, basically Intel added AVX-512 to their regular Xeons and made it redundant.

xrror - Friday, June 26, 2020

Anyone else here getting a bad feeling that Raja is going to do a repeat of what he did at AMD? His designs really do seem to be compute first, graphics second.

He got lucky that the cryptocurrency bubble saved his bacon when at AMD.

But in all of the things he keeps exhibiting about Xe at Intel, he really doesn't seem very excited about actual graphics performance - it's all about future AI scaling and compute.

And now he's showing these huge packages that I honestly don't see ever being on any affordable video card. They are technically impressive, but do you seriously see these being mass-produced for $300 video cards?

Lastly, I fear that if he keeps taking longer and longer, the whole thing will languish like what happened to Larrabee/Phi.

Oxford Guy - Saturday, June 27, 2020

Well, let's look at the state of Intel's business.

1. Can't compete with AMD in terms of the "low-end" desktop enthusiast platform due to the 10nm flop and the lack of a good post-Skylake architecture.

2. Is getting lots of sales from enterprise thanks to COVID.

Which would you target right now if you were Intel?

mode_13h - Saturday, June 27, 2020

I don't think crypto saved his bacon. I think when Vega launched late and wasn't competitive in either graphics or AI, the writing was on the wall.

stadisticado - Saturday, June 27, 2020

The products in this article are for HPC/AI use cases. The closest these will get to being used by consumers is if you subscribe to Stadia or a similar type of service. Intel hasn't really talked specifically about client GPUs as yet.

TheJian - Thursday, July 2, 2020

"Absent from the discrete GPU space for over 20 years, this year Intel is set to see the first fruits from their labors to re-enter that market." Uh, Larrabee. It was supposed to kill Nvidia and AMD, but failed to even convince people because you had to learn to program all over again (which is almost impossible to get programmers to do). You act like that was NOT an attempt at a GPU. It is still alive in many formats, it just couldn't convince game devs it was worth the time. But don't act like they didn't attempt a comeback that already failed in gaming.

It still remains to be seen whether they have a driver team that can keep up with either of the other giants, not to mention that Apple is coming with what I suspect is a better driver team than Intel's (I'd bet on that) and ages of GPU experience in mobile gaming.

Look at Win10. They gave it away for years (still a loophole today), and it still sucks, people still hate it, and given a choice would leave in seconds. Most stuck with it simply don't know how to change to something else. So you buy a Win10 PC (like many of my parents' friends, all retired of course), and end up ONLY using your phone to communicate with the world...ROFL. Windows 10 is killing PC users. It doesn't help that every patch breaks at least 4 versions of the OS...ROFL. To me, Larrabee was the hardware version of that. The difference is, you don't have 2 other options if you drop Windows (well, not ones that will be painless), whereas the vid card had no chance to win vs. regular NV/AMD stuff that required no new learning. Windows 10 is just lucky Linux still sucks in gaming and most apps vs. Windows (usually 1 choice vs. 10 on Windows).

If I was NV I'd be pouring millions into Linux/Android gaming and moving to my own PC system sans WINTEL completely. Apple is about to do it, sans everyone else. If they pull an EPIC-like deal, giving away games for 5 years or something (they can afford 10-20 billion to dominate...LOL), don't act like it wouldn't attract millions of users and a huge base. If the phones are 5nm, even they will be decent gaming devices with Apple aiming at the GPU. Apple TV just got upgraded, and I'd bet on a new version yearly to advance you to death (how will MSFT/Sony respond to yearly hardware updates?). There are a number of ways to attack this with Apple's cash: cheap trade-ins for a few gens to get the whole base up to a certain level after leaving them behind yearly to kill everyone else, free games, etc., or a combo of tricks. Epic got 60m+ users in short order and they are broke compared to APPLE's level of shenanigans.

Please re-release Win7 or XP and put DX12 or Vulkan in (heck, Vulkan already works on XP). Don't tell me you can't run it there...BS. Vulkan runs on pretty much anything, so does DX12 REALLY need Win10...NOPE. If you still can't admit the obvious, OK, kill DX12, go Vulkan and make a better OS made for DESKTOPS only. We are sick of your mobile OS called Win10. People are still waiting for a patch for the current patch that breaks all current versions (4? almost all? whatever, Win10 sucks, EVERY version). We have been beta testers for years on this crap OS. I digress... Apple might win our family (all homes) if Win11 doesn't come soon and is radically OLDer, with at most 1 service pack every year or two and no new features for 3 years. Then it won't be broken for its whole life, because you actually have time to fix crap.