The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome, e.g. what to do when the frame rate is too low, or too high; getting a panel that can handle adaptive refresh rates; supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

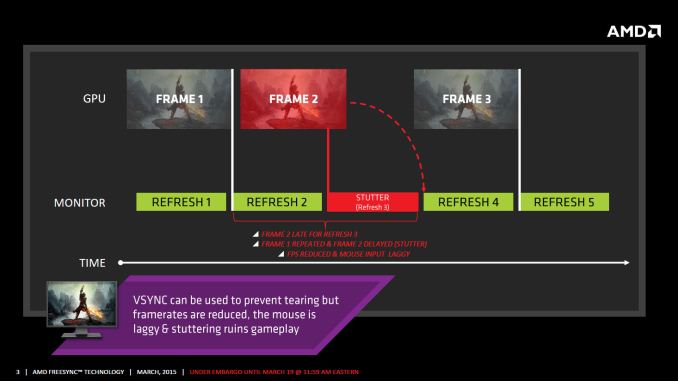

The impetus behind adaptive refresh is to overcome visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that’s fine for normal applications, when it comes to games there are often cases where a new frame isn’t ready in time, causing a stall or stutter in rendering. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing – where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames in some cases).
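To make the timing concrete, here is a minimal Python sketch of when frames reach the screen on a fixed 60Hz display with v-sync versus an adaptive refresh display. It is an illustration only, not how any driver or scaler actually works: the function names and the 22ms frame time are made up for the example, the model assumes the GPU keeps rendering while a finished frame waits, and it ignores tearing as well as the panel's minimum and maximum refresh rates.

```python
import math

REFRESH_HZ = 60.0
REFRESH_INTERVAL_MS = 1000.0 / REFRESH_HZ  # about 16.67 ms between refreshes


def vsync_display_times(frame_ready_ms, interval_ms=REFRESH_INTERVAL_MS):
    """Fixed refresh with v-sync: a finished frame waits for the next refresh tick."""
    return [math.ceil(t / interval_ms) * interval_ms for t in frame_ready_ms]


def adaptive_display_times(frame_ready_ms):
    """Adaptive refresh (simplified): the display refreshes when the frame is ready."""
    return list(frame_ready_ms)


def gaps(times):
    """Time between consecutive frames appearing on screen."""
    return [later - earlier for earlier, later in zip(times, times[1:])]


# Hypothetical workload: the GPU finishes a frame every 22 ms (about 45 FPS).
frame_ready_ms = [22.0 * i for i in range(1, 8)]

if __name__ == "__main__":
    vsync_gaps = gaps(vsync_display_times(frame_ready_ms))
    adaptive_gaps = gaps(adaptive_display_times(frame_ready_ms))
    print("v-sync gaps (ms):  ", [round(g, 2) for g in vsync_gaps])
    print("adaptive gaps (ms):", [round(g, 2) for g in adaptive_gaps])
```

In this toy model the GPU delivers frames at a perfectly steady pace, yet with v-sync the on-screen gaps alternate between roughly 16.7ms and 33.3ms, which is the stutter described above. With adaptive refresh every frame is shown 22ms after the previous one.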

Neither input lag/stutter nor image tearing is desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, so that rules out a large chunk of the market. Not surprisingly, the result was that G-SYNC took a bit of time to reach the market as a mature solution, with the first displays that supported the feature requiring modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher spec displays like the 1440p144 ASUS ROG Swift cost $759 compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500 whereas the 4Kp60 Acer XB280HK will set you back $750.

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these costs come in the way of using higher quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there’s no significant price increase. But if you compare a FreeSync 1440p144 display to a “normal” 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let’s look at what’s officially announced right now before we continue.

350 Comments


barleyguy - Thursday, March 19, 2015 - link

I was already shopping for a 21:9 monitor for my home office. I'm now planning to order a 29UM67 as soon as I see one in stock. The GPU in that machine is an R7/260X, which is on the compatible list. :-)

boozed - Thursday, March 19, 2015 - link

"the proof is in the eating of the pudding"

Thank you for getting this expression right!

Oh, and Freesync looks cool too.

D. Lister - Thursday, March 19, 2015 - link

I have had my reservations with claims made by AMD these days, and my opinion of 'FreeSync' wasn't quite in contrast. If this actually works at least just as well as G-Sync (as claimed by this rather brief review) with various hardware/software setups then it is indeed a praiseworthy development. I personally would certainly be glad that the rivalry of two tech giants resulted (even if only inadvertently) in something that benefits the consumer.

cmdrdredd - Thursday, March 19, 2015 - link

I love the arguments about "freesync is an open standard" when it doesn't matter. 80% of the market is Nvidia and won't be using it. Intel is a non-issue because not many people are playing games that benefit from adaptive v-sync. Think about it, either way you're stuck. If you buy a GSync monitor now you likely will upgrade your GPU before the monitor goes out. So your options are only Nvidia. If you buy a freesync monitor your options are only AMD. So everyone arguing against gsync because you're stuck with Nvidia, have fun being stuck with AMD the other way around.

Best to not even worry about either of these unless you absolutely do not see yourself changing GPU manufacturers for the life of the display.

barleyguy - Friday, March 20, 2015 - link

NVidia is 71% of the AIB market, as of the latest released numbers from Hexus. That doesn't include AMD's APUs, which also support Freesync and are often used by "midrange" gamers.

The relevance of being an open standard though, is that monitor manufacturers can add it with almost zero extra cost. If it's built into nearly every monitor in a couple of years, then NVidia might have a reason to start supporting it.

tsk2k - Thursday, March 19, 2015 - link

@Jarred Walton

You disappoint me.

What you said about G-sync below minimum refresh rate is not correct, also there seems to be issues with ghosting on freesync. I encourage everyone to go to PCper(dot)com and read a much more in-depth article on the subject.

Get rekt anandtech.

JarredWalton - Friday, March 20, 2015 - link

If you're running a game and falling below the minimum refresh rate, you're using settings that are too demanding for your GPU. I've spent quite a few hours playing games on the LG 34UM67 today just to see if I could see/feel issues below 48 FPS. I can't say that I did, though I also wasn't running settings that dropped below 30 FPS. Maybe I'm just getting too old, but if the only way to quantify the difference is with expensive equipment, perhaps we're focusing too much on the theoretical rather than the practical.

Now, there will undoubtedly be some that say they really see/feel the difference, and maybe they do. There will be plenty of others where it doesn't matter one way or the other. But if you've got an R9 290X and you're looking at the LG 34UM67, I see no reason not to go that route. Of course you need to be okay with a lower resolution and a more limited range for VRR, and be willing to go with a slower response time IPS (AHVA) panel rather than dealing with TN problems. Many people are.

What's crazy to me is all the armchair experts reading our review and the PCPer review and somehow coming out with one or the other of us being "wrong". I had limited time with the FreeSync display, but even so there was nothing I encountered that caused me any serious concern. Are there cases where FreeSync doesn't work right? Yes. The same applies to G-SYNC. (For instance, at 31 FPS on a G-SYNC display, you won't get frame doubling but you will see some flicker in my experience. So that 30-40 FPS range is a problem for G-SYNC as well as FreeSync.)

I guess it's all a matter of perspective. Is FreeSync identical to G-SYNC? No, and we shouldn't expect it to be. The question is how much the differences matter. Remember the anisotropic filtering wars of last decade where AMD and NVIDIA were making different optimizations? Some were perhaps provably better, but in practice most gamers didn't really care. It was all just used as flame bait and marketing fluff.

I would agree that right now you can make the case that G-SYNC is provably better than FreeSync in some situations, but then both are provably better than static refresh rates. It's the edge cases where NVIDIA wins (specifically, when frame rates fall below the minimum VRR rate), but when that happens you're already "doing it wrong". Seriously, if I play a game and it starts to stutter, I drop the quality settings a notch. I would wager most gamers do the same. When we're running benchmarks and comparing performance, it's all well and good to say GPU 1 is better than GPU 2, but in practice people use settings that provide a good experience.

Example:

Assassin's Creed: Unity runs somewhat poorly on AMD GPUs. Running at Ultra settings or even Very High in my experience is asking for problems, no matter if you have a FreeSync display or not. Stick with High and you'll be a lot happier, and in the middle of a gaming session I doubt anyone will really care about the slight drop in visual fidelity. With an R9 290X running at 2560x1080 High, ACU typically runs at 50-75FPS on the LG 34UM67; with a GTX 970, it would run faster and be "better". But unless you have both GPUs and for some reason you like swapping between them, it's all academic: you'll find settings that work and play the game, or you'll switch to a different game.

Bottom Line: AMD users can either go with FreeSync or not; they have no other choice. NVIDIA users likewise can go with G-SYNC or not. Both provide a smoother gaming experience than 60Hz displays, absolutely... but with a 120/144Hz panel only the high speed cameras and eagle eyed youth will really notice the difference. :-)

chizow - Friday, March 20, 2015 - link

Haha love it, still feisty I see even in your "old age" there Jarred. I think all the armchair experts want is for you and AT to use your forum on the internet to actually do the kind of testing and comparisons that matter for the products being discussed, not just provide another Engadget-like experience of superficial touchy-feely review, dismissing anything actually relevant to this tech and market as not being discernable to someone "your age".

JarredWalton - Friday, March 20, 2015 - link

It's easy to point out flaws in testing; it's a lot harder to get the hardware necessary to properly test things like input latency. AnandTech doesn't have a central location, so I basically test with what I have. Things I don't have include gadgets to measure refresh rate in a reliable fashion, high speed cameras, etc. Another thing that was lacking: time. I received the display on March 17, in the afternoon; sometimes you just do what you can in the time you're given.

You however are making blanket statements that are pro-NVIDIA/anti-AMD, just as you always do. The only person that takes your comments seriously is you, and perhaps other NVIDIA zealots. Mind you, I prefer my NVIDIA GPUs to my AMD GPUs for a variety of reasons, but I appreciate competition and in this case no one is going to convince me that the closed ecosystem of G-SYNC is the best way to do things long-term. Short-term it was the way to be first, but now there's an open DisplayPort standard (albeit an optional one) and NVIDIA really should do everyone a favor and show that they can support both.

If NVIDIA feels G-SYNC is ultimately the best way to do things, fine -- support both and let the hardware enthusiasts decide which they actually want to use. With only seven G-SYNC displays there's not a lot of choice right now, and if most future DP1.2a and above displays use scalers that support Adaptive Sync it would be stupid not to at least have an alternate mode.

But if the only real problem with FreeSync is when you fall below the minimum refresh rate you get judder/tearing, that's not a show stopper. As I said above, if that happens to me I'm already changing my settings. (I do the same with G-SYNC incidentally: my goal is 45+ FPS, as below 40 doesn't really feel smooth to me. YMMV.)

Soulwager - Saturday, March 21, 2015 - link

You can test absolute input latency to sub millisecond precision with ~50 bucks worth of hobby electronics, free software, and some time to play with it. For example, an arduino micro, a photoresistor, a second resistor to make a divider, a breadboard, and a usb cable. Set the arduino up to emulate a mouse, and record the difference in timing between a mouse input and the corresponding change in light intensity. Let it log a couple minutes of press/release cycles, subtract 1ms of variance for USB polling, and there you go, full chain latency. If you have access to a CRT, you can get a precise baseline as well.

As for sub-VRR behavior, if you leave v-sync on, does the framerate drop directly to 20fps, or is AMD using triple buffering?