Hidden Secrets: Investigation Shows That NVIDIA GPUs Implement Tile Based Rasterization for Greater Efficiency

by Ryan Smith on August 1, 2016 5:00 AM EST

As someone who analyzes GPUs for a living, one of the more vexing things in my life has been NVIDIA’s Maxwell architecture. The company’s 28nm refresh offered a huge performance-per-watt increase for only a modest die size increase, essentially allowing NVIDIA to offer a full generation’s performance improvement without a corresponding manufacturing improvement. We’ve had architectural updates on the same node before, but never anything quite like Maxwell.

The vexing aspect to me has been that while NVIDIA shared some details about how they improved Maxwell’s efficiency over Kepler, they have never disclosed all of the major improvements under the hood. We know, for example, that Maxwell implemented a significantly altered SM structure that was easier to reach peak utilization on, and thanks to its partitioning wasted much less power on interconnects. We also know that NVIDIA significantly increased the L2 cache size and did a number of low-level (transistor level) optimizations to the design. But NVIDIA has also held back information – the technical advantages that are their secret sauce – so I’ve never had a complete picture of how Maxwell compares to Kepler.

For a while now, a number of people have suspected that one of the ingredients of that secret sauce was that NVIDIA had applied some mobile power efficiency technologies to Maxwell. It was, after all, their original mobile-first GPU architecture, and now we have some data to back that up. Friend of AnandTech and all around tech guru David Kanter of Real World Tech has gone digging through Maxwell/Pascal, and in an article & video published this morning, he outlines how he has uncovered very convincing evidence that NVIDIA implemented a tile based rendering system with Maxwell.

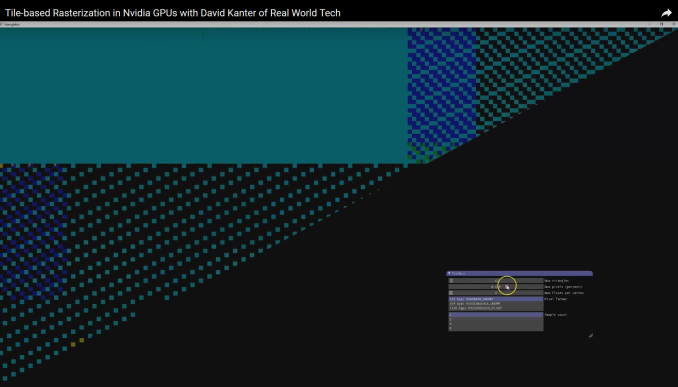

In short, by playing around with some DirectX code specifically designed to look at triangle rasterization, he has come up with some solid evidence that NVIDIA’s handling of tringles has significantly changed since Kepler, and that their current method of triangle handling is consistent with a tile based renderer.

NVIDIA Maxwell Architecture Rasterization Tiling Pattern (Image Courtesy: Real World Tech)

Tile based rendering is something we’ve seen for some time in the mobile space, with both Imagination PowerVR and ARM Mali implementing it. The significance of tiling is that by splitting a scene up into tiles, tiles can be rasterized piece by piece by the GPU almost entirely on die, as opposed to the more memory (and power) intensive process of rasterizing the entire frame at once via immediate mode rendering. The trade-off with tiling, and why it’s a bit surprising to see it here, is that the PC legacy is immediate mode rendering, and this is still how most applications expect PC GPUs to work. So to implement tile based rasterization on Maxwell means that NVIDIA has found a practical means to overcome the drawbacks of the method and the potential compatibility issues.

In any case, Real Word Tech’s article goes into greater detail about what’s going on, so I won’t spoil it further. But with this information in hand, we now have a more complete picture of how Maxwell (and Pascal) work, and consequently how NVIDIA was able to improve over Kepler by so much. Finally, at this point in time Real World Tech believes that NVIDIA is the only PC GPU manufacturer to use tile based rasterization, which also helps to explain some of NVIDIA’s current advantages over Intel’s and AMD’s GPU architectures, and gives us an idea of what we may see them do in the future.

Source: Real World Tech

191 Comments

View All Comments

jjj - Monday, August 1, 2016 - link

Makes the ARM Mali presentation at Hot Chips even more interesting as mobile and PC GPUs become more and more alike.Michael Bay - Monday, August 1, 2016 - link

I thought nV went explicit about mobile-centric design right around Maxwell and cited it as a reason for power efficiency increase? Kind of like intel did a while earlier.telemarker - Monday, August 1, 2016 - link

Link to how this is done on Mali (hotchips last year)https://youtu.be/HAlbAj-iVbE?t=1h5m

Alexvrb - Monday, August 1, 2016 - link

PowerVR was doing it before it was cool. :D On mobile and desktop - had a Kyro and a Kyro II back in the day when they were kings of efficiency on a dime.Strunf - Tuesday, August 2, 2016 - link

Yes, but as far as I remember they had some problems with overlapping objects, like wheels not showing up properly and so on. Probably fixable with drivers but I think they gave up on the desktop market within 1 or 2 years after their comeback.Scali - Tuesday, August 2, 2016 - link

Those weren't hardware or driver problems, as mentioned elsewhere.They were poorly designed software, which assumed that the zbuffer was 'just there', which is not the case on PowerVR hardware. PowerVR acts the same as a device with a full hardware buffer, between rendering passes. However, if you don't mark your rendering passes properly in your code, then you get undefined results.

TessellatedGuy - Monday, August 1, 2016 - link

This is why nvidia will always be miles ahead of amd in perf/watt and architectureRemon - Monday, August 1, 2016 - link

Yes, the whole 2 years they've been more efficient than AMD will dictate the future...milli - Monday, August 1, 2016 - link

I was thinking exactly the same.People seem to forget that it was ATI that was ahead in performance and market share up until 10 years ago and it's just the past two years that nVidia has taken a serious lead in market share and perf/watt. Things change, especially in markets like these.

close - Monday, August 1, 2016 - link

His name is TessellatedGuy and we all know AMD doesn't do tessellation that well. This means that using AMD might make him look like crap... or something :).