A Thought on Silicon Design: Intel’s LCC on HEDT Should Be Dead

by Ian Cutress on June 1, 2018 9:00 AM EST

In the past couple of weeks, we have been re-testing and re-analysing our second generation Ryzen 2000-series review. The extra time spent writing, and looking at the results and the state of the market, led me to some interesting thoughts about how the competitive landscape is set to look over the next 12-18 months.

Based on our Ryzen 2000-series review, it was clear that Intel’s 8-core Skylake-X product is not up to the task. The Core i7-7820X wins in memory bandwidth limited tests because it is quad channel against the dual channel competition, but it falls behind in almost every other test, and in benchmarks where the results are equal it costs almost double what the other chips do. It also only has 28 PCIe lanes, rather than the 40 that this chip used to have two generations ago, or the 60 that AMD puts on its HEDT Threadripper processors.

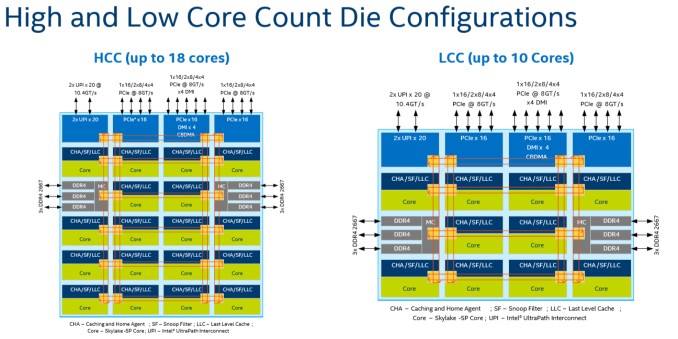

Intel uses its monolithic low-core-count (LCC) Xeon design for the 6, 8 and 10 core Skylake-X processors, as it has 10 cores in the silicon floor plan. AMD is currently highly competitive at 8 cores, with a much lower price point in the consumer space, making it hard for Intel to justify its 8-core Skylake-X design. Intel is also set to launch 8-core mainstream processors later this year, and is expected to extend its consumer ring-bus design from six cores to eight cores to do so, rather than transpose the 8-core LCC design using the latest Coffee Lake microarchitecture updates.

Because of all this, I am starting to be of the opinion that we will not see Intel release another LCC Xeon in the high-end desktop space in the future. AMD’s Threadripper HEDT processors run mainly at 12 and 16 cores, and we saw Intel ‘had to’* release its mid-range core count (called high core count, HCC) silicon design to compete.

*Officially Intel doesn’t consider its launch of 12-18 core Core i7/Core i9 processors a ‘response’ to AMD launching 16-core Threadripper processors. Many in the industry, due to the way the information came to light in spots and without a unified message, disagree.

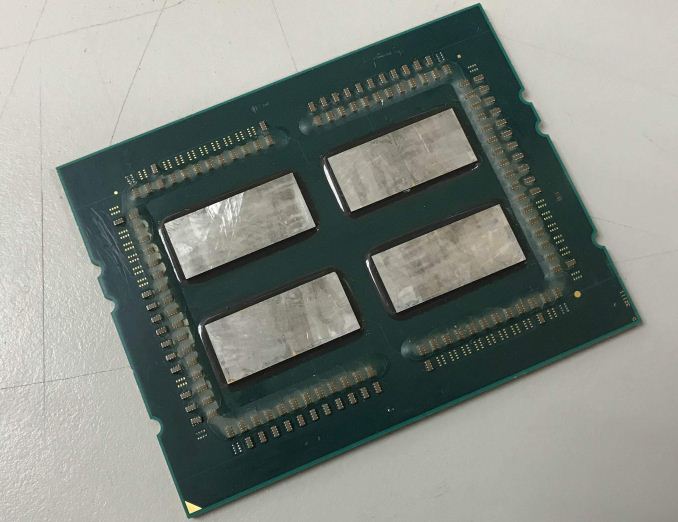

In this high-end desktop space, looking to the future, AMD is only ever going to push higher and harder, and AMD has room to grow. The Infinity Fabric, between different dies on the same package, is now a tried and tested technology, allowing AMD to scale out its designs in future products. The next product on the block is Threadripper 2, a minor update over Threadripper but based on 12nm and presumably with higher frequencies and better latencies as well. We expect to see a similar 3-10% uplift over the last generation, and it is likely to offer up to 16 cores in a single package when it comes out later this year.

With AMD turning the screw, especially with rumors of more high performance cores in the future, several things are going to have to happen from Intel to compete:

1. We will only see HCC processors for HEDT to begin with,

2. The base LCC design is relegated to low-end Xeons,

3. Intel will design its next big microarchitecture update with EMIB* in mind, and

4. To compete, Intel will have to put at least two dies on a single package.

*EMIB: Embedded Multi-Die Interconnect Bridge, basically a small silicon bridge embedded in the package PCB/substrate that connects two chips at high bidirectional speed without needing a bulky full-size interposer. We currently see this technology on Intel’s Core with Radeon RX Vega (‘Kaby Lake-G’) processors in the latest Intel NUC.

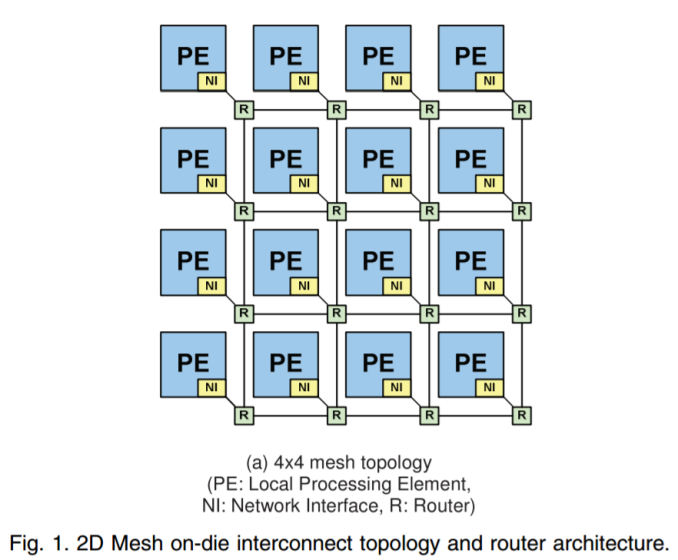

The next generation of server-class Xeon processors, called Cascade Lake-SP, is expected either this year or early next (Intel hasn’t stated), and we believe it to be a minor update over the current Skylake-SP. Thus options (1) and (2) could happen with CL-SP. If Intel wants to make the mainstream platform on Coffee Lake go up to 8 cores, the high-end desktop is likely to only see 10 cores and up. The simple way to do this is to take the HCC core design (which could be up to 18 cores) and cut it down as necessary for each processor. Unless Intel are updating the LCC design to 12 cores (not really feasible given the way the new inter-core mesh interconnect works, image below), Intel should leave the LCC for the low core count Xeons and only put the HCC chips in the high-end desktop space.

Representation of Intel's Mesh topology for its SP-class processors

Beyond CL-SP, for future generations, options (3)+(4) are the smarter paths to take. EMIB adds additional expense for packaging, but using two smaller dies should have a knock-on effect with better yields and a more cost effective implementation. Intel could also leave out EMIB and do an intra-package connection like AMD.
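The yield argument can be made concrete with a rough sketch. The snippet below uses a simple Poisson defect model, which is a textbook approximation and not anything Intel or AMD has published. The defect density and die area are hypothetical placeholders chosen only for illustration, and the model ignores packaging cost, the cost of the EMIB bridge itself, and the salvage of partially defective dies as cut-down parts.

```python
import math


def poisson_yield(area_mm2: float, defects_per_mm2: float) -> float:
    """Fraction of dies with zero defects under a simple Poisson model."""
    return math.exp(-area_mm2 * defects_per_mm2)


def silicon_per_good_package(die_area_mm2: float, dies_per_package: int,
                             defects_per_mm2: float) -> float:
    """Wafer area (mm^2) consumed per working package.

    Assumes every die is tested before assembly, so only good dies
    are ever placed on a package.
    """
    good_fraction = poisson_yield(die_area_mm2, defects_per_mm2)
    return dies_per_package * die_area_mm2 / good_fraction


# Hypothetical numbers, for illustration only.
DEFECT_DENSITY = 0.002      # defects per mm^2
MONOLITHIC_AREA = 480.0     # mm^2 for one large die

mono_cost = silicon_per_good_package(MONOLITHIC_AREA, 1, DEFECT_DENSITY)
split_cost = silicon_per_good_package(MONOLITHIC_AREA / 2, 2, DEFECT_DENSITY)

print(f"monolithic: {mono_cost:.0f} mm^2 of wafer per good package")
print(f"two dies:   {split_cost:.0f} mm^2 of wafer per good package")
```

Under these assumptions the two-die package consumes less wafer area per working part, which is the knock-on effect described above. Whether that saving outweighs the added packaging expense depends on figures that are not public.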

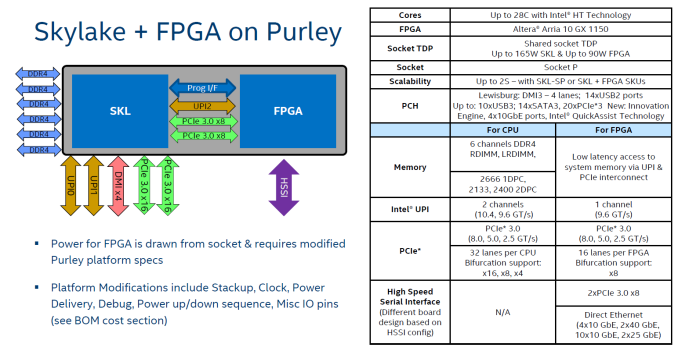

One question is whether Intel’s current library of interconnects, i.e. the ones that are competitors or analogues to AMD’s Infinity Fabric, is up to the task. Intel currently uses its UPI technology to connect sockets in 2-socket, 4-socket and 8-socket platforms. Intel also uses it in the upcoming Xeon+FPGA products to combine two chips in a single package using an intra-package connection, but it comes at the expense of limiting those Xeon Gold processors to two sockets rather than four (this is more a consequence of the Xeon Gold having only 3 UPI links). We will have to see if Intel can appropriately migrate UPI (or other technologies) across EMIB and over multiple dies in the same package. On Intel’s side, those dies might not need to be identical, as AMD’s are, but as mentioned, AMD already has its Infinity Fabric in the market and selling today.

The question will be whether Intel has had this in mind. We have seen ‘leaks’ in the past of Intel combining two reasonably high-core count chips into a single package, but we have never seen products like it in the market. If these designs are flying around Intel, which I’m sure they are, are they only for 10nm? Given the delays to 10nm, are Intel still waiting it out, or will they back-port the design to 14nm as the delays grow?

Intel’s Dr. Murthy Renduchintala, in a recent JP Morgan investment call, was clear that 10nm high volume manufacturing is set for 2019 (he didn’t say when), but that Intel is learning how to get more design wins within a node rather than waiting for new ones. I would not be surprised if this is one project that gets brought into 14nm in order to be competitive.

If Intel hasn’t done it by the time AMD launch Zen 2 on 7nm, the 10-15 year one-sided seesaw will tip the other way in the HEDT market.

Based on previous discussions with one member of the industry, I do not doubt that Intel might still win in absolute raw, money-is-no-object performance with its best high-end $10k+ parts. They are very good at that, and they have the money and expertise for these super halo, super high-bin monolithic parts. But if AMD makes the jump to Zen 2 and 7nm before Intel comes to market with a post-Cascade Lake product on 10nm, then AMD is likely to have the better, more aggressive, and more investment friendly product portfolio.

Competition is good.

30 Comments

DanNeely - Friday, June 1, 2018 - link

I agree that the 4x4 10+2+4 layout doesn't make sense if they're going to put 8 cores in next gen mainstream parts. I'm not convinced that Intel will only have a single HEDT/Xeon layout in the future. Instead, I'd expect both the LCC and HCC meshes to grow.

A 5x4 layout for LCC could cover 10/12/14 core options at the lower end of the range.

HCC needs to grow too, a 5x6 layout - up from 4x6 - would add room for 2 more UPI lanes or 16 more PCIe lanes (depending on which Intel judges most needed), and 23 cores. If the odd core count itself is a problem, they could leave connectivity the same and offer 24 cores instead.

XCC would need to grow too, going from 6x6 to 6x8 would let them do 40 cores with IO unchanged, or 36 if they decide to add more ram/PCIe/UPI connections. More memory controllers might be a good option so they could slot in lots of Optane DIMMs while still having plenty of DRAM available for application code and data that doesn't need to be persisted and which changes too frequently for Optane.
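The tile arithmetic in the comment above can be checked with a short sketch. It assumes, following the "10+2+4" notation, that every mesh tile is either a core, one of 2 memory controller tiles, or an IO tile. The IO tile counts for HCC and XCC are inferred from the commenter's figures and are not taken from Intel documentation.

```python
def core_tiles(rows: int, cols: int, memory_tiles: int = 2, io_tiles: int = 4) -> int:
    """Tiles left for CPU cores once memory and IO tiles are subtracted."""
    return rows * cols - memory_tiles - io_tiles


# Grid sizes and IO tile counts as read from the comment (assumptions).
layouts = {
    "LCC 4x4 (current)": core_tiles(4, 4, io_tiles=4),
    "LCC 5x4 (proposed)": core_tiles(5, 4, io_tiles=4),
    "HCC 4x6 (current)": core_tiles(4, 6, io_tiles=4),
    "HCC 5x6, one extra IO tile": core_tiles(5, 6, io_tiles=5),
    "HCC 5x6, IO unchanged": core_tiles(5, 6, io_tiles=4),
    "XCC 6x6 (current)": core_tiles(6, 6, io_tiles=6),
    "XCC 6x8, IO unchanged": core_tiles(6, 8, io_tiles=6),
}

for name, cores in layouts.items():
    print(f"{name}: {cores} cores")
```

With this accounting the grids reproduce the 10, 14, 18, 23, 24, 28 and 40 core figures quoted in the comment.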

milkywayer - Friday, June 1, 2018 - link

I'm just thankful to AMD for forcing Intel to move from the 4 core BS that intel milked for almost a decade. Competition does wonderful things. Intel needed the kick in the butt.

III-V - Friday, June 1, 2018 - link

I doubt AMD had anything to do with that. Where they absolutely had an effect is with pricing, but the existence of the 6 core part was a long time coming. And the "4 core" stuff wasn't BS -- Intel pursued graphics over core count, because it made more sense to them.

It was likely that we'd have seen a 6 core part on 10nm, but due to delays, we saw that come on 14nm so that there was something new to keep OEMs happy.

Ket_MANIAC - Friday, June 1, 2018 - link

Oh no, it was my pet dog who ordered Intel to release all the products it did in 2017. AMD? What's that?

FreckledTrout - Friday, June 1, 2018 - link

I hear this argument a lot, that Intel had to plan for it way before now so it could not have been AMD that caused it. If I had to guess Intel had 6-core designs for mainstream CPU's for at least two generations back. It's not like they got a new process node and found all this extra die space, no they are still running on 14nm and have a cheap 6-core CPU now. The fact that we see 6 cores on the mainstream CPU's is directly attributable to AMD aka competition.

Alexvrb - Friday, June 1, 2018 - link

They had the configurations "waiting in the wings" but as FreckledTrout points out they had no intention of moving 6+ cores into the mainstream quite this soon. Now they're talking 8 cores on a mainstream platform.

If you buy the official company line, it's all just a big coincidence. Indeed, they ignore products from other companies, plowing ahead with blinders on, totally isolated from the outside world.

FullmetalTitan - Saturday, June 2, 2018 - link

Based on the testimonials of former Intel employees the "blinders on" mentality does exist, but it's more of a problem at a higher level of design (see the 10nm debacle due to stubbornness) than consumer facing product segmentation.

FullmetalTitan - Saturday, June 2, 2018 - link

"Intel pursued graphics over core count, because it made more sense to them."

What? Intel integrated graphics are like 2 generations behind AMD APUs. If you mean they pursued discrete graphics instead, that commitment of resources happened after ~8 years of 4-core parts being the top of the mainstream offerings.

mode_13h - Sunday, June 3, 2018 - link

The point was that their graphics core count (and width) continued to increase as the manufacturing node decreased, even while their mainstream CPU core counts remained constant. So, it looks an awful lot like they spent much of the additional silicon budget afforded by die shrinks to grow their iGPU instead of adding more CPU cores.

In 2010, their 32 nm Nehalem CPUs had Gen5 iGPUs with 12 EUs, each featuring 4-wide SIMD.

In 2012, their 22 nm Ivy Bridge CPUs had Gen7 iGPUs with 16 EUs, each featuring 8-wide SIMD.

In 2013, Haswell extended the mainstream up to 20 EUs.

In 2015, their 14 nm Broadwell CPUs had Gen8 iGPUs with 24 EUs.

Their mainstream CPUs are still at 14 nm and have iGPUs with 24 EUs.

Lolimaster - Friday, June 1, 2018 - link

Then you see how all of that seems idiotic vs AMD's approach.

14-12nm

4core CCX, dual-CCX package for HEDT/EPYC (up to 32cores on a single socket)

7-7nm+

6/8core CCX, dual-CCX package for HEDT/EPYC (up to 64cores on a single socket)

HCC/LCC from intel just got OBSOLETE.